I'm sorry, but I've been hearing about some "top-secret", Area 51-type stuff called "quantum computing" and how it's (according to some dumb YouTube video) "100 million times faster" than traditional computing, and they always say that it's because of some magical thing called a "qubit". Now, I'm no physicist or anything, and people act like you need to be one to understand it:

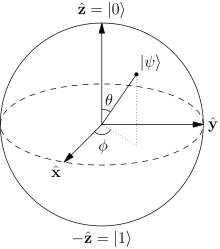

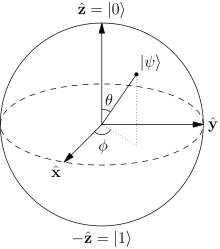

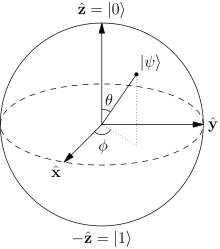

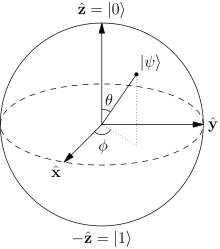

(What the heck is this, a math problem?)

But the somewhat limited definition that I've heard is that "unlike traditional computing where everything is either true or false, in quantum computing, you can have either true or false and both at the same time". Now, unless the people behind quantum computing are God, that's impossible given how they're trying to explain it. I can have a white room, I can have a black room, I can have a zebra striped room, and I can have a gray room, but I can't have a room that's fully white and black at the same time. What I think they're just trying to say is that there's just a third state, like instead of "0" and "1", I have "0", "1", and "2". Now, if this is true, it doesn't seem very helpful... Is it that, as a side effect of being "100 million times faster", it's like this, or does this actually somehow make it "100 million times faster"? Does anyone even really know? Everyone I've heard try to explain it doesn't seem to, and I certainly don't.

It's more or less a parallel computing paradigm, but instead of having two processors side by side you can have two processors "entangled" into one.

Basically it's something that if they can make it cost effective, they can use it to get more power into a smaller space. That's really all it is. Right now it's a long, long way from being cost effective.

So basically, it's a fairly simple concept that everyone is freaking out about as if it's some sort of space alien technology. Nothing out of the ordinary...

The dream is that because qubits combine (entangle) exponentially (i.e. N qubits is equivalent to 2^N bits), there's a lot of potential there, but how to utilize this effectively is an ongoing research question.
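A rough way to see where the exponential comes from: to simulate N qubits on a regular computer you have to track one complex amplitude for every possible bit pattern, so the state needs 2^N numbers. The sketch below is a minimal pure-Python state-vector simulator written only for this illustration (the function names are hypothetical, not from any quantum library). It puts the value 7 into a 3-qubit register as the pattern |111>, then applies a Hadamard gate to each qubit, which spreads the state evenly over all 8 patterns. Measuring still only hands back 3 ordinary bits: one pattern, picked at random with probability |amplitude|^2.

```python
import math

def basis_state(n_qubits, value):
    """State vector for a register holding one definite classical value.

    An n-qubit register needs 2**n amplitudes; all the weight sits on `value`.
    """
    state = [0j] * (2 ** n_qubits)
    state[value] = 1 + 0j
    return state

def hadamard(state, qubit):
    """Apply a Hadamard gate to one qubit (bit position `qubit`)."""
    h = 1 / math.sqrt(2)
    bit = 1 << qubit
    new = list(state)
    for i in range(len(state)):
        if i & bit == 0:  # visit each (bit=0, bit=1) pair of patterns once
            a, b = state[i], state[i | bit]
            new[i] = h * (a + b)
            new[i | bit] = h * (a - b)
    return new

def probabilities(state):
    """Chance of measuring each bit pattern: |amplitude|**2."""
    return [abs(a) ** 2 for a in state]

if __name__ == "__main__":
    s = basis_state(3, 7)  # 7 == 0b111, i.e. |111>
    print(probabilities(s))  # all the weight on index 7
    for q in range(3):
        s = hadamard(s, q)
    print(probabilities(s))  # 8 equal chances of 1/8 (up to rounding)
```

Adding one more qubit doubles the length of the list, which is why simulating big quantum machines on ordinary hardware gets out of hand so quickly.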

To get a more intuitive understanding of quantum computing, you would want to learn about the principles of quantum mechanics:

uncertainty principle, probability functions, duality, etc.


It's kind of a messy thing to wrap your mind around, but no, there is not a third state. Traditional computers can be though of deterministic. A given set of inputs gives the same output given the function evaluating it. For example, 1 + 1 always = 2.

The results from quantum computers, at least based on what I know now, will always be probabilistic. You might get something like 1+1 has a 99.9999% chance of being one.

One big benefit of quantum computing is that it can check more possible input states simultaneously, making it good for 2^n search type problems.

hackfresh wrote:

Traditional computers can be though of deterministic.

I assume you mean "thought"?

hackfresh wrote:

You might get something like 1+1 has a 99.9999% chance of being one.

I assume you mean two?

Now, I don't have a clue about quantum mechanics or whatever and I don't really care enough about this to learn it, but in what specific scenario would a quantum computer have a more efficient way to find the solution than a regular computer, and how would the quantum computer go about finding the solution vs. a regular computer?

With how rainwarrior said that "N qubits is equivalent to 2^N bits", it seems like this would be useful in itself, because there'd be less data sent for the same amount of information (somehow), assuming it'd be just as easy to move 1 bit vs 1 qubit.

You know, do these special quantum processor things even use transistors? (Actually, are there even logic gates?) Again, if I need to learn about quantum mechanics to even grasp basic principles of quantum computing and how good it is, then just forget it. It's just hard to hear "100 million times faster" and not have questions.

There's a few different schools of thought on it.

One is to create algorithms that are well suited to quantum computation. Like, I think the general idea is that reading all the output states is difficult, so it works best if you have a lot of input bits and only need to read some of the output. There's a quantum integer factorization algorithm, which supposedly would invalidate RSA encryption if it could be run on a suitable quantum computer (no such computer exists yet).
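The factoring algorithm in question is Shor's algorithm, and only one step of it is actually quantum: finding the period r of a^x mod N (the smallest r with a^r mod N = 1). Everything around that step is ordinary number theory. The sketch below is a minimal illustration of that classical wrapper with the period found by brute force, which is exactly the slow part a quantum computer is supposed to replace, so it only works for tiny N. The function names are made up for this example.

```python
import math

def find_period(a, n):
    """Smallest r > 0 with a**r % n == 1 (needs gcd(a, n) == 1).

    This brute-force loop is the step Shor's algorithm does on a quantum
    computer; classically it takes time that grows with n itself.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def shor_classical_part(n, a):
    """Try to split n using base a; returns (p, q) or None if a was unlucky."""
    g = math.gcd(a, n)
    if g != 1:
        return g, n // g  # a already shares a factor with n
    r = find_period(a, n)
    if r % 2 == 1:
        return None  # odd period: pick another a
    y = pow(a, r // 2, n)
    if y == n - 1:
        return None  # y is -1 mod n, which gives only trivial factors
    p = math.gcd(y - 1, n)  # y*y == 1 mod n, y != +-1, so p is a real factor
    return p, n // p

def factor(n):
    """Try bases 2, 3, 4, ... until one of them splits n."""
    for a in range(2, n):
        result = shor_classical_part(n, a)
        if result:
            return result
    return None

if __name__ == "__main__":
    print(factor(15), factor(35))
```

For 15 with a = 7 the powers go 7, 4, 13, 1, so r = 4, y = 7^2 mod 15 = 4, and gcd(3, 15) = 3 gives the factor.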

The other school of thought is that eventually you could write a compiler to automatically organize your code into patterns that will fit the quantum paradigm. This would make it a lot more general purpose, and make it a lot more like regular parallel computing. This is an ongoing area of research.

The current state of quantum computing is mostly theoretical. We can, of course, already simulate the finished quantum computer with a regular computer (just slowly, i.e. emulation). There are a few real quantum computer implementations in research labs, but they're basically only up to doing computations like 2+2=4 very slowly right now. Still in the "because we can" stage.

Espozo wrote:

It's just hard to hear "100 million times faster" and not have questions.

You can kinda just take it for granted that computers are going to be faster in the future, quantum or otherwise. It's kind of pointless to give any figures like that. I think current real quantum computers are probably easily 100 million times slower than current low-end computer hardware.

The probabilistic thing is resolved by redoing it enough times until you meet your confidence interval. Regular computation also has a probabilistic behaviour; it's just got a very high level of confidence. Integer factorization is a well suited task because it's slow to compute but very quick to verify. You can just keep redoing it (i.e. increasing confidence) until verification passes. The idea is that the amount of redoing is linear (or something sub-exponential anyway), but the computational gain with each qubit is exponential, so eventually you overtake it with enough qubits.
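The "keep redoing it until verification passes" idea can be put into numbers. If one run gives a correct answer with probability p, and answers are cheap to check (for factoring, just multiply the factors back together), then after k independent runs the chance that none of them succeeded is (1 - p)^k. The sketch below uses hypothetical helper names, and the success probability is an assumed input, not a property of any real machine. It computes how many runs that takes and shows the retry loop with a stand-in for the noisy solver.

```python
import math

def runs_needed(p_success, max_failure):
    """Smallest k with (1 - p_success) ** k <= max_failure."""
    return math.ceil(math.log(max_failure) / math.log(1 - p_success))

def solve_with_retries(noisy_solver, verify, max_runs):
    """Call a (hypothetical) unreliable solver until an answer checks out.

    Returns (answer, attempts used), or (None, max_runs) if nothing verified.
    """
    for attempt in range(1, max_runs + 1):
        candidate = noisy_solver()
        if verify(candidate):
            return candidate, attempt
    return None, max_runs

if __name__ == "__main__":
    # Even a coin-flip success rate needs only 20 runs for a one-in-a-million failure chance.
    print(runs_needed(0.5, 1e-6))
    # Fake solver for "find a factor of 15" that is wrong twice before it is right.
    guesses = iter([4, 6, 3])
    print(solve_with_retries(lambda: next(guesses),
                             lambda c: 1 < c < 15 and 15 % c == 0,
                             max_runs=10))
```

The number of runs grows only with the logarithm of the confidence you ask for, which is why the repetition cost stays manageable.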

The real problem isn't the quantum probability; that's well enough behaved by itself that they can deal with it. The problem is noise and stability, environmental interference, etc. The devices involved are so sensitive that it's really hard to keep the noise from overpowering the signal. They're trying to do computations using individual electrons; pretty tricky stuff.

So, instead of actually solving problems, it just kind of plugs information in randomly, and sees if the random thing it plugged in made the problem true? It's like with something like 602 / 7 = x: instead of taking the time to find out how to divide 602 by 7, you'd just plug in random numbers for x? Is there some sort of method to the madness? I mean, what if it could somehow tell whether what it plugged in was further away from or closer to solving the equation than what it did earlier, and compensate? It seems like some sort of weird analogue technology, especially when you started mentioning "noise", like a coaxial cable.

rainwarrior wrote:

The problem is noise and stability, environmental interference, etc. the devices involved are so sensitive that it's really hard to keep the noise from overpowering the signal.

It kind of seems like quantum computing would be better for some things, while regular computers would be better for others. Would a theoretical device from the future likely have both quantum and traditional processors in it for dedicated operations? I mean, something like addition is very simple on traditional computers, unless quantum computers can "guess" so fast that they'd overtake it either way.

Espozo wrote:

So, instead of actually solving problems, it just kind of plugs information in randomly, and sees if the random thing it plugged in made the problem true? It's like with something like 602 / 7 = x: instead of taking the time to find out how to divide 602 by 7, you'd just plug in random numbers for x? Is there some sort of method to the madness?

It's not exactly what you think. It's more like when they do an operation, say the result has two states. One of those states is that it will read 1 75% of the time, and the other state is that it will read 1 20% of the time. The probability is very predictable from quantum theory, you just have to do the operation enough times to figure out if you're in the 75% state or the 20% state. Figuring out which of these two states it was is equivalent to reading a 1 or a 0 from a binary bit.
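The "75% state vs 20% state" readout can be modelled as a biased coin: each run reads 1 with one of two known probabilities, and you guess which state you're in from how many 1s come back. The sketch below uses hypothetical names and two assumptions of its own: both states are equally likely beforehand, and the guess uses a threshold halfway between 75% and 20%. It computes the exact chance of guessing wrong for a given number of runs, which shows how repeating the operation buys confidence.

```python
from math import comb

def guess_state(ones, shots, p_a=0.75, p_b=0.20):
    """Guess "A" (reads 1 with prob p_a) or "B" (prob p_b) from a count of 1s."""
    threshold = (p_a + p_b) / 2
    return "A" if ones / shots > threshold else "B"

def misread_probability(shots, p_a=0.75, p_b=0.20):
    """Exact chance that guess_state picks the wrong state, assuming 50/50 odds."""
    error = 0.0
    for k in range(shots + 1):
        # Binomial probability of seeing exactly k ones under each state.
        prob_k_if_a = comb(shots, k) * p_a**k * (1 - p_a) ** (shots - k)
        prob_k_if_b = comb(shots, k) * p_b**k * (1 - p_b) ** (shots - k)
        if guess_state(k, shots, p_a, p_b) == "A":
            error += 0.5 * prob_k_if_b  # really B, but we said A
        else:
            error += 0.5 * prob_k_if_a  # really A, but we said B
    return error

if __name__ == "__main__":
    for shots in (1, 5, 25):
        print(shots, misread_probability(shots))
```

With these defaults a single run guesses wrong 22.5% of the time, five runs bring that to about 8%, and by 25 runs it is well under 1%.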

The actual quantum calculation has a definite result. The probability stuff is just about how you read that result back. Again, the less you have to read back, the more suitable the algorithm is for quantum computing.

Espozo wrote:

It kind of seems like quantum computing would be better for some things, while regular computers would be better for others.

The first practical quantum computing applications, if they ever happen, are going to be very specialized, yes. (That was the first approach I mentioned.)

Quote:

...unless quantum computers can "guess" so fast that they'd overtake it either way.

This is the second approach... With enough qubits and a suitable compiler, theoretically it would overpower the traditional approach. We have to prove first that a physical quantum computer is scalable in a practical way, though.

Anyhow, I don't know why anyone would be excited about quantum computing now, unless they're a researcher. The theory is sound, I think, but the question of whether it can be made practical is very much open. I put it in a similar category with cold fusion.

I don't have that much knowledge of quantum computers or any of this; it confuses me too. But I believe it's not a computer in the normal sense of programming and running code. Instead, like the "n qubits = 2^n bits" thing, it is itself just a new era of problem-solving machines. Like an FPU in a computer, but it computes on a different level of complexity, and is just a lot faster than the normal brute force which our current computers do today. Instead of programming it to do something, you inject the qubits to be something certain, give it other tangled qubits, and from that you can eventually derive the information needed from the qubit stats to find your answer to the problem, the way a brute-force modern computer could, except the modern computer isn't nearly as fast. That's why it gets talked about with encryption: encryption without the keys needs the brute-force stateful information to be decrypted, which is hard to find, but that's where quantum state computing can make the calculations easy. That is an area of computing we haven't done anything besides brute-force on algorithms, so it has lots of potential to further that field in general.

3gengames wrote:

That is an area of computing we haven't done anything besides brute-force on algorithms, so it has lots of potential to further that field in general.

I simply thought that it's because "that area of computing" could only be made possible using "brute-force". In fact, I thought just about everything with any form of intelligence operated almost exactly like a "traditional" computer, like the human mind, just very complex. I guess that explains how easy it is for us to recognize objects in images and stuff like that, and how difficult it is for us to do math problems that cheap $5 calculators can do in a split second.

3gengames wrote:

Like an FPU in a computer

I've always heard the term "Floating Point Unit", but I've never had a clue as to what that meant...

rainwarrior wrote:

It's more like when they do an operation, say the result has two states. One of those states is that it will read 1 75% of the time, and the other state is that it will read 1 20% of the time. The probability is very predictable from quantum theory, you just have to do the operation enough times to figure out if you're in the 75% state or the 20% state. Figuring out which of these two states it was is equivalent to reading a 1 or a 0 from a binary bit.

So by some freak chance, the computer could actually get the wrong answer for a problem? This seems like it would be extremely problematic in any scenario that isn't a microwave, like if it were used in servers holding credit card balances. It seems so tricky, because you want to do the operation as few times as possible for efficiency, but you also want the data to be correct... From what you're saying, though, it does seem like it solves the problem "normally", just a bunch of times.

3gengames wrote:

Instead of programming it to do something, you inject the qubits to be something certain, give it other tangled qubits, and from that you can eventually derive the information needed from the qubit stats to find your answer to the problem

What? Inject the qubits to be something certain? You mean like setting them to create a certain value? Tangled qubits? Are qubit stats just like the state in which the qubit is in, like how a bit can either be 0 or 1?

In fact, in the way you say a bit is either 0 or 1, how would you describe a qubit, if it is even possible in the same way? What would 7 be in "qubits"? Sorry if I'm still not really understanding this...

Espozo wrote:

So by some freak chance, the computer could actually get the wrong answer for a problem? This seems like it would be extremely problematic...

The possibility of error is inherent in every computing application. There is nothing special about quantum computing in this regard.

There is noise and interference and chosen levels of certainty in all computer engineering. A combination of materials and process is chosen to be as accurate as your application needs.

Internet traffic, hard drives, CDs, transmissions from Mars: these things all have error correction schemes, because we expect some amount of corruption.

In a CPU, usually it's not done with an error-correcting algorithm, I don't think (though there is such a thing as error-correcting RAM), but error tolerance is still a big factor in design. How close components can be put together, how fast they can switch, etc.: all of this affects the reliability of its operation, and there is an engineering decision to allow some % of error to happen. (Yes, the computer you're using DOES make mistakes some amount of the time.)
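For a concrete picture of what an error-correcting scheme does, here is a Hamming(7,4) code, a classic textbook example: 4 data bits are sent as 7, and any single flipped bit can be located and fixed. ECC memory uses codes from the same family (typically a wider extended Hamming code), but this small version is only an illustration, not what any particular RAM module implements.

```python
def hamming74_encode(d):
    """4 data bits -> 7-bit codeword laid out as positions 1..7:
    [p1, p2, d1, p3, d2, d3, d4], parity bits at the power-of-two positions."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]

def hamming74_decode(c):
    """Fix up to one flipped bit in a 7-bit codeword and return the 4 data bits."""
    c = list(c)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3  # 1-based position of the bad bit, 0 if none
    if syndrome:
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]

if __name__ == "__main__":
    word = hamming74_encode([1, 0, 1, 1])
    word[5] ^= 1  # simulate a single bit getting corrupted
    print(hamming74_decode(word))  # the original data comes back: [1, 0, 1, 1]
```

Two flipped bits in the same codeword would fool this code, which is the same "pick your tolerance" trade-off described above.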

Quantum computing is exactly the same in this regard: do the operation until you meet your target % of confidence.

On the NES, if we're using DPCM while reading the controller, we simply re-read the controller until we're confident we have valid input.
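On a real NES this re-read is written in 6502 assembly; the Python below only models the logic. It assumes the common approach of reading the pad repeatedly until two consecutive reads agree, on the reasoning that a DPCM-glitched read is unlikely to repeat identically. `read_controller` is a hypothetical callback standing in for the actual hardware read, and the retry cap is an arbitrary choice for this sketch.

```python
from typing import Callable

def read_until_stable(read_controller: Callable[[], int], max_tries: int = 8) -> int:
    """Keep reading until two reads in a row match, then trust that value."""
    last = read_controller()
    for _ in range(max_tries):
        current = read_controller()
        if current == last:
            return current  # two matching reads: confident it's valid input
        last = current
    return last  # gave up: fall back to the most recent read

if __name__ == "__main__":
    # Simulated reads: the first one was corrupted, the next two agree.
    reads = iter([0x81, 0x80, 0x80])
    print(hex(read_until_stable(lambda: next(reads))))
```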

The potential for error is not really that unusual a problem with quantum computing; at the high level, it's the same problem as other kinds of computers: you engineer the tolerance for what you need, create redundancies, checksums, etc., whatever is appropriate for your application.

The thing that is very unusual about quantum computing is just this potential for exponential growth of power, provided we can ever find a practical way to implement it. (I'm not terribly confident we'll ever get there, but I'm glad that people are looking into it, just in case.)

On my 2 quotes:

An FPU is part of a computer that handles floating point operations. These are just mathematical operations on numbers that can have fractional parts (decimal points) and can be very large or very small. They're doable in software, but a hardware FPU speeds them up, just like quantum computing can speed up other applications. Just Google "FPU" and start reading; I won't spoon-feed it. Google is just one website away.

And yes, with today's easy-to-do programming and inexpensive hardware, that area of computing can exist. If quantum computing gets good, it will also eliminate that field. Or at least change it a lot to how it is today.

But yes, you don't just read an atom and get information you need. You have to inject data into them, just like in binary. It's not exactly like that, but the idea is you put data in, wait, data out. Exponentially faster than modern computers is all.

I still love the idea of a quantum bogosort. "Shuffle your list, using quantum randomness. If the list is not in the desired order, destroy the universe."

Well, I remember "Floating Point Unit" got mentioned somewhere in this, and because it's pretty much useless to worry about quantum computing at this point if you're not a scientist (although many people do, for whatever reason), I want to ask something about that.

Now, according to this: https://en.wikipedia.org/wiki/Floating-point_unit, it seems just like computer hardware that does math. The thing is, it seems that this is already integrated in just about every single processor ever, unless there's a processor that can only move numbers, which is pretty much useless. I guess, could you call the multiplication/division units in the SNES "Floating Point Units"? I just don't see how this is a new concept.

It's not new, but at one point in time an FPU was the equivalent of the qubit in how much it could help with processing.

Well, actually, I do have something I was thinking about when it comes to qubits. I know it's a common misconception that we aren't using 32 or 64 bit processors and that we're using 128 + bit processors because the PlayStation uses a 32-bit and the N64 uses a 64-bit processor and that we've come a long way since then, and we have, but we are still using the same size processor because there is little reason to move numbers larger than 64 bits in one go. The thing about qubits everyone has been saying is that because there is so much checking for "1"s and "0"s to determine what would be a 1 or a 0 on a traditional computer (the whole 75% and 20% thing), it would actually be slower than a traditional computer if you didn't add up a bunch of qubits, as the amount of data you can have grows exponentially with each qubit you add. The thing is, only 6 qubits is apparently equal to 64 bits, which is what we've been using for over a decade as there's little need to change. I'm not getting this at all, am I...

Now take that 64-bit PC and brute-force 2048-bit encryption. I'll see you next millennium. A quantum computer would need a lot fewer qubits, and be able to do the task nearly instantaneously. Understand it yet? Just like the FPU. Floating point on the CPU is slow as molasses. An FPU in hardware was a big leap, and makes it not slow. Same idea, just different areas of computation. Without FPUs as good as they are now, GPUs wouldn't exist. Processors have FPUs, but the main application they shine in is 3D graphics, doing billions of calculations, which are all floating point, every second.

3gengames wrote:

Now take that 64-bit PC and brute-force 2048-bit encryption. I'll see you next millennium. A quantum computer would need a lot fewer qubits, and be able to do the task nearly instantaneously. Understand it yet?

So you're saying we'd see quantum computers (assuming we even do) with more than 6 qubits?

3gengames wrote:

Floating point on the CPU is slow as molasses.

I almost don't even see how it's possible... What instructions would you even have left? Would you still have AND, OR, and EOR? You couldn't even have things like CMP because it involves subtraction.

Yes, you'd need enough qubits to do the computation you wanted.

And floating point is the same as normal math: you add, subtract, multiply, divide, etc. Just with much more complicated numbers, which is done by the FPU for you. Please just read up on floating point numbers in programming. I'm using it as an example to relate the computation technology that is the closest leap next to quantum computing to make it easy, but I don't think you even know what floating point numbers are.

3gengames wrote:

Yes, you'd need enough qubits to do the computation you wanted.

I just can't see how working with numbers this large would ever be practical.

3gengames wrote:

And floating point is the same as normal math: you add, subtract, multiply, divide, etc. Just with much more complicated numbers, which is done by the FPU for you. Please just read up on floating point numbers in programming. I'm using it as an example to relate the computation technology that is the closest leap next to quantum computing to make it easy, but I don't think you even know what floating point numbers are.

Well, when I looked up "Floating Point Unit", I was led to believe that it was for addition, subtraction, multiplication, and division for all types of numbers, but then I looked up just plain "Floating Point" and again according to Wikipedia, it seems like "Floating Point Numbers" are just decimal numbers with whole numbers, and that this "Floating Point" is the decimal. It says that it's kind of like scientific notation, and I'm under the impression that it works like you have 2 numbers to make a floating point number:

Numbers like 1.265 (stored as 1265)

And numbers like 10^2 (I'm not sure how the exponent would be expressed, given that it can be negative)

To create 126.5

https://en.wikipedia.org/wiki/IEEE_floating_point

Edit: Sorry, the aforementioned article might be interesting also, but I meant to post this:

https://en.wikipedia.org/wiki/Floating_point
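The "digits plus a power of ten" picture above is close to how it works, except computers normally use powers of 2. The snippet below pokes at this with the Python standard library only: `Decimal` shows the base-10 view of 126.5, `math.frexp` splits it into a binary mantissa and exponent, and `ieee754_fields` (a helper written for this example) pulls out the raw bit fields of a 64-bit IEEE 754 double. The exponent field is stored with a bias of 1023, which is how negative exponents are handled without a separate sign for the exponent.

```python
import math
import struct
from decimal import Decimal

def ieee754_fields(x: float):
    """Return (sign, biased_exponent, fraction) bit fields of a 64-bit double."""
    bits = struct.unpack(">Q", struct.pack(">d", x))[0]
    sign = bits >> 63
    exponent = (bits >> 52) & 0x7FF    # 11 bits, real exponent + 1023
    fraction = bits & ((1 << 52) - 1)  # 52 bits after the implied leading 1
    return sign, exponent, fraction

if __name__ == "__main__":
    # Base 10: digits (1, 2, 6, 5) with exponent -1, i.e. 1265 * 10**-1.
    print(Decimal("126.5").as_tuple())
    # Base 2: 126.5 == 0.98828125 * 2**7
    print(math.frexp(126.5))  # -> (0.98828125, 7)
    # 126.5 == 1.9765625 * 2**6, so the stored exponent is 6 + 1023 = 1029.
    print(ieee754_fields(126.5)[:2])
    # -0.375 == -1.5 * 2**-2: sign bit set, stored exponent -2 + 1023 = 1021.
    print(ieee754_fields(-0.375)[:2])
```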

Espozo wrote:

Now, according to this: https://en.wikipedia.org/wiki/Floating-point_unit, it seems just like computer hardware that does math. The thing is, it seems that this is already integrated in just about every single processor ever

The difference between the math done in an FPU and the math done in the CPU is that in an FPU, the "exponents" determine how much shifting is done to the numbers when they are added or subtracted. This means you can represent numbers in the quintillions and numbers in the one-quintillionths with the same data type.
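Here is a toy model of that exponent-driven shifting. It uses a made-up format (an 8-bit unsigned integer mantissa m and an exponent e, meaning m * 2**e) rather than real IEEE 754, and skips rounding, signs and guard bits, so treat it as a sketch of the idea only. To add, the operand with the smaller exponent is shifted right until the exponents match; low bits that fall off are simply lost.

```python
MANT_BITS = 8  # hypothetical toy format: mantissas must fit in 8 bits

def value(f):
    """Real number represented by a (mantissa, exponent) pair."""
    m, e = f
    return m * 2.0 ** e

def normalize(m, e):
    """Drop low bits until the mantissa fits, bumping the exponent each time."""
    while m >= (1 << MANT_BITS):
        m >>= 1
        e += 1
    return m, e

def toy_add(a, b):
    """Add two non-negative toy floats by aligning exponents first."""
    (ma, ea), (mb, eb) = a, b
    if ea < eb:  # make `a` the operand with the larger exponent
        ma, ea, mb, eb = mb, eb, ma, ea
    mb >>= ea - eb  # align: shift the smaller one right; its low bits fall off
    return normalize(ma + mb, ea)

if __name__ == "__main__":
    print(value(toy_add((192, -7), (192, -8))))   # 1.5 + 0.75 = 2.25
    print(value(toy_add((128, 10), (128, -10))))  # 131072 + 0.125: the small one vanishes
```

The second example shows the flip side of being able to hold huge and tiny numbers in the same type: adding them together can throw the tiny one away entirely.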

Prior to the i486DX and MC68040, the FPU was a separate coprocessor, model number 8087, 80287, 80387, 68881, or 68882.

Espozo wrote:

I know it's a common misconception that we aren't using 32 or 64 bit processors and that we're using 128 + bit processors

Misconception my rectum. SSE instructions, introduced with the Pentium III, work on 128-bit vectors. So do the AltiVec instructions in the PowerPC G4. AVX instructions, introduced with the Intel Core Sandy Bridge and AMD Bulldozer processors, work on 256-bit vectors.

Atari defines the "bits" of a platform as the width of the data bus to RAM or VRAM. By this definition, the NES and SMS are 8-bit, the TG16 and Super NES are 16-bit because the VDC/S-PPU data bus is 16-bit, the Genesis is 16-bit because the CPU data bus is 16-bit, and the Jaguar is 64-bit because the GPU data bus is 64-bit. The GBA is 16-bit, the 8088 is 8-bit, the 286 and 386SX are 16-bit, the 386DX and 486 are 32-bit, and everything from the Pentium through the first Athlon 64 is 64-bit. But bus width can be deceiving, as Rambus RDRAM and AMD HyperTransport use narrower buses at double or higher data rate. The N64 is 8-bit by this measure.

OK, just to make it clear: the FPU handles "floating point numbers", which are numbers that can have fractional parts. The CPU handles integers, which are much faster to process and can easily take up less space if they're small (and they're the only ones on which you can perform bitwise operations like AND or XOR or bit shifting).

In other words: integers (CPU) vs non-integers (FPU).

Espozo wrote:

It says that it's kind of like scientific notation

That's just how floating point numbers are stored internally (usually!), so the number of digits after (or before) the point isn't fixed. You get less precision with larger numbers and an absurd amount of precision with smaller numbers. This tends to work well enough for most math stuff. But this is probably something you shouldn't worry much about if you aren't a programmer =P
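To see the "less precision with larger numbers" effect directly, Python's `math.ulp` (available since Python 3.9) returns the gap between a double and the next representable one. The small `spacing` helper is written just for this illustration.

```python
import math

def spacing(values):
    """Pair each value with the gap to the next representable double."""
    return [(x, math.ulp(x)) for x in values]

if __name__ == "__main__":
    for x, gap in spacing([1e-10, 1.0, 1000.0, 1e16]):
        print(f"{x:>8g}  next double is {gap:g} away")
    # Past 2**53 even whole numbers get skipped, so adding 1 does nothing here:
    print(1e16 + 1 == 1e16)  # True
```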

rainwarrior wrote:

One is to create algorithms that are well suited to quantum computation. Like, I think the general idea is that reading all the output states is difficult, so it works best if you have a lot of input bits and only need to read some of the output. There's a quantum integer factorization algorithm, which supposedly would invalidate RSA encryption if it could be run on a suitable quantum computer (no such computer exists yet).

This has been interesting me for a while now. Do you think prime factorization will ever be able to be done better than exponential time on a traditional computer?

I came up with a really simple factorization algorithm, which I later found out was just called Trial Division, but I don't understand why this runs exponential time given the worst case (semiprimes).

For example, 100. The smallest prime factor is two, so the number is now 50, half of what it originally was. 50 / 2 is 25, / 5 = 5. Done. But now take a number like 4,611,686,112,916,668,371: the product of the prime numbers 2147483647 and 2147483693. Not an RSA number by any means, but it took about 22 seconds to factor. Obviously the time is exponential, but I really don't understand why. Without nitty-gritty optimization details, the number of checks would only need to be roughly the sum of all the factors, right? I read somewhere that the time grows exponentially with how many digits the number has or something, but not with how big the number actually is. (i.e. 2^41 doesn't take exponentially more time to factor than 2^40.) I know I'm swimming in a pretty deep pool right now.

My other question is, how are they "doing things" with quantum computers? I understand it's still only for really specialized things (as of April of this year, they factored 200,099 using Shor's algorithm). So are quantum processors a thing? Or are they just using physics or QM to make logic happen? What would an instruction set look like for a quantum computer?

It's "exponential" in the length of the input, which is the logarithm of the number being factored. For example, factoring a 20-digit semiprime into two 10- to 11-digit primes takes on the order of 10^10 trial divisions.

[Corrected math error]
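A minimal trial-division sketch makes the "exponential in the number of digits" point concrete. It counts how many candidate divisors it tries (2, then odd numbers only, which is one common simplification and not necessarily what the original poster's program does). For a semiprime whose factors are close together, the count is roughly half the square root of the number, so every two extra decimal digits multiply the work by about 10.

```python
def trial_division(n):
    """Factor n by trial division; return (prime factors, candidates tried)."""
    factors = []
    tries = 0
    d = 2
    while d * d <= n:
        tries += 1
        while n % d == 0:  # divide out every copy of this factor
            factors.append(d)
            n //= d
        d += 1 if d == 2 else 2  # after 2, only odd candidates
    if n > 1:
        factors.append(n)  # whatever is left over is prime
    return factors, tries

if __name__ == "__main__":
    print(trial_division(100))  # the 100 -> 50 -> 25 -> 5 example
    for n in (101 * 103, 1009 * 1013, 10007 * 10009):
        print(n, trial_division(n))  # ~10x more tries per 2 extra digits
```

By the same count, the 19-digit example above has 2147483647 as its smaller factor, so this sketch would try exactly 2^30 (about 1.07 billion) candidates before finding it, and a 2048-bit RSA modulus is hopelessly far beyond that.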

Sogona wrote:

I don't understand why this runs exponential time

I think the problem is that you can grow the size of your key exponentially. Adding a single bit doubles* your expected execution time for factoring it. Your solution is outpaced by the problem.

* Not entirely accurate, but you get the point.

Sogona wrote:

prime numbers 2147483647 and 2147483693

Today I learned that INT32_MAX is prime o_o

Sik wrote:

Today I learned that INT32_MAX is prime o_o

So are INT8_MAX, INT64_MAX, and INT128_MAX. These convenient numbers are Mersenne primes.

Joe wrote:

Sik wrote:

Today I learned that INT32_MAX is prime o_o

So are INT8_MAX, INT64_MAX, and INT128_MAX. These convenient numbers are Mersenne primes.

Doesn't seem to be true for INT64_MAX:

http://www.wolframalpha.com/input/?i=is+2%5E63-1+prime

Others are correct, though.

If x is composite, 2^x - 1 is also composite. And 63 = 3 * 3 * 7.
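The reason is the identity 2^(ab) - 1 = (2^a - 1)(2^(a(b-1)) + 2^(a(b-2)) + ... + 1), so every divisor d of the exponent gives a divisor 2^d - 1 of the number. A quick check in Python, using plain trial division for the small cases (the `is_prime` helper is written just for this example and is only practical up to roughly 2^40):

```python
def is_prime(n: int) -> bool:
    """Plain trial division; fine for small n, far too slow for 2**127 - 1."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True

if __name__ == "__main__":
    # Exponents k <= 31 for which 2**k - 1 is prime (INT8_MAX and INT32_MAX are in here).
    print([k for k in range(2, 32) if is_prime(2**k - 1)])  # [2, 3, 5, 7, 13, 17, 19, 31]
    # 63 = 3 * 3 * 7, so 2**3 - 1, 2**7 - 1, 2**9 - 1 and 2**21 - 1 all divide INT64_MAX.
    m63 = 2**63 - 1
    print([d for d in (3, 7, 9, 21) if m63 % (2**d - 1) == 0])
```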

I'd better learn how to make bread or fix a car, because there's no way I will be able to wrap my mind around this once real quantum computers are viable.

Luckily for me, I already have some mechanical knowledge.

thefox wrote:

Doesn't seem to be true for INT64_MAX:

...And that's what I get for posting when I'm supposed to be asleep.

Punch wrote:

I'd better learn how to make bread or fix a car, because there's no way I will be able to wrap my mind around this once real quantum computers are viable.

By the time quantum computers are viable, automation will have already taken care of that stuff as well =P

I don't know why fast food restaurants haven't automated preparing food more, especially with people demanding a higher minimum wage. It costs a lot of money initially, but it'll pay for itself in time. I think I heard McDonalds wants to put in kiosks for ordering, so that's a step forward.

Espozo wrote:

I don't know why fast food restaurants haven't automated preparing food more, especially with people demanding a higher minimum wage. It costs a lot of money initially, but it'll pay for itself in time. I think I heard McDonalds wants to put in kiosks for ordering, so that's a step forward.

It's kind of a question of reliability.

The kind of machine that can make a good-looking burger costs a lot to make, but also a lot to maintain. The more complicated and subtle a machine is, the more often it's going to break down, and I'm sure the cost of a qualified technician and repair is going to easily be 1000x higher than the cost of the employee you have to hire to replace it while it's on the fritz.

Why doesn't McDonalds cook your food with robots? Probably because the kind of burger a reliable machine would make is a pretty sorry-looking thing. ;P It'd be disgusting to their customers, who would stop coming back.

I've seen automated kiosks for ordering in Burger King and at Jack in the Box, but they didn't last too long at either place. I think generally the user experience has been to reject this kind of thing. Probably it can be implemented well (e.g. pizza delivery via website order form seems to work great), but I think most of the times it's been tried in fast food it's failed.

Espozo wrote:

I don't know why fast food restaurants haven't automated preparing food more, especially with people demanding a higher minimum wage. It costs a lot of money initially, but it'll pay for itself in time.

For now, I can't really quite believe it's anything more than a threat against metropolitan areas that have voted in favor of higher minimum wages.

If it were actually worth doing, the difference between paying for a ~$30k robot (and a service contract) shouldn't matter much whether you're paying humans a minimum wage of ~$18k/year or ~$30k/year.

FWIW they do automate lots of steps in the making of fast food. Particularly this applies to the supply chain that gathers and prepares the ingredients, and processes them into the components that go into the food.

Fast food is "fast" because they're mostly just assembling stuff from ready made parts, and performing the last stage of cooking/heating.

I've worked on assembly lines making food products. Generally companies automate whatever they can. Some stuff can be done easily by machines, and some stuff it's just more practical to hire a human to do.

It might change in the future as automation technology improves, but I doubt that buying a robot today to replace current uses of human labour will be cost effective in the long run. I'm pretty sure it's been thoroughly considered in cases like this (e.g. McDonalds certainly has). All of the machines have maintenance costs. They have to meet some target volume of reliability before they're worth replacing a human with.

rainwarrior wrote:

It's kind of a question of reliability.

Oh, I kind of forgot about that detail...

Never mind?

lidnariq wrote:

If it were actually worth doing, the decision to pay for a ~$30k robot (and a service contract) shouldn't depend much on whether you're paying humans a minimum wage of ~$18k/year or ~$30k/year.

Well, one's a single payment while the other is every year. Maintenance can't cost as much as the whole thing.

rainwarrior wrote:

The kind of machine

I wasn't thinking of anything nearly as complex as that. Well, I mean, no single piece of machinery would be that complex, but there'd be a lot more of it. Everything would run on a central computer that is directly linked to the kiosks. I was thinking you would have a couple of wheeled or treaded "tables" that move around, and there would be specialized mechanical arms (they don't need anything like fingers or the range of flexibility that robot had; if it works the grill, all it needs is something like a spatula) that would take a burger off the table robot and put it on the grill. When it's done, another robot would come back to carry it.

There would be QR codes that tell the robot where to be, and when it reaches its destination, it would send a signal to the main computer, which would pass it on to the machine at that station. If it doesn't get there, it would send out a signal to whatever human workers are there to fix it. The main computer would have to multitask all of this to ensure that everything is done as fast as possible.

The main problem I see with this is keeping the robot carrying the food sanitary (I'm not even sure how regular fast food workers do this. They'd have to at least touch the bun of a burger, and I doubt they change their gloves for every burger) and, mostly, getting the things at each station to work. For example, how would you get a robot to fish pickles out of a jar and place them on a sandwich without them falling off, and if they did, how would it know? You'd have to add a bunch of sensors and fancy AI that would drive up the price.

Yeah, the more I think about this, the dumber it sounds.

I wouldn't be surprised if this did happen one day, but I imagine it will be a slow progression.

Espozo wrote:

For example, how would you get a robot to fish pickles out of a jar

The same way you get a PCB-assembly robot to fish out individual chips.

Quote:

and place them on a sandwich without them falling off, and if they did, how would it know?

Prevent it by constructing the sandwich inside a container not much bigger than the bun. Did you know Big Mac sandwiches are constructed upside down and McDouble sandwiches aren't?

Quote:

You'd have to add a bunch of sensors and fancy AI that would drive up the price.

It depends on how much the sensors cost and how many staff they replace.

Well, I mean, it's all definitely possible, but what we want is profitable. Like I said, though, I wouldn't be surprised if production were made faster, which is profitable.

I think a large part of the reason there seems to be no push for this is that big businesses generally don't like to take risks, and if fast food ordering has worked with humans since forever, then why bother changing it?

I'll tell you, though, businesses will start to consider it if minimum wage ever reaches something insane like $15/hour (in current money).

Espozo wrote:

Well, one's a single payment while the other is every year. Maintenance can't cost as much as the whole thing.

It certainly can. That's the business model of a lot of companies, really, where the service costs are where they make their real profit.

Espozo wrote:

The main problem I see with this is keeping the robot carrying the food sanitary (I'm not even sure how regular fast food workers do this. They'd have to at least touch the bun of a burger, and I doubt they change their gloves for every burger)

Tongs can be used to pick up a bun. A spatula can be used to move cooked meat onto the bun. Condiments can be delivered with spoons and squeeze bottles. Et cetera. Go to a fast food restaurant and watch the food handlers and you'll get your questions answered pretty quick.

Gloves are only required for some stages of the process (usually the later stages). A lot of food preparation is just done with clean, bare hands. Gloves interfere with your sense of touch, which keeps you from feeling whether your hands have become dirty. In particular, cross-contamination needs to be avoided, like touching raw meat and then transferring the bacteria that live on it to anything else. You can handle meat with bare hands, but you'll have to wash them before you touch anything else. Gloves can help with that problem.

rainwarrior wrote:

That's the business model of a lot of companies, really, where the service costs are where they make their real profit.

Maybe fast food restaurants could start to hire engineers.

But yeah, I've never been in the back of any sort of restaurant before. Actually, I've been in the back of a Papa Johns once.

Just gonna say:

http://www.techinsider.io/wendys-worker ... ots-2016-5

And yeah, it's related to rising wages. Instead of raising wages, they'd rather replace employees with computers. Of course, now you have some people using it to make fun of those who wanted higher wages.

rainwarrior wrote:

(e.g. pizza delivery via website order form seems to work great)

To be fair, that's because you'd usually just call over the phone and the store owner would take the call, and a website just replaces the phone call with a submitted form (somebody still needs to check it), so it's not like anything was lost here.

What's lost is that some restaurants are making remote ordering available only to smartphone users by allowing orders to be placed through an iOS or Android app but not through the web.

A phone call wouldn't give you the web option either =P

Although I did notice the trend of making apps instead of sites (to the point that a lot of new services are smartphone-only and rely on your phone number instead of an account; PC support, if any, is an afterthought, and you need the phone just to sign up, Discord being a notable exception). Some people are really set on killing off PCs for real. But I guess that's another topic. I also wonder if this is why Google bought Android: searching apps may now be even more important than searching sites.

Punch wrote:

I'd better learn how to make bread or fix a car, because there's no way I will be able to wrap my mind around this once real quantum computers are viable.

I started reading Quantum Computing and Quantum Information just "for fun", and what do you know, I get it* now! I really believe this is going to be the future, and a viable quantum computer will be a major milestone for human civilization. Maybe we'll be better equipped to go one level up on the Kardashev scale by then.

*get it: barely understand a limited set of concepts regarding quantum computing. Wasn't it Feynman who said that "if you think you understand quantum mechanics, you don't understand quantum mechanics"?