I did...okay...at math in high school and college. Didn't knock anyone's socks off with grades. I'm decent enough with everyday arithmetic and trigonometry to find my way around in 2D game development. Like for example, I just integrated an atan2 routine into my current NES game to implement following behavior, and generated fixed-point cos/sin tables for the velocity using Python.
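For anyone curious what generating such tables can look like, here is a minimal sketch. The table size (256 angle steps per full circle) and the scale factor (256, i.e. 8 fractional bits) are assumptions chosen for illustration, not the values from my actual game, and `make_fixed_point_tables` is a hypothetical helper name.

```python
import math

def make_fixed_point_tables(steps=256, scale=256):
    """Return (sin_table, cos_table) as lists of signed integers.

    steps: number of angle subdivisions in a full circle (assumed value).
    scale: fixed-point multiplier; 256 means 8 fractional bits (assumed value).
    """
    sin_table = []
    cos_table = []
    for i in range(steps):
        angle = 2.0 * math.pi * i / steps
        # Scale the floating point result and round to the nearest integer.
        sin_table.append(round(math.sin(angle) * scale))
        cos_table.append(round(math.cos(angle) * scale))
    return sin_table, cos_table

sin_table, cos_table = make_fixed_point_tables()
```

On the game side you would multiply a speed by the table entry and then drop the fractional bits to get a per-frame velocity; the exact format depends on how the engine stores positions.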

But knowing how to USE math is way different from a deep understanding of it. Like, I find myself wondering: How does the "sin" function in most programming libraries work? When I look it up it sounds horrifyingly complex and I find my way to a Wikipedia page about Taylor series. I seem to vaguely recall learning somewhere that you can compute sin with a Taylor series. But...since I can pragmatically just USE the results of math functions for my games without having a really really deep understanding of why they work, I don't bother putting in the effort to understand them at that level.

I guess I was curious how many folks here are/were so good at math that you feel you have a proof-level depth of understanding of some of the types of math that we use daily as game programmers. I often feel frustrated when I can't TOTALLY understand something, but as I get older, pragmatism is taking a stronger hold and I'm less upset when I just have to use something instead of understand absolutely every facet of it down to the proof of a theorem. I WISH I could understand it all in its entirety, but...in a way math is the same thing as the open source programming world...there are tons of pragmatic results there for you to just USE, we don't all have to absorb absolutely all of how to derive all of it the way the original discoverers of these things did.

It's been 11 years since I've even done a math problem on paper, I think, I graduated college in 2006! All of the professional coding I've done hasn't involved any mathematics at all (in the sense I'm describing anyway). I maybe used a tiny, tiny bit of linear algebra once with some rotation animation or other on Android at some point in the past, but even that's been abstracted out so far at this point you barely need to know anything to use it.

I guess this is no different than, say, not exhaustively understanding how an OS works. It'd probably take me years to understand everything needed to write a small Unix-like kernel, for instance. By the same token it would probably take me years to learn how to prove all of the math that I use on a daily basis, exhaustively. It's just not worth it when you're after pragmatic results based on things that are available to use, I guess.

I used to be good at math, or at least pretty decent. In high school it was my favourite discipline by far, and I was pretty good. In uni however the level of expectations was much, MUCH higher and we had to solve problems that were so crazy, for example having multidimensional integrals to compute. It was almost insane. I managed to get by, but only just, which corrected my previous impression that I was good at math. I especially loved geometry, but I liked algebra as well.

In the 2nd year of uni this got even more insane, and unfortunately I had an absolutely awful professor who was full of hatred towards students and absolutely did not want us to learn, but just to prove to us that we sucked at math. So he put me off math, and since then I've avoided math like cancer. Which is a real shame, since I originally loved it!

Taylor's series is an extremely simple concept that allows you to do great interpolation around a point. If I were to code a sine function I'd probably just resort to lookup tables and interpolation; however, a Taylor series can greatly improve the interpolation, because instead of connecting the dots directly it hugs the shape of the function locally. Usually only one or two extra terms are necessary, but you can go as far as you'd like. If you use a Taylor series with more terms, the interpolation will be so good that you can make the lookup table much smaller; in extreme cases you could just have angles like 0°, 30°, 45°, 60° etc. and do the other angles with the Taylor series alone. The problem is that there could still be some discontinuity when switching from the Taylor approximation around one point to the approximation around the next point.
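A rough sketch of the table-plus-Taylor idea, in Python rather than anything NES-friendly. The 15° table spacing and the names `STEP`, `TABLE` and `sin_taylor_table` are made up for this illustration. It relies only on the facts that the derivative of sin is cos and the second derivative is -sin, so a table of (sin, cos) pairs is all the correction terms need.

```python
import math

STEP = math.pi / 12  # 15-degree table spacing (arbitrary choice for this sketch)
# One (sin, cos) pair per table angle, covering 0 to 360 degrees inclusive.
TABLE = [(math.sin(i * STEP), math.cos(i * STEP)) for i in range(25)]

def sin_taylor_table(x, terms=2):
    """Approximate sin(x) for 0 <= x <= 2*pi from the nearest table entry.

    terms=0 returns the nearest table value, terms=1 adds the slope term,
    terms=2 also adds the curvature term.
    """
    i = round(x / STEP)          # index of the nearest table angle
    s, c = TABLE[i]
    d = x - i * STEP             # signed distance from that table angle
    result = s
    if terms >= 1:
        result += c * d          # first derivative of sin is cos
    if terms >= 2:
        result -= s * d * d / 2  # second derivative is -sin, divided by 2!
    return result
```

With two correction terms the worst error over a full circle stays below about 0.001 even with this coarse table. The small jump mentioned above shows up halfway between two table angles, where the nearest entry switches.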

How good are you at doping silicon, fabbing plastic shells?

These strike me as about as relevant as grokking those proofs of how computers gen sin(θ), to game development.

Quote:

I'm decent enough with […] trigonometry to find my way around in 2D game development.

You know that is not common, right?

Myask wrote:

How good are you at doping silicon, fabbing plastic shells?

These strike me as about as relevant as grokking those proofs of how computers gen sin(θ), to game development.

Quote:

I'm decent enough with […] trigonometry to find my way around in 2D game development.

You know that is not common, right?

By decent enough I mean I know the basic definitions of sin, cos, and tan, how to solve for sides of triangles, converting between radians and degrees (or other subdivisions) etc. Super super simple stuff. That's not common!?

Bregalad wrote:

Taylor's series is an extremely simple concept that allows you to do great interpolation around a point.

Really simple eh?

like...why does it work? I've kind of gathered it's an infinite sum of derivatives of a function (with other terms in the formula I don't yet understand) which approximates the shape of the actual function, so if you can keep computing the derivative of a function you can get as much precision as you want for the function you're going for, right? So ok...but like the formula itself, WHY does it work. Why is there a factorial in the formula. etc. etc. Like, it's not bad to find out the high level definition of something and plug and chug and just use it, but ...I want to know how it was discovered and derived to begin with. It amazes me when I think about that somebody figured these things out, on paper.

Derivative of x^n = n·x^(n-1)

Do it again: n·(n-1)·x^(n-2)

And again: n·(n-1)·(n-2)·x^(n-3)

Looks like factorial, no?
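To spell out how that pattern puts a factorial into the Taylor formula, here is a short derivation. It assumes that f can be written as a power series around a point a with unknown coefficients c_k (that assumption is added here for illustration; it is not something every function satisfies).

```latex
\begin{align*}
f(x) &= \sum_{k=0}^{\infty} c_k \,(x-a)^k \\
\frac{d^n}{dx^n}(x-a)^k &= k\,(k-1)\cdots(k-n+1)\,(x-a)^{k-n} \\
f^{(n)}(a) &= n!\; c_n
  \quad\text{(terms with $k<n$ differentiate to $0$, terms with $k>n$ vanish at $x=a$)} \\
c_n &= \frac{f^{(n)}(a)}{n!}
\end{align*}
```

So dividing by n! just undoes the n·(n-1)·(n-2)·… that piles up when you differentiate the n-th power n times.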

lidnariq wrote:

Derivative of x^n = n·x^(n-1)

Do it again: n·(n-1)·x^(n-2)

And again: n·(n-1)·(n-2)·x^(n-3)

Looks like factorial, no?

Oh, cool! That makes sense (seeing the pattern there...still don't have any idea how somebody figured this out to begin with). Well, I still am confused because...I understand you can approximate the sin function with the derivative(s) of sin at just one point. What I don't get is how does it work that you can approximate a whole function just from the derivatives at one point?

lidnariq wrote:

Derivative of x^n = n·x^(n-1)

Do it again: n·(n-1)·x^(n-2)

And again: n·(n-1)·(n-2)·x^(n-3)

Looks like factorial, no?

When I look at stuff like this (which flies completely over my head) I realize I suck at math. I barely managed to pass calculus in college.

Myask wrote:

How good are you at doping silicon, fabbing plastic shells?

These strike me as about as relevant as grokking those proofs of how computers gen sin(θ), to game development.

Quote:

I'm decent enough with […] trigonometry to find my way around in 2D game development.

You know that is not common, right?

I would say that knowing how to prove / derive how a sine calculation or other math operation works can be very helpful knowledge in the right situation.

For instance, in school I was taught how a Catmull-Rom Spline is derived, but the process of learning that (combined with a prerequisite in linear algebra) made me realize how other interpolating curves may be derived in general. This has meant that at many points in my career I've been able to create a custom curve that best fits the situation at hand, rather than being stuck with whatever pre-fab solutions I can find.

This kind of knowledge often makes the difference between something that roughly fits together and barely works, and something that feels perfect and solid. In many cases this isn't even a tradeoff of time and effort: when you have the right knowledge often you can do something quickly and well, much better than adapting an acquired "black box".

Calculus in particular has tons of applications in 3D graphics. It comes up a lot in physics simulations, or even just some exploratory questions about gameplay (e.g. "how long will this level take to fill with water"). There's a lot of stuff that you can do really easily with the right knowledge of math that is very difficult to do otherwise.

Even bringing it back to sines and the NES, there's like a hundred different ways to calculate a sine, and in the right situation you might be able to make one that's very fast to calculate, numerically stable, no lookup tables / small code etc. but only suitable for just that specific purpose. If you don't have this kind of knowledge, you'll probably be stuck with a more generic solution, and you might even just give up on what you want to do when it's actually very feasible.

I don't think the comparison with doping silicon is even remotely fair here. There's tons of ways that being able to understand and customize a sine calculation can be directly useful to the problem of developing an NES game. You might not be able to see those possibilities without that knowledge, though. If you find yourself curious about what's inside your tool, why not take some time out to understand it?

There's plenty of situations where a ready made tool is fine to use, too. Especially in commercial development, it's very important to effectively manage your time. A well made pre-fab solution is probably better than a poorly made custom solution... though even just having some knowledge of what's going on inside will help you make a better/informed choice about which existing tool to use!

Knowing how to implement something isn't only useful for implementing it; it also gives you the ability to evaluate and understand other implementations, when to use them, and how long it would take to write it yourself. Often I would specifically choose to use a ready-made system because it's something I know a lot about and could make myself (and have an idea how much the labour involved is worth).

In a lot of cases the rough solution and the "perfect" solution are equivalent for the end user, but that's also something that's difficult to judge without experience. Math in particular has a lot of uses in software, many of which aren't obvious or easy to explain until you know that math. I definitely encourage taking a little break to push out the edges of your sphere of knowledge occasionally.

GradualGames wrote:

Oh, cool! That makes sense (seeing the pattern there...still don't have any idea how somebody figured this out to begin with). Well, I still am confused because...I understand you can approximate the sin function with the derivative(s) of sin at just one point. What I don't get is how does it work that you can approximate a whole function just from the derivatives at one point?

Yes, it works not just for sine, but for any sufficiently smooth function (strictly, one that is analytic around the point; mere continuity isn't enough).

e.g. if you pick a point on a curve f(a), you can approximate a point f(a+b) nearby by taking the slope and following a straight line to that nearby point.

The slope is just the first derivative, though, you can make a better approximation if you take into account whether it is curving up or down vs. that straight line slope... so you can take the "slope" of that derivative slope, or second derivative, to improve the estimate. Instead of following the straight line, you follow the straight line, plus a continual curve up or down to adjust from it...

This process can be repeated until you have the accuracy you want. If the function is a simple polynomial eventually you get a derivative that is just 0, and at that point you're calculating it exactly.

If you want more accuracy around a specific region of the function, you can pick your starting point there. Considering a sine, you might realize that an approximation of sine(0.3) near 0 might be more accurate than sine(50π+0.3), even though the target value should be the same. Each successive layer of approximation will maybe get you one more "curve" in your approximated function. Of course with sine you know it's periodic so you can just modulo 2π to keep everything in the "close" range, but there are lots of non-periodic functions out there you may want to approximate.
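Here's a small sketch of that sine(0.3) vs. sine(50π+0.3) point, using the standard Taylor series of sine around 0. The function names and the choice of 8 terms are just picked for this example.

```python
import math

def taylor_sin(x, terms=8):
    """Sum the first `terms` nonzero terms of the Taylor series of sin around 0."""
    total = 0.0
    term = x  # first term: x^1 / 1!
    for k in range(terms):
        total += term
        # Next term: flip the sign, multiply by x^2, and extend the factorial
        # in the denominator from (2k+1)! to (2k+3)!.
        term *= -x * x / ((2 * k + 2) * (2 * k + 3))
    return total

def taylor_sin_reduced(x, terms=8):
    """Fold x into [-pi, pi) using the period of sine, then use the same series."""
    reduced = (x + math.pi) % (2 * math.pi) - math.pi
    return taylor_sin(reduced, terms)

near = taylor_sin(0.3)                        # very close to math.sin(0.3)
far = taylor_sin(50 * math.pi + 0.3)          # wildly wrong: far too few terms this far from 0
fixed = taylor_sin_reduced(50 * math.pi + 0.3)  # close again after the modulo
```

The same 8 terms that are essentially exact at 0.3 give an astronomically large wrong answer at 50π+0.3, and the modulo brings it back into the region where the approximation is good.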

Anyhow, that's just the Taylor Series idea, there's lots of other approximation methods. Many approximation methods have undesirable instability that you should be careful to avoid. Again, hard to know where they can apply until you understand them and have experience with them, but I recommend taking it as far as you're interested. It's OK to use stuff you don't understand if it solves your problem adequately, just it's hard to know what you're missing until you do.

Wikipedia is also generally a very bad place to learn anything to do with math. I find most of the articles are written by experts who expect a lot of pre-requisite knowledge. Wikipedia isn't supposed to be a tutorial, either, but it's not even structured in a way that is suitable for learning these concepts anyway. If you're lucky there's good learning material in the "external links" but that's a crap shoot. Much better to learn math from the traditional sources: textbooks, school programs, good teachers, etc.

I've had a love/hate relationship with math for most of my life. I currently like it.

I was decent at math my first few years of school. I remember my dad teaching me the powers of 2 up until 8,192; and I don't think school'd even taught us the thousands yet. Then came multiplication and division of 3-digit numbers, which really screwed with me. I hated math for the next few years until I slowly but surely got better at it and finally got on the same level as most of the other kids.

Then sometime early in my 8th grade year I got hit by a car while riding my bike, resulting in a concussion. This was around the time they were prepping us for Algebra 1 (Basic solve-for-x linear equation stuff), so it was literally a foreign language to me for the rest of the year. I ended up, against the will of my parents, being put into what was basically the remedial math class my freshman year. Luckily though, a friend of mine in study hall showed me how to do a simple problem, and suddenly it all just clicked. Math for the next 4 years came surprisingly easy, to the point where I just read A Clockwork Orange in Algebra II for the last 2 weeks of class without paying attention to anything the teacher was saying and still aced the final.

Lol, sorry for telling my life story.

Fast forward to last year, and Calc 1 kicked my ass, but I managed to come out victorious. Calc II was rough too, but not as bad. I'll be honest, I still don't fully understand the Taylor/Maclaurin series; I'm probably gonna have to brush up on it before Calc 3 this fall. Anyways, I've decided I'm gonna try to minor in math. I plan on taking Multivariable calc, a class on DiffEQs, and then Real and Numerical Analysis.

I often feel shame on the subject of math, because i got behind the pace by some as early as 5th grade and barely managed senior high school math. I use mental arithmetic all the time, especially at work, and have learned some mildly useful everyday things (like interest on interest) from youtube tutors at a later point in life (it really helps when you can rewind and hear it again after some thinking). But i tend to have trouble keeping numbers in my head for more complex problems. Above all, my library of methods is small, and my understanding of the methods present is very limited. I'm probably at the bottom rank on these boards.

When i need to solve a particular problem, like needing to define the active area of a hit box for a button that's not square or circle and can't be solved with a visual vector layout, i look it up.

I've taught myself enough math to (adequately) understand and make simple analogue circuits (like an amplifier or filter). All the things i learned in BASIC/qbasic have proved useful when writing js-like scriptlets when animating in Adobe AfterEffects, but i could certainly get much better at it.

I sometimes feel a language barrier - both towards the language of mathematics, but also math in english. If i have one suggestion to improve how math is taught in any small language, it'd be to include an english glossary at the end of every chapter.

Math was my weakest subject at school, which I barely passed, and is the reason I wouldn't be able to get into any university to study the things I would like, such as electronics stuff. All the stuff that was taught made almost no sense the way it was presented, and things moved really quickly too: by the time I was almost understanding one thing there was a test that I failed or barely passed, and then another thing was brought up and you had to learn it. End result is nothing stuck, and pretty much nothing had any application so I couldn't even try to put those things to use somewhere so they could stick. Geometry was easy though, and only because I could put it to use outside the paper that was put under my nose.

On the bright side I vaguely remember the names of things and what they were supposed to be doing, so I can look things up over the internet and adapt the methods described to my needs. But that only works if things are actually described; a wall of formulas usually makes little sense and tends to abstract away the process of getting from input to output. If you feed things to me like to a CPU I'll immediately start seeing the connections and everything begins to make sense, even though the whole thing would look a whole lot bigger and messier the "programmer way" compared to the "mathematician way".

Sometimes I have a problem at hand that I don't really know a solution for so I write down the numbers, make graphs or other diagrams and seek the connections, sooner or later I have found a perfect or good enough solution and can carry on (and I would have no idea if what I made exists already and what it would be called). So in a way I'm terrible as I don't know much and not terrible because I can understand it if it is presented the right way and that I can come up with things needed on my own too.

Well... I was a maths major in university, since this was the only subject I was good at in secondary school (I sucked hard in any non-science subjects, such as history, geography and economics (right); for science subjects such as physics and biology I was average at best, and even for maths I sucked at arithmetic badly; that's saying something...).

Anyway, I graduated my university degree with a "not very bad" grade, and even continued to study for a master's degree in maths afterwards.

Then, I got this job, an editor for textbooks, primary school maths textbooks actually. So, soon my knowledge in maths stayed at primary school level thereafter.

I was good at math, getting top grades all through uni. It's definitely come in handy tons of times, particularly when optimizing things. In the 3d world it's a lot more common than in 2d, but still useful. Synthesizing curves like rainwarrior said, finding faster ways to do something, etc.

I think it's similar to CS education vs a self-taught programmer. With a good math background, you can jump straight to the best way, and not have to guess or research (kinda like tokumaru's logarithm surprise recently. Sorry for calling you out specifically, but that came to mind).

A self-taught programmer will know how to get something done, but he won't know the area of solutions, O notation, what is best used where, the tradeoffs. When getting my software engineering degree, I recognized multiple times things that I previously had no idea about, even though I could solve the issue. I had no idea some kinds of solutions even existed, let alone when I should use what. I only tried to use my hammers even when a screw appeared in front.

Gilbert wrote:

even for maths I sucked at arithmetic badly

I suck at arithmetic very hard. I cannot stand it when people ask "how much is 7 times 56" and I have to use a calculator and they say "I thought you were skilled at math?". This is NOT math. Math is about solving equations, geometry problems, etc... This is arithmetic, and it is *NOT* math.

Quote:

When I look at stuff like this (which flies completely over my head) I realize I suck at math. I barely managed to pass calculus in college.

Same here, however the calculus level required was very high so barely passing is already an accomplishment. The uni I was in is reputed to be extremely severe with math; basically no matter what you study you have to be very good at math or they'll make you fail. The grading system even has a "math" average and a "non-math" average, and you needed to pass both. My math average was always very close to the minimum for passing.

Quote:

I graduated my university degree with a "not very bad" grade, and even continued to study for a master's degree in maths afterwards.

I was an expert in the art of barely getting the grade required to pass. That held right up to and including the Master's degree.

Quote:

Wikipedia is also generally a very bad place to learn anything to do with math.

Yes. Wikipedia is a good source of info for many things but I noticed it sucks HARD in several areas, and definitely sucks when it comes to either math or religion, or health for that matter. The content is both extremely incomplete and inaccessible, because it uses specialist terminology that a random person looking things up won't know.

Quote:

Really simple eh?

like...why does it work? I've kind of gathered it's an infinite sum of derivatives of a function (with other terms in the formula I don't yet understand) which approximates the shape of the actual function, so if you can keep computing the derivative of a function you can get as much precision as you want for the function you're going for, right?

Seems like you understood it fully. The concept is extremely simple: you're re-building a function which at a given point has the same derivative, 2nd derivative, 3rd derivative, etc... as another function (in this case the sin function). It thus hugs the shape of the sin function around the point (but not elsewhere!), and it will be polynomial, hence computable.

Now actually computing a Taylor series is not always simple, but the concept is extremely simple I think.

Quote:

In the 3d world

Am I the only one who thought you said "In the 3rd world" for awhile ?

Bregalad wrote:

Am I the only one who thought you said "In the 3rd world" for awhile ?

I did too.

I'd argue Wikipedia isn't particularly good overall ("approximate knowledge of many things", to quote Adventure Time, but hey, it's free). For math, it basically looks to me like a reference guide for those who already know how to "read" math. It's probably incomplete, too, even if i can't judge that.

Bregalad wrote:

Quote:

In the 3d world

Am I the only one who thought you said "In the 3rd world" for awhile ?

No you aren't

rainwarrior wrote:

GradualGames wrote:

Oh, cool! That makes sense (seeing the pattern there...still don't have any idea how somebody figured this out to begin with). Well, I still am confused because...I understand you can approximate the sin function with the derivative(s) of sin at just one point. What I don't get is how does it work that you can approximate a whole function just from the derivatives at one point?

Yes, it works not just for sine, but for any sufficiently smooth function (strictly, one that is analytic around the point; mere continuity isn't enough).

e.g. if you pick a point on a curve f(a), you can approximate a point f(a+b) nearby by taking the slope and following a straight line to that nearby point.

The slope is just the first derivative, though, you can make a better approximation if you take into account whether it is curving up or down vs. that straight line slope... so you can take the "slope" of that derivative slope, or second derivative, to improve the estimate. Instead of following the straight line, you follow the straight line, plus a continual curve up or down to adjust from it...

This process can be repeated until you have the accuracy you want. If the function is a simple polynomial eventually you get a derivative that is just 0, and at that point you're calculating it exactly.

If you want more accuracy around a specific region of the function, you can pick your starting point there. Considering a sine, you might realize that an approximation of sine(0.3) near 0 might be more accurate than sine(50π+0.3), even though the target value should be the same. Each successive layer of approximation will maybe get you one more "curve" in your approximated function. Of course with sine you know it's periodic so you can just modulo 2π to keep everything in the "close" range, but there are lots of non-periodic functions out there you may want to approximate.

Anyhow, that's just the Taylor Series idea, there's lots of other approximation methods. Many approximation methods have undesirable instability that you should be careful to avoid. Again, hard to know where they can apply until you understand them and have experience with them, but I recommend taking it as far as you're interested. It's OK to use stuff you don't understand if it solves your problem adequately, just it's hard to know what you're missing until you do.

Wikipedia is also generally a very bad place to learn anything to do with math. I find most of the articles are written by experts who expect a lot of pre-requisite knowledge. Wikipedia isn't supposed to be a tutorial, either, but it's not even structured in a way that is suitable for learning these concepts anyway. If you're lucky there's good learning material in the "external links" but that's a crap shoot. Much better to learn math from the traditional sources: textbooks, school programs, good teachers, etc.

That bit about the slope really helped me understand how that is working. Since a "sampled" derivative is the slope, if you keep taking additional derivatives, it gets more and more accurate. Makes sense. It's still kind of amazing to me though...I mean looking at the Taylor series for the sin function on Wikipedia, there isn't any trigonometry to be found, yet it works.

This further helps me realize how much of math is breaking things down into smaller steps, much like programming. I mean, I've been doing sinusoidal motion in my games since Nomolos, by accelerating an object in the opposite direction relative to a center point. It was just somehow easier to understand because I was down at the step by step, "add the next acceleration value" level, whereas math on paper has all the steps kind of expressed all at once. (though come to think of it, so is code, but maybe since I was writing it myself with a notation I was familiar with, a programming language, it was easier to think about...)
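Roughly what I mean by the step-by-step version, as a Python sketch rather than my actual 6502 code. The `oscillate` name, the starting offset of 100 and the strength factor `k` are made-up values for illustration.

```python
def oscillate(steps, start=100.0, center=0.0, k=0.01):
    """Move an object back and forth around `center`, one frame per step.

    Each frame the velocity is nudged toward the center by an amount
    proportional to how far away the object is, then the position is
    advanced by the new velocity.
    """
    position = start
    velocity = 0.0
    path = []
    for _ in range(steps):
        # Accelerate in the opposite direction of the offset from the center.
        velocity -= k * (position - center)
        # "Add the next velocity value" to the position.
        position += velocity
        path.append(position)
    return path

path = oscillate(1000)
```

No sin call anywhere, yet the positions in `path` swing smoothly between about +100 and -100, which is the sinusoidal motion I was describing. Updating the velocity before the position is what keeps the swing from slowly growing in this simple form.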

It's still damn impressive it works and somebody figured it out to begin with. Like, I really have trouble calling these things "simple." Maybe they're simple to use and perhaps to understand in some cases, but...actually being the guy who invents something like this...that amazes me.

Proofs are another thing I really never got a good grip on. The furthest I got is a few lights maybe turned on in my discrete math course in college and I did a little bit of induction, but that's it. After that whenever a proof came up in a class I was usually quite lost.

I was also always scared off of complex numbers and the concept of the "imaginary unit." I think the word "imaginary" created a lot of unwelcome cognitive dissonance. It's real math, right? It solves real problems, right? Then there's nothing remotely imaginary about it.

I realized recently that even negative numbers can be thought of as "imaginary", in so far as the "minus" is more of an "action" or a "direction," it is a tool. It's not a "real" thing in so far as, you can't count a negative amount of something in the real world. Negative numbers only exist as an abstraction. 5 less of something. Etc. It's the action of taking away. It's not an actual observable THING, the way "5 things" is something you can see with your eyes. So one could say negative numbers are "imaginary" too.

Naming things is hard. Math is full of really really scary sounding or bad names. That's probably the biggest problem a lot of folks have with it, right there.

I discovered fractals at a very early age, and despite not "understanding" what i was, I thought the resultant pictures cool enough to abstract around not really understanding complex numbers.

Access to FRACTINT and James Gleick's Chaos: The Software helped a lot, too.

Just wait until you get to imaginary space & imaginary time, very real physics concepts

calima wrote:

Just wait until you get to imaginary space & imaginary time, very real physics concepts

Are they real in so far as someone has observed imaginary space and imaginary time in a lab, or just that there are equations that use these concepts? (I know very little about physics besides the basics that are useful for game development)

Imaginary space has been observed (the virtual particles that affect light speed in a vacuum), but imaginary time is just a theory so far.

GradualGames wrote:

I realized recently that even negative numbers can be thought of as "imaginary", in so far as the "minus" is more of an "action" or a "direction," it is a tool. It's not a "real" thing in so far as, you can't count a negative amount of something in the real world. Negative numbers only exist as an abstraction. 5 less of something. Etc. It's the action of taking away. It's not an actual observable THING, the way "5 things" is something you can see with your eyes. So one could say negative numbers are "imaginary" too.

Be careful when using the words "imaginary" and "real" in math. Those refer to complex numbers, which obviously you didn't want to take into consideration here.

There are a lot of applications in math where negative numbers have to be excluded, but even more situations where they are an extremely practical tool to describe things. They are a natural invention following the invention of subtraction, which very much exists.

Bregalad wrote:

GradualGames wrote:

I realized recently that even negative numbers can be thought of as "imaginary", in so far as the "minus" is more of an "action" or a "direction," it is a tool. It's not a "real" thing in so far as, you can't count a negative amount of something in the real world. Negative numbers only exist as an abstraction. 5 less of something. Etc. It's the action of taking away. It's not an actual observable THING, the way "5 things" is something you can see with your eyes. So one could say negative numbers are "imaginary" too.

Be careful when using the words "imaginary" and "real" in math. Those refer to complex numbers, which obviously you didn't want to take into consideration here.

There are a lot of applications in math where negative numbers have to be excluded, but even more situations where they are an extremely practical tool to describe things. They are a natural invention following the invention of subtraction, which very much exists.

The very paragraph above that one stated that I felt the word "imaginary" in complex numbers was a bad name. Then, I simply proceeded to describe how the concept of "negative" does not describe any real, observable thing in the real world---it is an abstraction, which helps us ARRIVE at real observable results in the real world. It's very real on paper, yes, but it is "imaginary" in so far as it is an abstraction we are using to solve problems. The "imaginary unit" in complex numbers, is another abstraction---the ONLY point I am making is that traditional names in mathematics sometimes scare people off, but not for any good reason---they're just bad names. I have less of a problem with the name "complex number" than I do with the name "imaginary." Because it is clearly not imaginary, it solves real problems. I have no ideas for a better name for it. But math is full of things with scary sounding names, which, when you break it down, turn out to not be all that difficult to understand after all---that's my only point. It's a dumb reason to be scared off of it, but lots of people are.

In other words, I'm very aware that "imaginary" has a long-standing and traditional use in mathematics. I'm using "imaginary" in the english sense of the word, something that exists in your mind that doesn't exist in the real world, to point out that math is full of abstractions that are not representations of real physical objects but of operations to arrive at real results that may represent real objects. Does that make sense? I'm just pointing out that "imaginary" is simply a NAME for an abstraction, much like we arbitrarily name routines and functions. It's a bad name, because it isn't imaginary at all, it solves real problems.

Let me put it another way:

The simplest abstraction, positive natural numbers, are the easiest to "see" in the real world. e.g. COUNT things. They are the most "real" of anything in mathematics. (note: using a crude definition of real, meaning, your average person trying to understand what aspects of math actually exist not on paper at all. I'm not saying math isn't real or true, of course it is)

When you add negative numbers, now numbers can have a "-" next to them. Is there anything in the real world you can see and touch that has this property? No. Minus is an operation. An abstraction, which is real only on paper and in our minds. When applied to a real problem, we might wind up with "5 apples minus 3 apples is 2 apples," and those 2 apples are real; we can see them.

The point I'm really trying to make (and probably failing to explain what I'm saying) is that an operation such as - is no more real than the square root of -1. They are abstractions which allow us to solve increasingly difficult problems. They aren't something we observed somewhere in the real world. That's why I have a problem with calling the square root of -1 imaginary. In terms of the usual definitions and rules for multiplying negative numbers, the square root of -1 doesn't make sense (thus the term 'imaginary'), but -1 was an abstraction to begin with, e.g. "imagined." Then, mathematicians discovered you could solve more interesting problems by defining the square root of -1 as part of a term to use in a complex number. It's an abstraction. All abstractions exist in our minds and thus could be SAID to be "imaginary," re-iterating that I'm well aware of the traditional use of the word and do not mean to conflate negative numbers with complex numbers.

Now that I've established that---nobody wants to think of something in mathematics as being "imaginary." It's just a bad name. Much like if I named update_column "pink_elephant." I have no idea what it means and it doesn't adequately describe what it does. And worse, the name "imaginary" is lying to me and makes me think something impossible is magically working anyway. Which obviously isn't true.

Another example. Division by zero. 1 / 0. What is that? We say it's NaN because it isn't defined, unless you are talking about *approaching* infinity, then it makes sense. They're all exceptions and edge cases and rules and names, just like in programming. Thus you could call any of these "odd" things "imaginary" if you wanted to. I just think it's a dumb word to use because these rules, definitions and abstractions all hold universally and all can be used to describe real world phenomena accurately in many cases, though I understand why it was used to begin with. When it was first discovered it was probably surprising that it worked, and caused the same cognitive dissonance that is bothering me and which probably bothers a lot of people when they learn math more advanced than high school math.

Another funny thing about abstractions in math is that when you boil them all down, you have digits which originate in the abstraction of counting discrete objects. Yet we've found ways to describe continuous phenomena with the "programming" of arranging our rules and abstractions to continue to work with what ultimately are symbols for counting discrete objects. It's really quite amazing when you think about it. We went from counting things as cavemen, to creating this vast network of symbols and abstractions to describe the real world IN TERMS, ultimately, of the symbols we use to count discrete objects. It's quite impressive! In summary: Math is programming, but unfortunately was invented long before software engineers came along and said: "Let's give things good names rather than Greek letters and brainfucky names like 'imaginary'!"

Even "brainfuck" is arguably a less brainfucky name than its original name, P Prime Prime.

My first guess about the use of Greek letters and the like in mathematical notation rather than whole-word names is to let more of an expression's numerator or denominator fit within the width of a page.

tepples wrote:

Even "brainfuck" is arguably a less brainfucky name than its original name, P Prime Prime.

My first guess about the use of Greek letters and the like in mathematical notation rather than whole-word names is to let more of an expression's numerator or denominator fit within the width of a page.

I think the thing is---so many concepts in math boil down to something that's not *that* hard to understand ultimately. But there's something odd in our culture which makes people just sort of throw up their arms and go: "Might as well be Chinese to me!" Likely due to the unfamiliarity of the symbols used. Those who are able to get a little bit further might still throw up their arms later when they encounter something which appears to be the definition of an impossibility: i, the square root of -1. But I'm trying to say that if we taught math more carefully and properly, we could mitigate the cognitive dissonance by explaining exactly what math and abstractions actually are. Instead we just ask students to take the definitions and abstractions as they were originally formulated a hundred, two hundred or more years ago, so they seem archaic, and no effort is put into explaining them in simpler terms.

It seems as though some folks are blessed with the ability to intuit abstractions right away. They just "get" that they are abstractions without ever consciously verbalizing this fact. Then there are folks for whom for whatever reason abstract thinking is a bit more of a challenge---it was for me. I think I'm just now reaching some kind of threshold in my mental development which is making it easier to grasp and accept abstractions as they are. My point is I feel if mathematics were taught more carefully, more people might be able to develop that ability more quickly rather than it just being a "you're born with it or you're not" kind of deal.

Perhaps L. Ron Hubbard was right about people not being able to learn without visual aids, which he called "mass". Hubbard also realized that instruction can't be productive until definitions of words are agreed upon, which later became Layne's law of debate, and that a "steep" learning curve would be most easily understood as having effort on the vertical axis and achievement on the horizontal.

Not that that excuses counterproductive application of his Study Tech though.

GradualGames wrote:

Division by zero. 1 / 0. What is that? We say its NaN because it isn't defined, unless you are talking about *approaching* infinity, then it makes sense.

Brief moment of offtopic pedantry: 1/0 is +∞. (lim x→0 1/x).

0/0 is the only one that's NaN because what it converges to depends on which limit you take.

The limit of 1/x differs based on whether x approaches 0 from the left or right side. From the left side, 1/x decreases without bound (-∞); from the right, it increases without bound (+∞).
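The one-sided behavior described above is easy to check numerically. The following is only a rough sketch in Python; the helper names `one_sided`, `reciprocal` and `sinc` are made up for illustration and are not from any library.

```python
import math

def one_sided(f, h):
    """Evaluate f just left and just right of 0, at -h and +h."""
    return f(-h), f(h)

def reciprocal(x):
    return 1.0 / x

def sinc(x):
    # Has the form 0/0 at x == 0, so it is only evaluated near 0 here.
    return math.sin(x) / x

for k in (1, 3, 6):
    h = 10.0 ** -k
    left, right = one_sided(reciprocal, h)
    print(f"h={h:g}: 1/x from the left = {left:g}, from the right = {right:g}")
    print(f"h={h:g}: sin(x)/x on both sides = {one_sided(sinc, h)}")

# Note: plain Python floats raise ZeroDivisionError for 1.0 / 0.0
# instead of returning an infinity or NaN.
```

As h shrinks, 1/x runs off in opposite directions on the two sides, while sin(x)/x settles toward 1 from both sides.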

GradualGames wrote:

Another example. Division by zero. 1 / 0. What is that? We say its NaN because it isn't defined, unless you are talking about *approaching* infinity, then it makes sense.

Sin(x)/x is not even close to approaching infinity when x is near zero (hint, it approaches 1), but is still NAN for x=0.

Quote:

Then, I simply proceeded to describe how the concept of "negative" does not describe any real, observable thing in the real world---it is an abstraction, which helps us ARRIVE at real observable results in the real world.

I disagree. If you're counting potatoes, then you're right. But when counting meters above sea level for example, negative numbers are a very real thing that happens in real life. A wider situation is locating a point on an infinite straight line; you will have to resort to negative values because the line does not end or start anywhere, so you cannot set the zero adequately to use only positive numbers, no matter how you try.

Temperature is often used as an example but it's a horrible example because actually 0K is a true zero and there's no reason to have negative temperatures at all, except we didn't know about absolute zero back when the concept of "temperature" was invented.

It's difficult to communicate what I'm really trying to say, here. What I'm saying is that the concept of "negative" is an operation that we essentially define to mean "reverse direction" on the number line, and it helps solve problems algebraically. We can't count a negative number of something unless we're talking about taking something away, which is an *action* not a thing in the real world. It's abstract to begin with is what I'm saying. It's real, of course, but not real the way a physical object is real. This is the only point I'm trying to make.

Defining i as the square root of -1 seems like we're defining a falsehood. But if you think about it like I'm trying to suggest, it's simply another abstraction which enables yet more algebraic solutions to work. The normal way we apply "negative" to multiplying negative numbers makes the square root of -1 seem like a falsehood, but since "negative" was a rule or action to begin with, what we're really saying is: "IF -1 had a square root and followed this rule, let's call it i." And the fascinating thing is that it lets us solve more problems.

For some reason it's a lot easier to understand and internalize "negative" than to accept an abstraction where we must suppose a falsehood is true (i is the square root of -1). Get where I'm going with this? They're both very "real" in that they solve real problems, but one is a hell of a lot harder to grasp if you aren't blessed with an innate ability to grasp definitions and abstractions. It seems to me with enough effort math pedagogy could be improved so that concepts such as complex numbers would not be so intimidating.

Bregalad wrote:

But when counting meters above sea level for example, negative numbers are a very real thing that happens in real life.

What? How?

Edit: To answer the thread title, bad.

The distance between sea level and the sea floor still has positive magnitude; it's just in the downward direction. Negative real numbers are an abstraction including whether the direction is forward or backward. Complex numbers are a generalization of this direction to a plane.

tepples wrote:

The distance between sea level and the sea floor still has positive magnitude; it's just in the downward direction. Negative real numbers are an abstraction including whether the direction is forward or backward. Complex numbers are a generalization of this direction to a plane.

That's what I thought. Who the hell says "-5 meters above sea level"?

tepples wrote:

The distance between sea level and the sea floor still has positive magnitude; it's just in the downward direction. Negative real numbers are an abstraction including whether the direction is forward or backward. Complex numbers are a generalization of this direction to a plane.

I haven't studied complex numbers in depth yet, but at a high level, what makes complex numbers different from just having a pair of X and Y coordinates, then?

Nothing, it's a regular 2d vector.

GradualGames wrote:

I haven't studied complex numbers in depth yet, but at a high level, what makes complex numbers different from just having a pair of X and Y coordinates, then?

Complex numbers can be used for various 2D geometry problems, though I think the notation is a bit confusing for someone who is really trying to do 2D geometry and would rather just work with a 2D vector.

The original reason for i is simply to solve the quadratic equation where there are no "real" answers. It is not the square root of -1, but rather it is the "imaginary" number that when squared will equal -1.

i² = -1

When trying to solve a quadratic, if that square root term b² - 4ac is positive you get 2 solutions, if it is 0 you get 1 solution, if it is negative you get 0 solutions. However, you can think of the 1 solution case as two solutions that just happen to be equal to each other. If you use complex numbers, even the negative/0 solutions case becomes again two solutions, just requiring an "imaginary" component. So with complex numbers this thing that had 3 different types of outcome now only has 1. This kind of uniformity is part of why complex numbers can be very useful.
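To make the "three outcomes become one" point concrete, here is a small sketch using Python's standard `cmath` module. The function name `solve_quadratic` is just an illustrative choice, and it assumes a is nonzero.

```python
import cmath

def solve_quadratic(a, b, c):
    """Return both roots of a*x^2 + b*x + c = 0 (a != 0) as complex numbers."""
    disc = b * b - 4 * a * c
    root = cmath.sqrt(disc)  # defined even when disc is negative
    return (-b + root) / (2 * a), (-b - root) / (2 * a)

print(solve_quadratic(1, -3, 2))  # positive discriminant: two distinct real roots
print(solve_quadratic(1, -2, 1))  # zero discriminant: the same root twice
print(solve_quadratic(1, 0, 1))   # negative discriminant: a pair with imaginary parts
```

The same code path handles every case and always returns two values, which is the uniformity being described.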

So... okay maybe it doesn't immediately sound useful for a quadratic, but as soon as you want to solve cubic (x³) or quartic (x⁴) equations, you'll discover that the "equivalent" to the quadratic formula for them is maddeningly complicated. Complex analysis becomes a light leading out of the tunnel for this.

There are all sorts of useful things that come out of complex numbers, particularly to do with exponents, and periodic functions like sine and cosine fit in here as well in ways that might surprise you. It becomes a very good way of looking at a lot of problems that aren't obvious at all when you first hear about i.

calima wrote:

Nothing, it's a regular 2d vector.

The thing that makes a complex number different than a 2D vector is that you can multiply two complex numbers (and all the consequences that carry on from that). 2D vectors can be multiplied (scaled) by a scalar (single value), but not by another vector. Everything that follows from this is what makes complex numbers useful for things that 2D vectors alone are not.
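One consequence of that multiplication that is handy in 2D games: multiplying by a complex number of length 1 rotates a point about the origin. A minimal sketch, assuming points are stored as Python `complex` values (the `rotate` helper is hypothetical, not a library function):

```python
import cmath
import math

def rotate(point, degrees):
    """Rotate a 2D point, stored as a complex number, about the origin."""
    # cmath.rect(1.0, angle) is the unit-length complex number at that angle.
    return point * cmath.rect(1.0, math.radians(degrees))

p = complex(3, 4)        # same data as the 2D vector (3, 4)
q = rotate(p, 90)
print(q)                 # approximately (-4+3j), up to rounding
print(abs(p), abs(q))    # the length is preserved by the rotation
print(p * 1j)            # multiplying by i is an exact quarter turn
```

With plain 2D vectors you would need a separate rotation matrix or explicit sin/cos terms to get the same effect.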


Aren't complex numbers used in 3D graphics a lot too, via "quaternions?" That's probably the main application I'll wind up reading up about...it's the only application I can think about that would produce interesting results with things I like (game programming). I just have no idea what those results are. Haha

...You get why the concept is intimidating to those who haven't studied math very hard, right? Once one has internalized that no negative number can be a square, the definition makes you think that complex numbers are based on a falsehood. It produces a similar cognitive dissonance to that one proof where you can say the sum of all natural numbers is -1/12 (which I really don't understand to this day. I can see it shows up when you graph it but I really don't get it yet. I'd have to spend some quality time with it...) lol.

GradualGames wrote:

Aren't complex numbers used in 3D graphics a lot too, via "quaternions?" That's probably the main application I'll wind up reading up about...it's the only application I can think about that would produce interesting results with things I like (game programming). I just have no idea what those results are. Haha

I would say no about quaternions; the primary use for them in 3D graphics is simply as a way to express a rotation and its axis in a compact/efficient way. You don't really need to understand the "complex" consequences of that to work with them for that purpose, which is merely a geometric purpose. (Just as I said above, how I think using complex numbers for 2D geometry problems is probably unnecessarily confusing.)

So... while the word quaternion will come up almost certainly, its actual use is really to just represent a 3D geometric rotation and it need not be understood any more than that to work with quaternions in 3D graphics. (There are 3D graphics applications of the true complex quaternion, but they're obscure.)
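For a sense of what "just represent a rotation" looks like in practice, here is a bare-bones sketch in Python. It assumes a (w, x, y, z) component order and a unit-length axis; all function names are illustrative and not taken from any particular engine or library.

```python
import math

def quat_from_axis_angle(axis, degrees):
    """Unit quaternion (w, x, y, z) for a rotation about a unit-length axis."""
    half = math.radians(degrees) / 2.0
    s = math.sin(half)
    return (math.cos(half), axis[0] * s, axis[1] * s, axis[2] * s)

def quat_mul(a, b):
    """Hamilton product of two quaternions."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def rotate_vector(q, v):
    """Rotate 3D vector v by unit quaternion q using q * v * conjugate(q)."""
    w, x, y, z = q
    conj = (w, -x, -y, -z)
    _, rx, ry, rz = quat_mul(quat_mul(q, (0.0, *v)), conj)
    return (rx, ry, rz)

q = quat_from_axis_angle((0.0, 0.0, 1.0), 90)
print(rotate_vector(q, (1.0, 0.0, 0.0)))  # close to (0, 1, 0), up to rounding
```

Used this way the quaternion is a black box holding an axis and an angle, which matches the point that you can work with them without the deeper theory.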

However, if you want to write a raytracer, for example, you'll probably very quickly run into cases that require complex numbers to solve effectively. An intersection of a ray and a sphere is a quadratic equation, but a ray and a torus is a quartic. I mentioned how they have some hideous "formula" solutions for such a thing but in practice they do not tend to be numerically stable (the floating point errors compound wildly). You're going to have a very hard time writing a practical solver for this kind of thing without dealing in complex numbers. (Just complex numbers here, not quaternions.) ...though you might manage with just some calculus and an iterative solver, if you can trade the CPU / accuracy for it.

GradualGames wrote:

...You get why the concept is intimidating to those who haven't studied math very hard, right?

I do, but I can't address your complaint that you don't like the terminology. I didn't make up the words, I can only try to help you understand what they mean.

GradualGames wrote:

you can say the sum of all natural numbers is -1/12

That one is an interesting paradox, and it definitely is something that follows from a particular sequence of logical conclusions, but probably the most interesting thing about this is finding out which premise that you've accepted led to this thing that should not be true.

There are possible paradoxes in all formal systems of logic, unfortunately, but that doesn't necessarily make the less paradoxical parts of it unuseful, and you should guard yourself against sophistry that would rob you of that utility.

rainwarrior wrote:

There are possible paradoxes in all formal systems of logic

To be pedantic (that's why we're all here, right?) there are possible paradoxes in all sufficiently powerful formal systems of logic. Predicate logic and first-order logic are famously sound and complete. Second-order logic is incomplete, but certain rather esoteric fragments of it aren't.

Lots of teaching seems to be "We told you this thing was impossible because it would have been a distraction when we were working on basics. Now we need you to unlearn a little so that we can fill in that gap". Not just with math but lots of engineering fields too.

Here's a brief article about one way that complex numbers naturally arose in the 16th century from trying to solve cubic equations:

https://www2.clarku.edu/~djoyce/complex/cubic.html

One mathematician came up with a generic formula solution for solving cubic equations (like the quadratic formula does for quadratics).

From quadratic problems they were already used to "impossible" solutions where there's a square root of a negative number, but it was noticed that in some cases of the cubic formulae you could get a pair of such square roots that cancel each other out, leaving a valid solution!
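As a rough illustration of that cancellation, here is a sketch of Cardano's formula for a cubic written as x³ = px + q, run on the classic textbook case x³ = 15x + 4. That specific equation is my choice for illustration, and `cardano_root` is a hypothetical helper that assumes the first cube root is nonzero.

```python
import cmath

def cardano_root(p, q):
    """One root of x^3 = p*x + q via Cardano's formula, using complex arithmetic."""
    inner = cmath.sqrt((q / 2) ** 2 - (p / 3) ** 3)  # square root of a negative here
    u = (q / 2 + inner) ** (1 / 3)                   # principal complex cube root
    v = (p / 3) / u                                  # partner cube root, so u * v == p / 3
    return u + v

x = cardano_root(15, 4)
print(x)                    # imaginary parts cancel up to rounding; real part near 4
print(4 ** 3, 15 * 4 + 4)   # both sides of the equation at x = 4
```

The intermediate values are 2 + 11i and its cube root 2 + i, yet the final sum is the plain real number 4, which is the surprise the early algebraists ran into.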

Down some lines of inquiry, the idea of the "imaginary" number i² = -1 is just kind of staring you in the face. This wasn't some arbitrary hack just tacked on there on a whim, it was a thing that kept coming up in what they were doing. It seems a bit bizarre at first, but it very "naturally" comes out of the study of roots. This is also probably hard to see if you're taught about i before you've ever seen a place where it springs out of.

Actually, maybe that's a good way to show one of their uses... you know that a square root has 2 solutions, negative and positive. Why does a cube root have only 1 solution and not 3? Why does a 4th root have 2 solutions and not 4? Shouldn't a 5th root have 5 solutions?

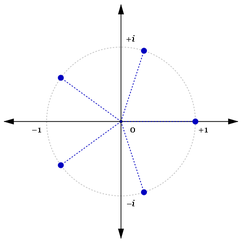

Well, with complex numbers it does! On the complex plane,

these roots are evenly spaced along a circle:

Attachment:

fifth_root_of_1.png [ 11.38 KiB | Viewed 1239 times ]

fifth_root_of_1.png [ 11.38 KiB | Viewed 1239 times ]

Anyhow, I'm not really trying to explain complex exponents here, I'm just trying to show how having i as a tool might begin to shed light on these things in a useful way.
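The "evenly spaced along a circle" picture can be reproduced in a few lines. This is only a sketch using Python's standard `cmath`; the function name `nth_roots_of_unity` is made up for the example.

```python
import cmath
import math

def nth_roots_of_unity(n):
    """All n complex solutions of z**n == 1, spaced 360/n degrees apart on the unit circle."""
    return [cmath.exp(2j * math.pi * k / n) for k in range(n)]

for z in nth_roots_of_unity(5):
    angle = math.degrees(cmath.phase(z)) % 360
    error = abs(z ** 5 - 1)  # how far z^5 is from 1, due to rounding only
    print(f"{z.real:+.4f} {z.imag:+.4f}i  angle={angle:6.1f}  error={error:.1e}")
```

Only one of the five roots lands on the real axis, which is why a 5th root looks like it has a single solution when you restrict yourself to real numbers.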

A deep feeling of depression comes over me when I think about all the stuff I don't know. And with all the new stuff that I learn, I end up forgetting an equal amount of the old stuff.

I barely remember calculus, for example. I couldn't solve an integral if you asked me.

rainwarrior wrote:

Here's a brief article about one way that complex numbers naturally arose in the 16th century from trying to solve cubic equations:

https://www2.clarku.edu/~djoyce/complex/cubic.html

One mathematician came up with a generic formula solution for solving cubic equations (like the quadratic formula does for quadratics).

From quadratic problems they were already used to "impossible" solutions where there's a square root of a negative number, but it was noticed that in some cases of the cubic formulae you could get a pair of such square roots that cancel each other out, leaving a valid solution!

Down some lines of inquiry, the idea of the "imaginary" number i² = -1 is just kind of staring you in the face. This wasn't some arbitrary hack just tacked on there on a whim, it was a thing that kept coming up in what they were doing. It seems a bit bizarre at first, but it very "naturally" comes out of the study of roots. This is also probably hard to see if you're taught about i before you've ever seen a place where it springs out of.

Actually, maybe that's a good way to show one of their uses... you know that a square root has 2 solutions, negative and positive. Why does a cube root have only 1 solution and not 3? Why does a 4th root have 2 solutions and not 4? Shouldn't a 5th root have 5 solutions?

Well, with complex numbers it does! On the complex plane, these roots are evenly spaced along a circle:

Attachment: fifth_root_of_1.png

Anyhow, I'm not really trying to explain complex exponents here, I'm just trying to show how having i as a tool might begin to shed light on these things in a useful way.

Cool, thanks for that...makes me even more interested in the subject.

If by arbitrary hack you were referring to my complaint about the name "imaginary," I didn't mean to say I thought it was an arbitrary invention, just simply to say that it is unfortunate to call something imaginary and define something which appears to be a falsehood without context---it's hard to swallow for many students I think. But information like what you just shared, I wish it could be more seamlessly integrated into math courses. Instead it's like an assembly line. Learn your formulas! Got 'em? Do a test! Failed? Oh well, you have a good job 11 years later anyway

Quote:

So... okay maybe it doesn't immediately sound useful for a quadratic, but as soon as you want to solve cubic (x³) or quartic (x⁴) equations, you'll discover that the "equivalent" to the quadratic formula for them is maddeningly complicated. Complex analysis becomes a light leading out of the tunnel for this.

Oh, I remember having studied this and having to use the cubic formula; it was insanely complicated. Basically, if I remember well, solving arbitrary equations of 3rd and 4th order is possible but insanely complicated, and 5th order and up is basically impossible without resorting to numerical approximations. Or you can be smart and factor the equation by "guessing" solutions, but that's not always possible.

Quote:

That's what I thought. Who the hell says "-5 meters above sea level"?

Well, the sign simply shows the direction. The same could be said about latitude and longitude positioning: the sign indicates the direction, whether it's north/south or east/west. Basically it carries extra information in the number itself. Complex numbers are the same concept: they carry information for a number and an angle at the same time.

Quote:

It is not the square root of -1, but rather it is the "imaginary" number that when squared will equal -1. i² = -1

Actually every (positive real) number has two "square roots" (i.e. two reals that, multiplied by themselves, give this result) but the "square root" function only returns the positive one. For example both 3 and -3 will give 9 when multiplied with themselves, but only 3 is the square root of 9, not -3.

Similarly, both i and -i give -1 when multiplied by themselves. Since neither is "positive" nor "negative" (they are something else entirely!) we can't decide which one is the "square root" of -1. We could decide that we keep the one in the upper half of the plane (angle smaller than 180°); this is simple when taking the square root of negative numbers (the two "roots" will always be conjugates), but when taking the square root of an already complex number there is no simple solution (the two "roots" can be anything if I'm not mistaken).
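For what it's worth, libraries do have to pick one. As a sketch, Python's `cmath.sqrt` returns the so-called principal root (the one with non-negative real part), and for any nonzero complex number the other root is simply its negation. The helper name `both_square_roots` is invented for the example.

```python
import cmath

def both_square_roots(z):
    """Return the principal square root of z and its negation; both square to z."""
    r = cmath.sqrt(z)  # principal branch: real part >= 0
    return r, -r

for z in (9, -1, -4, 3 + 4j):
    r1, r2 = both_square_roots(z)
    # The last two columns show how far each root's square is from z.
    print(z, r1, r2, abs(r1 * r1 - z), abs(r2 * r2 - z))
```

So the choice of "the" square root is a convention, much like returning 3 rather than -3 for the square root of 9.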

DoNotWant wrote:

Who the hell says "-5 meters above sea level"?

HEIGHT REACHED.....-240m.

I think it's kind of weird though. In that game, the levels during gameplay are called DEPTH xxx m when you're underground and will be called HEIGHT xxx m when you're above ground in the full game. I think it's kind of weird that it switches from DEPTH x during gameplay to HEIGHT -x on the game over screen, but I guess it makes it easier to compare progress with other people at a glance. (The instructions encourage you to take a picture of this progress screen to share with others.)