Originally posted by: PatrickM.

While they may be being selective, I don't think it's totally arbitrary. It can be argued that scanlines are part of the intended look and an inevitable result of upscaling 240p on TVs that do not double the lines (boo!). Color bleed and static are indeed the result of flaws in the CRT, while scanlines are the result of how the technology works- it's not the product of a design or manufacturing flaw. Weren't most designers working with RGB monitors, which show pretty distinct scanlines? I don't think all old PC games need scanlines because a lot of them were designed to be run at 640x480, right? I only think they're needed for 240p content, and would actually be undesirable for anything else. I think big blocky pixels are also just inherently less aesthetic than the smoothed edges created by the effect of proper scanlines. I think there's some evidence from psychology that circles are more attractive than squares or something, so this might not be completely subjective. XBR and other stuff is too heavy handed and results in too much lost detail.

Well, keep in mind there is no such thing as 240p. Your NES and SNES are *NOT* putting out a 240p 60 fps signal; it just doesn't exist. They're putting out a 480i 30 fps signal where they're not marking the fields correctly, so every field gets drawn with the same offset. The result is taking a 240-line picture, stretching it out to 480i, and leaving every other line blank. It's these blank lines that are called scanlines. I'm not 100% sure where the term 240p came from, and while it's not exactly wrong, it's misleading because it's not a standard resolution and doesn't work like 480p, 720p, or any other p-type video mode.
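To make the "stretch it out and leave every other line blank" idea concrete, here's a rough sketch in Python. The function name `add_scanlines` and the use of 0 for a blank (black) line are my own assumptions for illustration; this models the picture as a plain list of rows, not an actual video signal.

```python
def add_scanlines(picture, blank=0):
    """Put each source row on an even output line and leave the odd lines blank.

    `picture` is a list of rows (lists of pixel values). The blank value of 0
    standing in for an undrawn line is an assumption for this sketch.
    """
    out = []
    for row in picture:
        out.append(list(row))            # the line the field actually draws
        out.append([blank] * len(row))   # the line that never gets drawn
    return out


# A 240-line picture ends up occupying a 480-line raster.
picture = [[255] * 4 for _ in range(240)]
raster = add_scanlines(picture)
print(len(raster))   # number of lines in the stretched raster
print(raster[:4])    # drawn line, blank line, drawn line, blank line
```

Half of the output rows carry picture data and the other half stay dark, which is the scanline look.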

Another misconception is that scanlines don't show up on LCDs due to a limitation of LCD tech, but that's not really the case. They could draw every other line just as easily as a CRT, but on the early ones, interlaced video (like TV) looked like crap, so they deinterlaced the video. All is fine and dandy except for video games: since the games were making non-standard use of 480i, the deinterlacing caused them to be displayed as 30 fps 480p, but with only 240 lines of actual data since it's repeating half of it, hence the doubled lines. And this mandatory deinterlacing adds lag. You MUST have two fields to do it, so right there, no matter how fast the CPU, you have 16.7ish ms of lag waiting for the second field to show. And since processors were so slow back when LCDs came out, and deinterlacing can be tricky depending on how you do it, you could miss 4-5 frames. And I mean FRAMES, not FIELDS. An NES puts out 30 FRAMES, and 60 FIELDS, but it displays the fields so they look like frames. So 5 frames is 167ish ms of lag. And some used temporal smoothing over a set amount of time, which requires grabbing a few more fields and a lot more processing. That's one reason why I think people bitching about lag is so silly. Chances are they don't have 1 frame of lag (really one field of lag); they're dealing with a ton of lag on many older TVs.
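The lag numbers above are just arithmetic on the field rate. Here's a quick sketch, assuming a nominal 60 fields per second (real NTSC is closer to 59.94) and two fields per frame. The names `field_ms` and `lag_ms` are made up for this example.

```python
FIELD_RATE_HZ = 60  # nominal value, assumed for this sketch


def field_ms(field_rate_hz=FIELD_RATE_HZ):
    """Duration of one field in milliseconds."""
    return 1000.0 / field_rate_hz


def lag_ms(frames=0, fields=0, field_rate_hz=FIELD_RATE_HZ):
    """Lag in milliseconds for a delay given in frames and/or fields.

    One frame is counted as two fields.
    """
    total_fields = frames * 2 + fields
    return total_fields * field_ms(field_rate_hz)


print(round(lag_ms(fields=1), 1))  # waiting for one extra field
print(round(lag_ms(frames=5)))     # missing five full frames
```

One field works out to roughly 16.7 ms and five frames (ten fields) to roughly 167 ms, matching the figures in the post.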

A standard TV shows 30 frames per second. A field is half a frame. You have the even field that draws, say, scanlines 0, 2, 4, 6, etc. Then you have the odd field that draws 1, 3, 5, 7, etc. Together they're a single frame. What the NES is doing is outputting an even field, lines 0, 2, 4, 6, 8, followed by another even field, so 0, 2, 4, 6, 8, etc. So it only ever draws half the lines in the picture.
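Here's the even/odd field numbering as a small sketch. It uses the same 0-based line numbering as the post; `field_lines` and `lines_drawn` are hypothetical helper names, and the 8-line raster is just to keep the output short.

```python
def field_lines(total_lines, parity):
    """Line numbers drawn by one field: parity 0 is even, parity 1 is odd."""
    return list(range(parity, total_lines, 2))


def lines_drawn(total_lines, parities):
    """All distinct lines that get drawn over a sequence of fields."""
    drawn = set()
    for parity in parities:
        drawn.update(field_lines(total_lines, parity))
    return sorted(drawn)


# Normal interlaced TV: even field, then odd field, covers every line.
print(lines_drawn(8, [0, 1]))

# Console behavior as described above: even field, then even field again,
# so the odd lines never get drawn.
print(lines_drawn(8, [0, 0]))
```

With alternating parities every line is covered; with the same parity repeated, only half of them ever are.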

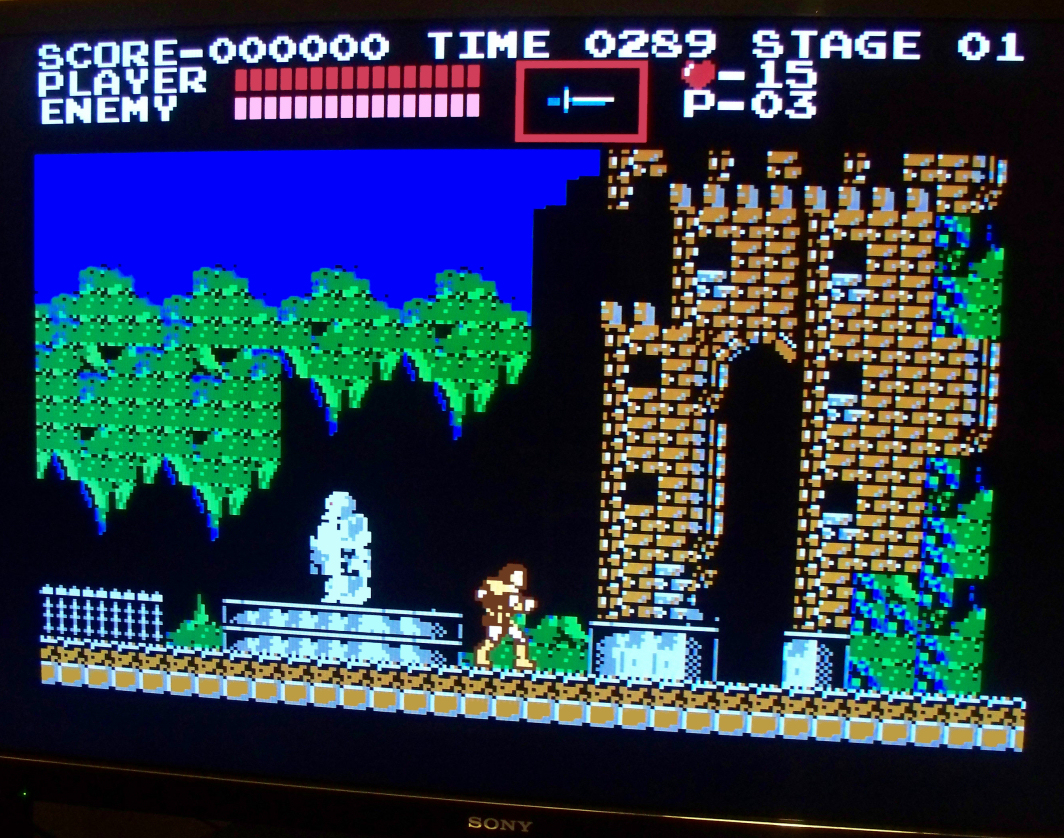

RGB monitors generally do NOT show scanlines; they accept progressive video, which the consoles did not output. If someone worked on a computer with an RGB monitor using a raster paint program, they would not see scanlines in their artwork, unless they were using a lower-end Amiga. They couldn't use Apples for this work because of the non-standard way Apples handled colors, so you had IBM, Amiga, and Atari to use as dev tools. If they were using home-grown tools it's easy to simulate what it would look like with scanlines, but remember, many, many games took advantage of CRT limitations for special effects. Ever play on an emulator and see a cloud that looked like a checkerboard? On a TV from the 90s, it would look pretty smooth and transparent. Sonic's a common example, but not even close to the first to use it:

Look at the big brown block. The top is how it looks in an emulator: a checkerboard. Below that, I suspect someone just applied a blur filter, since there are no scanlines or shadow mask, but you get the idea. Do you remember seeing checkerboards? I doubt it, because by that point they were banking on your TV blurring the crap out of it.
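If you want to see why the checkerboard disappears, here's a toy version of the effect. Big caveat: a real TV's blur is not a simple box filter, so the 2-pixel horizontal average below is purely an assumption to illustrate the idea, and `blur_row` is a made-up name.

```python
def blur_row(row, width=2):
    """Average each run of `width` neighboring pixels along one row.

    Only full windows are used, so the result is shorter than the input
    by width - 1 pixels.
    """
    return [
        sum(row[i:i + width]) / width
        for i in range(len(row) - width + 1)
    ]


# One row of a checkerboard dither: alternating dark and bright pixels.
dithered = [0, 255] * 4

blurred = blur_row(dithered, width=2)
print(dithered)  # harsh alternating pattern, as seen in an emulator
print(blurred)   # every value is the same mid-tone
```

Every window covers one dark and one bright pixel, so the alternating pattern collapses to a flat mid-tone, which is what reads as a smooth, semi-transparent area on screen.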

So anything you do to improve graphics has a very, very real chance of HURTING other graphics. There is no way to set it up and say "This is perfect for all games". You need to know the game, the tricks used (if any), and your monitor. Me, I HATE seeing the "overscan" areas; those are not meant to be there and should not be shown. I'd rather have too much cut off than a line of junk pixels.