This works fast enough. This makes me wonder how fast the Genesis would be if it had the same PPU as the SNES, and needed to do all this shit in order to have good animation, and vice-versa.

Agreed. It doesn't really seem that the SNES's CPU is slow, it actually seems like it has to do more work than the Genesis's because of the PPUs' design. The planar graphics format comes to mind...

psycopathicteen wrote:

I would've used 80 equally sized 32x32 slots.

Wow.

Well, you got to look on the bright side. You have more than 64 colors.

(I can barely get by with 256.)

Stef said I only got my Gunstar Heroes demo running on the SNES because I "simplified" everything to run good on the system, when my demo was actually running a much more complicated dynamic animation engine than the original game, and still had plenty of CPU time left.

I decided to convert part of the code to 68000 myself to compare the cycle counts.

Code:

65816 code:

-;

inx //2

lda {vram_slot_table},x //4 6

cmp #$0f //2 8

beq - //3 11

-;

lsr //2

bcc + //3 5

iny //2 7

bra - //3 10

+;

68000 code:

-;

move.b (a0)+,d0 //8

cmp.b d0,d1 //4 12

beq - //10 22

-;

lsr.w #1,d0 //8

bcc + //10 18

addq.b #1,d2 //4 22

bra - //10 32

+;

This is one of the speed crucial parts of the code. It looks for a 32x32 slot that is not completely full, then it looks at which 16x16 slot is still open. The first loop is 11 vs 22 cycles, the second loop is 10 vs 32 cycles. Look at how "fast" the 68000 is.
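As a rough illustration of what the two loops do, here is a Python sketch (not the actual 65816 routine). It assumes the table holds one 4-bit occupancy mask per 32x32 slot, with one bit per 16x16 quarter; the name `find_free_16x16` is made up for this example.

```python
FULL = 0x0F  # all four 16x16 quarters of a 32x32 slot are in use

def find_free_16x16(vram_slot_table):
    """Return (slot_index, quarter_index) of the first free 16x16 cell, or None."""
    for slot, mask in enumerate(vram_slot_table):
        if mask == FULL:
            continue  # first loop: skip 32x32 slots that are completely full
        quarter = 0
        while mask & 1:  # second loop: shift right until a clear bit falls out
            mask >>= 1
            quarter += 1
        return slot, quarter
    return None

table = [0x0F, 0x0F, 0x0B, 0x00]
print(find_free_16x16(table))  # (2, 2)
```

The inner `while` corresponds to the `lsr` / `bcc` / `iny` loop: each set low bit costs one more pass.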

psycopathicteen wrote:

Stef said I only got my Gunstar Heroes demo running on the SNES because I "simplified" everything to run good on the system, when my demo was actually running a much more complicated dynamic animation engine than the original game, and still had plenty of CPU time left.

Why does it even matter how "simple" it is if it looks and plays exactly the same and doesn't have any slowdown? That'd be like bragging that I could write "inc" 30 times instead of "clc adc #30" and the game would still run without slowdown. It isn't any more impressive from a gameplay perspective and it just looks stupid.

psycopathicteen wrote:

This is one of the speed crucial parts of the code. It looks for a 32x32 slot that is not completely full, then it looks at which 16x16 slot is still open. The first loop is 11 vs 22 cycles, the second loop is 10 vs 32 cycles. Look at how "fast" the 68000 is.

Wow.

It's funny, because even though the SNES is clocked about 1/2 as fast as the Genesis, it takes about twice (or three times in the second example) the amount of time to do the same thing. I feel like video hardware generally matters more in a video game console than the main CPU anyway. You can try to optimize on a CPU, but not on video hardware, and if it's like the SNES, you can add more processing power with an expansion chip (again, I never said it would be easy, it's just possible), but you (unfortunately) can't add more overdraw. (No, the Yoshi's Island method is not a substitute for overdraw, tepples.)

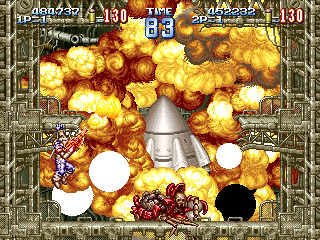

This is pretty random, and although I haven't been doing anything SNES related for the past week or so (I think you know why), I wonder. I plan on making both characters able to carry a rocket launcher which will (obviously) cause an explosion. You won't be able to shoot more than one rocket at a time, so there will be no more than 2 explosions onscreen at the same time. The main problem is that the explosions are going to be 64x64 pixels each, so there will (obviously) be 1/4 of sprite VRAM gone. There will also only be about 2 32x32 sprites left I can animate, so I figure that I'd double buffer the explosions so there will be 3/8 of sprite VRAM taken up (there will be the 2 64x64 explosions and 2 64x32 for the double buffering), but I'll only be using about 2/5 of the DMA bandwidth. I plan on cutting the top and bottom 8 pixels of the screen if they are not visible anyway, and if it comes down to it, I'll cut off 16 more pixels for DS resolution, but I'm only going to do that if I have to. I was originally going to make the scoreboard out of sprites, but I'm not sure how that's going to fit in the sprite VRAM...
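The VRAM fractions in that plan can be checked with a little arithmetic. This sketch assumes 4bpp sprite tiles (32 bytes per 8x8 tile) and 16 KiB of sprite-addressable VRAM; the helper name is made up.

```python
from fractions import Fraction

BYTES_PER_TILE = 32       # one 8x8 tile at 4 bits per pixel
SPRITE_VRAM = 16 * 1024   # assumed: 512 tiles reachable by sprites

def sprite_bytes(width, height):
    """Bytes of tile data needed for one sprite of the given pixel size."""
    return (width // 8) * (height // 8) * BYTES_PER_TILE

explosions = 2 * sprite_bytes(64, 64)   # two 64x64 explosions
buffers = 2 * sprite_bytes(64, 32)      # two 64x32 double-buffer areas

print(Fraction(explosions, SPRITE_VRAM))            # 1/4
print(Fraction(explosions + buffers, SPRITE_VRAM))  # 3/8
```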

Espozo wrote:

even though the SNES is clocked about 1/2 as fast as the Genesis, it takes about twice (or three times in the second example) the amount of time to do the same thing.

Cycles, not time. In FastROM, the procedure would take roughly 6/7 as long on the SNES as on the Genesis, assuming neither one can be further optimized (they look pretty simple, but they're out of context, and I don't know 68K).
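For anyone who wants to redo that estimate, here is a small Python sketch converting the posted cycle counts to wall-clock time. It assumes NTSC master clocks, 6 or 8 master clocks per 65816 cycle (fast or slow access), 7 master clocks per 68000 cycle, and that exactly one of the 21 SNES cycles is a slow WRAM access.

```python
MASTER_SNES = 21_477_272   # Hz, NTSC SNES master clock
MASTER_MD = 53_693_175     # Hz, NTSC Mega Drive master clock
FAST, SLOW = 6, 8          # master clocks per 65816 cycle (fast / slow access)
M68K_DIV = 7               # master clocks per 68000 cycle

def snes_seconds(fast_cycles, slow_cycles):
    return (fast_cycles * FAST + slow_cycles * SLOW) / MASTER_SNES

def md_seconds(cycles):
    return cycles * M68K_DIV / MASTER_MD

snes = snes_seconds(fast_cycles=20, slow_cycles=1)  # 11 + 10 cycles, one WRAM read (assumed)
md = md_seconds(22 + 32)                            # both 68000 loops as posted
print(round(snes * 1e6, 2), round(md * 1e6, 2), round(snes / md, 2))
```

Under those assumptions the ratio comes out a little under 0.85, which is in line with "roughly 6/7".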

Yes, that's what I meant. (The SNES's CPU obviously isn't twice as fast.)

I felt like posting a YouTube video of me ranting about how it is bullshit to design game logic around perceived CPU speed. I'll still do it if I have enough time today.

I did notice that the second loop could be optimized a little by rearranging the loop.

Code:

65816 code:

lsr //2

bcc + //3 5

-;

iny //2

lsr //2 4

bcs - //3 7

+;

68000 code:

lsr.w #1,d0 //8

bcc + //10 18

-;

addq.b #1,d2 //4

lsr.w #1,d0 //8 12

bcs - //10 22

+;

The 68000 still takes 3 times the cycles.

Decided to redo the 68000 version to see how fast I could get (going by the code originally posted):

Code:

moveq #0, d0 ; 4

lea @Table(pc), a1 ; 8

@Loop:

move.b (a0)+, d0 ; 8

move.b (a1,d0.w), d1 ; 14

bmi.s @Loop ; 10 usually

; ...

@Table:

dc.b $00, $01, $00, $02 ; %0000, %0001, %0010, %0011

dc.b $00, $01, $00, $03 ; %0100, %0101, %0110, %0111

dc.b $00, $01, $00, $02 ; %1000, %1001, %1010, %1011

dc.b $00, $01, $00, $FF ; %1100, %1101, %1110, %1111

That's 12 cycles for init and 32 cycles per iteration. For the sake of comparison, at 65816's usual speeds that'd be about 6 and 16, respectively.

Um, ouch, although now I want to see psycopathicteen go ahead and try the same using look-up tables. Pretty sure that if there wasn't a dare (or I was heavily starved for cycles) I'd have tried an approach similar to his.

PS: if anybody wonders, bmi.s would take 6 cycles when not branching. That'd only happen in the last iteration though, so I've decided to not count that possibility for the purpose of profiling.
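To see what the 16-entry table encodes, here is a Python sketch that rebuilds it from the rule "index of the lowest clear bit, or $FF when all four bits are set" and compares it with the posted bytes. The function name is made up for the example.

```python
def first_free_bit(mask):
    """Index of the lowest clear bit in a 4-bit mask, or 0xFF if all four are set."""
    for bit in range(4):
        if not mask & (1 << bit):
            return bit
    return 0xFF

TABLE = [first_free_bit(m) for m in range(16)]
POSTED = [0x00, 0x01, 0x00, 0x02, 0x00, 0x01, 0x00, 0x03,
          0x00, 0x01, 0x00, 0x02, 0x00, 0x01, 0x00, 0xFF]
print(TABLE == POSTED)  # True
```

The `$FF` entry is negative as a signed byte, which is why a single `bmi` is enough to keep scanning past full slots.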

psycopathicteen wrote:

Stef said I only got my Gunstar Heroes demo running on the SNES because I "simplified" everything to run good on the system, when my demo was actually running a much more complicated dynamic animation engine than the original game, and still had plenty of CPU time left.

Your Bad Apple demo for the SNES is proof that the SNES CPU and the 65xx architecture are actually not crappy.

The SNES CPU is "slow" because it is not running at its full speed (3.58 MHz), mainly because of the use of crappy slow WRAM

..

And add to this: how about inexperienced 65xx coders?

I saw the source code of Art of Fighting for the PCE; this game is full of 68k-style macros and, the icing on the cake, the code is 6502, not even HuC6280, and the game still runs very fast with a faked zoom.

Quote:

Stef said I only got my Gunstar Heroes demo running on the SNES because I "simplified" everything to run good on the system, when my demo was actually running a much more complicated dynamic animation engine than the original game, and still had plenty of CPU time left.

I have had some discussions with him about GH, and I told him that it is simpler than it seems.

I think you can't do an exact port, not because of the SNES CPU, but only because you cannot put the same amount of sprites on screen in H32: readability becomes terrible, and there would be heavy sprite flicker.

TOUKO wrote:

psycopathicteen wrote:

Stef said I only got my Gunstar Heroes demo running on the SNES because I "simplified" everything to run good on the system, when my demo was actually running a much more complicated dynamic animation engine than the original game, and still had plenty of CPU time left.

Your Bad Apple demo for the SNES is proof that the SNES CPU and the 65xx architecture are actually not crappy.

The SNES CPU is "slow" because it is not running at its full speed (3.58 MHz), mainly because of the use of crappy slow WRAM

..

Pretty much. People just look at 3.58 and 7.6 and say "Duh, 7.6 is biger dan 3.58." They don't know anything deeper than that. It's funny when people try to compare processor speeds by seeing how fast the screen scrolls.

Even James Rolfe does it in his second SNES vs Genesis video. (You wouldn't believe how stupid the comments are there, and I'm including some of the people who are on the SNES side.)

Quote:

Pretty much. People just look at 3.58 and 7.6 and say "Duh, 7.6 is biger dan 3.58."

True, how many times I have heard that, mainly on the best 16-bit forum, the well-known Sega-16.

Quote:

It's funny when people try to compare processor speeds by seeing how fast the screen scrolls.

And parallax? You know that the SNES can't do the millions of tons of parallax which we have in practically all MD games...

However, I like this kind of parallax. On the PCE you can only do the same with just 1 background layer, you have no choice, but these are called screen splits and not parallax because they are not overlapping.

3.580 on the Master System or 4.194 on the monochrome Game Boy is also bigger than 1.790 on the NES, yet the 6502 gets more done in each cycle than Z80 or similar chips, so it's mostly a wash. I'm under the impression that the 68000 and 65816 share a similar relationship: the latter can do twice as much Work Per Clock.

So to be able to compare work per clock on an even playing field, some time ago I invented a unit of time called gencycles. One gencycle is a fraction of a 65816 cycle intended to roughly approximate the period of one 68000 cycle. Each slow access (WRAM, slow ROM, and each byte of DMA) takes 3 gencycles, and each fast access (most I/O ports, fast ROM, and "internal operation") takes 2 gencycles. So you can cycle count a 68000 subroutine, see how many cycles it used, cycle count a 65816 subroutine that does the same thing, count gencycles, and you should get something fairly close to the machines' relative speeds.
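A minimal Python sketch of gencycle counting, applied to the slot-search loops posted earlier in the thread. The 20 fast / 1 slow split is an assumption about that snippet, and the two ratio lines only show where the "2" and "3" roughly come from if you take the NTSC master clocks (6 or 8 SNES master clocks per access, 7 Mega Drive master clocks per 68000 cycle).

```python
def gencycles(fast_accesses, slow_accesses):
    """Approximate 68000-cycle equivalents for a 65816 routine."""
    return 2 * fast_accesses + 3 * slow_accesses

print(gencycles(20, 1))   # 43, to be compared with 54 cycles for the 68000 version

# Where the weights come from (assumed NTSC master clocks):
m68k_cycle = 7 / 53_693_175
print(round((6 / 21_477_272) / m68k_cycle, 2))  # fast access, a bit over 2
print(round((8 / 21_477_272) / m68k_cycle, 2))  # slow access, a bit under 3
```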

A Genesis emulator author (I don't remember his name) profiled the 68K in some games, and its throughput is 0.7 to 0.8 MIPS at best.

This is not abnormal: the 68k was designed for workstations and servers, not for a gaming system.

Its strengths are that it is easy to code for, even in a high-level language (like C), and its 24-bit address space: you don't have to care about annoying memory bank management, and you can access a large memory space. Its strength is not its frequency/cycles ratio, which is why in 68K arcade systems the CPU manages only the game logic and lets all the custom chips do the dirty work.

The 68k profiling files are here :

http://exophase.devzero.co.uk/profiles.zip

http://forums.sonicretro.org/index.php?showtopic=33501 This thread has a lot of really stupid posts like this:

Quote:

I'd rather see it attempted with a plain vanilla SNES with nothing added. I would think these approaches are a good idea:

1. Optimize the hell out of the code and make sacrifices where it may not be noticed.

2. Maybe slow Sonic's max speed to 5 pixels per frame so that it doesn't look excessively fast.

3. Have an SCD-style pan forward when running.

4. Scale what art you can down to 80% original scale horizontally so that at least those don't look distorted.

News flash! Did it ever occur to you that deliberately causing the game to run slow at full speed, will cause **gasp** the game to run slow at full speed? Maybe that's the reason why you're having a hard time optimizing your game? The SNES isn't actually lagging, you just programmed it that way?

Oh yes, I have the same kind of discussions with Stef on a French forum about the SNES 65816 and the HuC6280, and he equates both of them with a vanilla 6502. I think all the guys on the Sonic forum do the same.

And you know, the 68k is better because of its 32-bit instructions, but in 16-bit 2D games, who cares about this?

For the PCE I mainly use 8-bit and some 16-bit values (for pointers), and the HuC6280 is clocked at 7.16 MHz, not 1.79.

At the same speed the 65816 is close to twice as fast as the 6502.

Quote:

News flash! Did it ever occur to you that deliberately causing the game to run slow at full speed, will cause **gasp** the game to run slow at full speed? Maybe that's the reason why you're having a hard time optimizing your game? The SNES isn't actually lagging, you just programmed it that way?

Yes, if you code badly you get the wrong result; the SNES CPU doesn't have many cycles to waste on bad programming.

The big advantage of the 65xx architecture is how far its code can be optimised.

For GH, if the CPU is the limiting factor, then why doesn't a similar game exist with a Super FX or SA-1?

Same for BTU: if it is the CPU which limits them to 3/4 sprites on screen, why didn't Capcom put in a faster processor for FF 2/3 like it did with the MMX series?

Simply because the sprite pixels per scanline limit is the real limiting factor, and a faster CPU cannot change this fact.

In the post above I was referring to the sonicretro guy stating "maybe slow Sonic's max speed to 5 pixels per frame so that it doesn't look excessively fast" making no sense, because lowering the pixels-per-frame movement speed of Sonic's character wouldn't speed up the CPU at all, it would just make it look like the game is lagging, even when it is running at 60fps.

It's like recording a slow-motion voice into a tape recorder. If you play it at normal speed, it will sound like slow-motion, because it was recorded that way.

You missed something:

Some Bloke on Sonic Retro wrote:

3.54MHz do not a fast system make

Dat Gramar. Anyway, I'm pretty sure it's 3.58. I know I'm nitpicking, but come on. It's as easy as typing "SNES" on google and looking at the Wikipedia page.

Quote:

Same for BTU: if it is the CPU which limits them to 3/4 sprites on screen, why didn't Capcom put in a faster processor for FF 2/3 like it did with the MMX series?

Simply because the sprite pixels per scanline limit is the real limiting factor, and a faster CPU cannot change this fact.

Again, I can't stress enough how video hardware is generally more important to a video game system than processing power. I do think they could have at least added another enemy though and just said "oh well!" when the "cheese grater effect" (that's what I like to call it anyway. Yeah, I know I'm weird) kicks in.

Quote:

In the post above I was referring to the sonicretro guy stating "maybe slow Sonic's max speed to 5 pixels per frame so that it doesn't look excessively fast" making no sense, because lowering the pixels-per-frame movement speed of Sonic's character wouldn't speed up the CPU at all, it would just make it look like the game is lagging, even when it is running at 60fps.

Yes, moving a sprite by 5 pixels or 50 is the same in terms of CPU cycles, and moving/repositioning sprites is not CPU intensive at all.

Quote:

Again, I can't stress enough how video hardware is generally more important to a video game system than processing power.

Yes, the more powerful the video hardware you have, the less processing power is needed.

But it's better to have both if possible, because with CPU power you can do more.

But for me the SNES PPU is not good in the sprite department: too convoluted and badly designed, and it forces coders to spend a big amount of CPU cycles to deal with it.

TOUKO wrote:

But for me the SNES PPU is not good in the sprite department: too convoluted and badly designed, and it forces coders to spend a big amount of CPU cycles to deal with it.

I don't think the high OAM thing is too bad, but the 1/4 of VRAM thing is certainly an issue. Not being able to use 8x8, 16x16, and 32x32 sprites at the same time is a major annoyance though. (I couldn't care less about 64x64, considering there isn't nearly enough VRAM for sprites or overdraw to justify its existence.)

Just thinking, wouldn't Sonic (or any sprite really) moving at 5fps actually take more processing power? Then you would have to deal with sub pixel velocity.

psycopathicteen> Sorry you took it as a criticism... actually i was mainly speaking about the fact you were using only 1 type of enemy, with simplistic AI, explosion animation physics and so on. I'm just shocked people really compare your demo to GH... Maybe your dynamic sprite allocation system is complex enough but still, try to fit it in a real game with all the game logic and other calculations aside to see if it still works...

Honestly GH is one of the most impressive games on Sega Megadrive, do you really believe you can replicate it accurately on the SNES which does have a weaker CPU and a dumb PPU design ? If you really believe that, sorry guys but you are really naive or just fanboy blind.

And speaking about the 65816 vs 68000, i never compared them on their clock, you definitely can't compare different CPU architecture on clock... The MD 68000 runs at 7.7 MHz compared to the 2.68 (or ~3.1 MHz with Fast ROM) 65816 but if we compare the BUS speed then the 68000 only runs at 1.92 MHz. If you want to compare these CPU on cycles then do it on BUS cycles, it's more fair

And it's why i really think the 65xx architecture is just bad: it requires very fast memory to work with and their efficiency definitely sucks. The 65816 uses faster memory than the MD 68000 and does far less with it (of course the 8 bits data BUS does not help but that is a tribute for the 65xx inspired design).

If you really want to compare 65816 versus 68000, don't adapt your 65816 code in 68000 code, of course you won't use the same algorithm on the 68000 which has plenty of registers and a 16/32 bits architecture. At least try to optimize your code to take advantage of the 16 bits data bus; here (in Sik's code) we can see we have 2 byte accesses, which is already a good indication that the original code has not been designed for the 68000. Of course sometimes you don't have the choice, but most of the time there is a way to adapt for 16 bits accesses.

Stef wrote:

psycopathicteen> Sorry you took it as a criticism...

What else is he supposed to take it as?

Stef wrote:

actually i was mainly speaking about the fact you were using only 1 type of enemy,

That's about all there is in the game.

Stef wrote:

with simplistic AI,

That's about how it looks in the game.

Stef wrote:

try to fit it in a real game with all the game logic and other calculations aside to see if it still works...

What other game logic is left exactly? Maybe a second player and other weapons, but not much else. I'm not saying that that's it, but the CPU intensive stuff is for the most part done with. Adding more levels doesn't cause slowdown, it just takes up more memory. I guess you could be talking about the bosses or the stage set pieces (like the mine cart things), but those don't look like they'd be very processor intensive.

Stef wrote:

Honestly GH is one of the most impressive games on Sega Megadrive,

I'm sure the Megadrive can do better. If you're going to pick a game to have a fetish for, pick a better game.

Stef wrote:

weaker CPU

I thought we'd gone over this...

Stef wrote:

a dumb PPU design ?

I know, the SNES only having 64 colors was a really stupid design choice. The Megadrive is way better because it has 256 colors.

Stef wrote:

If you really believe that, sorry guys but you are really naive or just fanboy blind.

I'm really not sure what to say...

Stef wrote:

And speaking about the 65816 vs 68000, i never compared them on their clock, you definitely can't compare different CPU architecture on clock... The MD 68000 runs at 7.7 MHz compared to the 2.68 (or ~3.1 MHz with Fast ROM) 65816 but if we compare the BUS speed then the 68000 only runs at 1.92 MHz. If you want to compare these CPU on cycles then do it on BUS cycles, it's more fair

I like how you made the MD go up .1 megahertz and the SNES go down .4 megahertz.

Stef wrote:

65xx architecture is just bad: it requires very fast memory to work with and their efficiency definitely sucks

It takes about twice the amount of cycles to do the same thing on the 68000 as the 65816, and we're talking about efficiency...

Stef wrote:

If you really want to compare 65816 versus 68000, don't adapt your 65816 code in 68000 code

You're right. He should try to adapt the code from the 68000 to the 65816 instead.

Stef wrote:

psycopathicteen> Sorry you took it as a criticism... actually i was mainly speaking about the fact you were using only 1 type of enemy, with simplistic AI, explosion animation physics and so on. I'm just shocked people really compare your demo to GH... Maybe your dynamic sprite allocation system is complex enough but still, try to fit it in a real game with all the game logic and other calculations aside to see if it still works...

Honestly GH is one of the most impressive games on Sega Megadrive, do you really believe you can replicate it accurately on the SNES which does have a weaker CPU and a dumb PPU design ? If you really believe that, sorry guys but you are really naive or just fanboy blind.

I don't have any idea what you're basing your assumptions on. You can argue all you want about the advantages of each processor but at the end of the day I'm still not seeing any reason that it can't be done. You haven't done any math here, you're basically saying "because it's inferior in these few respects it can't POSSIBLY run this game" which is ridiculous. Do you really think that GH is so painstakingly optimized and pushing the limits of the system so extremely that a very-nearly-equivalent system couldn't possibly do the same thing, no matter how you made use of its advantages? That is a HUGE assumption. You have absolutely nothing to back it up.

The only way you're going to prove your point is if you or somebody else actually does try to port GH to SNES. And if they fail that only proves that THEY couldn't do it - not that it can't be done. This conversation is frankly ridiculous.

Thank you Khaz. I'm 99% sure the reason psycopathicteen quit the GH port was because he got sick of it and didn't feel like finishing it, not because it wasn't possible.

Oh, it's on now!

Espozo and Stef: let's see if we can't not get the thread locked this time (ie: have something approximating a rational discussion) - it's a useful thread and it'd be a shame if this digression sank it...

Stef wrote:

And speaking about the 65816 vs 68000, i never compared them on their clock, you definitely can't compare different CPU architecture on clock... The MD 68000 runs at 7.7 MHz compared to the 2.68 (or ~3.1 MHz with Fast ROM) 65816 but if we compare the BUS speed then the 68000 only runs at 1.92 MHz. If you want to compare these CPU on cycles then do it on BUS cycles, it's more fair

And it's why i really think the 65xx architecture is just bad: it requires very fast memory to work with and their efficiency definitely sucks. The 65816 uses faster memory than the MD 68000 and does far less with it (of course the 8 bits data BUS does not help but that is a tribute for the 65xx inspired design).

We actually have clock data and instruction cycle counts for both SNES and MD, and it is possible to write equivalent code and compare wall clock time (obviously, the larger and more functionally complete the code sample the better). Complaining about 65x being a bus hog and requiring faster memory doesn't come to the point; the consoles are what they are, and have been for a quarter of a century.

On the topic of FastROM, notice how psycopathicteen's original example snippet only has a single slow-access cycle out of 21 cycles? Not all code is that insular, but to get near 3.1 MHz average speed you'd have to be doing non-indexed 16-bit direct page WRAM accesses pretty much exclusively; most instructions have a lower RAM access fraction than that.

(If you're really desperate, I think you can set direct page to $4300 and use the DMA registers as fast RAM; as long as $420B and $420C are zeroed, nothing too horrifying should happen...)
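The average-speed point can be put into numbers. This is a Python sketch under the usual assumptions (NTSC master clock of about 21.477 MHz, 6 master clocks per fast cycle, 8 per slow cycle); the cycle breakdown for a 16-bit `LDA dp` (two fast fetches, two slow WRAM reads) is also an assumption made for illustration.

```python
MASTER_HZ = 21_477_272  # NTSC SNES master clock

def effective_mhz(fast_cycles, slow_cycles):
    """Average CPU clock for a mix of fast (6-clock) and slow (8-clock) cycles."""
    master_clocks = fast_cycles * 6 + slow_cycles * 8
    return (fast_cycles + slow_cycles) * MASTER_HZ / master_clocks / 1e6

print(round(effective_mhz(20, 1), 2))  # 3.52: the posted snippet, one slow cycle in 21
print(round(effective_mhz(2, 2), 2))   # 3.07: 16-bit LDA dp from WRAM, code in FastROM
print(round(effective_mhz(0, 1), 2))   # 2.68: everything slow
```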

93143 wrote:

Espozo and Stef: let's see if we can't not get the thread locked this time (ie: have something approximating a rational discussion)

Aww...

That's no fun!

Espozo wrote:

I'm not saying that that's it, but the CPU intensive stuff is for the most part done with. Adding more levels doesn't cause slowdown, it just takes up more memory.

Compression costs CPU time. I remember reading an interview stating that Gunstar Heroes would be over 2 MB if uncompressed. The same interview states that complex jointed bosses also cost CPU time, and I'm guessing that the 68000's multiply instructions help with that.

Quote:

Stef wrote:

And speaking about the 65816 vs 68000, i never compared them on their clock, you definitely can't compare different CPU architecture on clock... The MD 68000 runs at 7.7 MHz compared to the 2.68 (or ~3.1 MHz with Fast ROM) 65816

I like how you made the MD go up .1 megahertz and the SNES go down .4 megahertz.

I'm assuming the extra .4 MHz came out of two things: slow RAM and RAM refresh.

Quote:

I'm 99% sure the reason psycopathicteen quit the GH port was because he got sick of it

The other 1% being a cease and desist letter?

93143 wrote:

to get near 3.1 MHz average speed you'd have to be doing non-indexed 16-bit direct page WRAM accesses pretty much exclusively

How many cycles does STA [$69],Y take?

Espozo wrote:

Stef wrote:

psycopathicteen> Sorry you took it as a criticism...

What else is he supposed to take it as?

Stef wrote:

actually i was mainly speaking about the fact you were using only 1 type of enemy,

That's about all there is in the game.

Stef wrote:

with simplistic AI,

That's about how it looks in the game.

Here's how I always thought the AI in Gunstar Heroes works.

Roll the dice. If it lands on X, perform action Y. Roll the dice again to determine how long before the enemy performs another move. Repeat.
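As a sketch of that guess (not of how the actual game works), the loop could look like this in Python. The action names and the 30 to 120 frame delay range are invented for the example.

```python
import random

ACTIONS = ["walk", "jump", "shoot", "throw"]  # hypothetical action names

def enemy_ai(rng, steps):
    """Yield (frame, action) pairs: pick a random action, then wait a random delay."""
    frame = 0
    for _ in range(steps):
        action = rng.choice(ACTIONS)     # roll: which move to perform
        yield frame, action
        frame += rng.randint(30, 120)    # roll again: frames until the next move (assumed range)

for frame, action in enemy_ai(random.Random(1), 3):
    print(frame, action)
```

Per enemy this costs two random numbers and a table lookup every so often, which would fit the point that the AI is unlikely to be the CPU-heavy part.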

tepples wrote:

How many cycles does STA [$69],Y take?

Two or three ROM/internal, four or five RAM. How often do you actually use direct indirect indexed long stores? Or any indirect indexed instructions?

(I've only ever programmed test codes and mockups, and I don't hack ROMs, so I don't have a good idea of what game logic actually looks like...)

...

@psycopathicteen: If you load an indexed sprite sheet into the GIMP, copy it to a new RGB file, rescale it with high-quality interpolation, and copy-paste it back into the original indexed image (delete the original first if there's any transparency involved) so as to force it back to the original palette, there should be a fairly minimal amount of work involved in touching up the resulting rescaled sprites. Just be sure to delete any graphics that don't share the palette and re-index so you know you won't get misplaced colours. This gives much better results than simple nearest-neighbour rescaling.

Proof of concept attached:

Attachment:

super_tie_fighter.gif [ 11.24 KiB | Viewed 1132 times ]


tepples wrote:

The other 1% being a cease and desist letter?

...wait, did he actually get one?

Oh wow, there's bullshit from both ends here. First the "let's limit Sonic's speed to 5 pixels per frame" on one side, then the other side taking it to mean "make the game run at 5FPS". WTF?

Also I want somebody to try the table look-up method on the 65816 to see how it performs there.

93143 wrote:

tepples wrote:

The other 1% being a cease and desist letter?

...wait, did he actually get one?

If so, I'm not aware. It was a guess. But I wouldn't put it beyond a lot of major video game publishers nowadays, especially with the growing willingness of courts to enforce exclusive rights in "look and feel".

Sik wrote:

Oh wow, there's bullshit from both ends here. First the "let's limit Sonic's speed to 5 pixels per frame" on one side, then the other side taking it to mean "make the game run at 5FPS". WTF?

I know the original quote was unknowledgeable, but what happens when you still can't get all the processing done at 30fps? I sincerely doubt that you could even make the SNES run at 5fps, and there's absolutely no question that the SNES version wouldn't run as slow as 5fps even if it could. (I know it would pain him dearly, but even Stef could admit that.)

Espozo wrote:

I sincerely doubt that you could even make the SNES run at 5fps

What kind of frame rate do you get out of games like Jurassic Park or some of the Super FX games like Doom, especially in more complex scenes?

I didn't think it ever went slower than 10. Anyway, the main question is how much the frame rate drops every time it doesn't finish processing, like if the frame rate always got cut in half to where it went 60, 30, 15, 7.5. I just don't have a clue how it works, and it can't be any number because (at least to my knowledge) you can't have a game run at 59fps.

5fps means moving once every 12 tv frames.

If it's not a fixed rate you can have non-integer divisors. e.g. 59 could be "dropped one frame every second".
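A small Python sketch of the arithmetic, assuming a nominal 60 Hz display and a game that waits for the next vblank once its logic is done. With a fixed update interval the possible rates are 60 divided by a whole number; with occasional dropped frames the average can be anything in between.

```python
REFRESH = 60  # nominal NTSC fields per second

def fixed_rates(max_divisor):
    """Frame rates available when every update takes a whole number of TV frames."""
    return [REFRESH / n for n in range(1, max_divisor + 1)]

def frames_between_updates(fps):
    return REFRESH // fps

def average_fps(dropped_per_second):
    return REFRESH - dropped_per_second

print(fixed_rates(4))             # [60.0, 30.0, 20.0, 15.0]
print(frames_between_updates(5))  # 12
print(average_fps(1))             # 59
```

So the steps are 60, 30, 20, 15, 12, 10 and so on, rather than a halving each time.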

Espozo wrote:

Sik wrote:

Oh wow, there's bullshit from both ends here. First the "let's limit Sonic's speed to 5 pixels per frame" on one side, then the other side taking it to mean "make the game run at 5FPS". WTF?

I know the original quote was unknowledgeable, but what happens when you still can't get all the processing done at 30fps? I sincerely doubt that you could even make the SNES run at 5fps, and there's absolutely no question that the SNES version wouldn't run as slow as 5fps even if it could. (I know it would pain him dearly, but even Stef could admit that.)

Wait, what game are we talking about now? x_X Bleh, ignore that, although maybe it's a good moment to split the thread since this doesn't seem to be about dynamic VRAM slot algorithms anymore (I bet that split thread would get locked rather soon like the other one, though...).

I almost don't see the point in locking topics. All that happens is that the argument gets brought elsewhere to continue. I imagine people would get tired of arguing at one point or another anyway.

Quote:

The same interview states that complex jointed bosses also cost CPU time, and I'm guessing that the 68000's multiply instructions help with that.

Yes, but you only have the player and the boss, and the multiply instruction (if used here) is slow, so you'd be better off using a LUT.

A game like Super Aleste is much more CPU intensive, and here too I think the problem with having a SNES port of AS is the sprite limit.

This game, like GH, is designed to use the MD's sprite capabilities to maximise sprites on screen with minimum flicker in H40.

Khaz wrote:

I don't have any idea what you're basing your assumptions on. You can argue all you want about the advantages of each processor but at the end of the day I'm still not seeing any reason that it can't be done. You haven't done any math here, you're basically saying "because it's inferior in these few respects it can't POSSIBLY run this game" which is ridiculous. Do you really think that GH is so painstakingly optimized and pushing the limits of the system so extremely that a very-nearly-equivalent system couldn't possibly do the same thing, no matter how you made use of its advantages? That is a HUGE assumption. You have absolutely nothing to back it up.

The only way you're going to prove your point is if you or somebody else actually does try to port GH to SNES. And if they fail that only proves that THEY couldn't do it - not that it can't be done. This conversation is frankly ridiculous.

Oh i see, "we cannot say it is not possible to make GH on SNES as nobody tried to do it"... ok, in this case maybe we could run FarCry 4 on SNES but we will never know as nobody tried it ? I admit you can always find new ways to improve / optimize your code, but in the end you need something that works properly under real game conditions. Imagine you need to use a 1 MB LUT and have the GFX organized in a very specific way with many hard coded constraints: then your engine is definitely not usable in real conditions. After the optimization you have the maths... A lot of people believe the 65816 is better because of its better IPS, but the problem is that you will never be able to clock the 65816 at the same speed as a 68000 (because of the memory). Actually even 3.58 MHz was not possible when the SNES was released; Fast ROM was only used quite late in the SNES lifetime... But anyway, i accept to compare with a 3.1 MHz 65816 (which is, i believe, an optimistic estimate of the CPU speed while it is running from Fast ROM), so let's do the maths.

memory fill:

68000 = 1 byte every 3 cycles (classic move) or ~2.2 cycles (with movem)

65816 = 1 byte every 2 cycles (STA direct page with 16-bit accumulator)

memory copy:

68000 = 1 byte every 5 cycles (classic move) or ~4.5 cycles (with movem)

65816 = 1 byte every 7 cycles (MVN) or 5 cycles (LDA/STA absolute sequence with 16-bit accumulator)

I don't know the 65816 well enough, but I believe we can't go faster, and I used the simplest addressing modes to get the fastest usable case. Even in these particular situations we can see that for basic operations such as memory fill or copy the 68000 is on par in terms of cycle count... while the 68000 has many more cycles available.
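To put those cycles-per-byte figures on a common scale, here is a rough Python sketch that converts them to bytes per second. The 7.67 MHz and 3.1 MHz clocks are the ones assumed in this post, and the names `bytes_per_second` and `throughput_table` are made up for the illustration. It ignores DMA, memory refresh and wait states, so treat the output as ballpark only.

```python
# Clock speeds as assumed in the post (estimates, not measurements).
M68K_HZ = 7_670_000
W65816_HZ = 3_100_000


def bytes_per_second(clock_hz, cycles_per_byte):
    """Raw CPU throughput for a loop costing cycles_per_byte per byte moved."""
    return clock_hz / cycles_per_byte


# (label, clock, cycles per byte), using the figures quoted above.
CASES = [
    ("fill 68000 move", M68K_HZ, 3),
    ("fill 68000 movem", M68K_HZ, 2.2),
    ("fill 65816 STA dp", W65816_HZ, 2),
    ("copy 68000 move", M68K_HZ, 5),
    ("copy 68000 movem", M68K_HZ, 4.5),
    ("copy 65816 MVN", W65816_HZ, 7),
    ("copy 65816 LDA/STA", W65816_HZ, 5),
]


def throughput_table(cases=CASES):
    """Map each label to its throughput in bytes per second."""
    return {label: bytes_per_second(hz, cpb) for label, hz, cpb in cases}


if __name__ == "__main__":
    for label, rate in throughput_table().items():
        print(f"{label:<20} {rate / 1e6:.2f} MB/s")
```

Under these assumptions the 68000 moves more bytes per second in both the fill and the copy case; changing either clock figure changes the outcome accordingly.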

We could find an unlimited number of examples, but I am thinking of the one posted on Sega-16 by tomaitheous to show that the 6502 can be fast, even for 24-bit calculations:

Code:

;24bit add for X and Y. 16:8 = 16bit for whole, 8bit for fractional

ldx #$xx ;2

jsr AddVelocity ;6

AddVelocity:

lda x_float,x ;4

clc ;2

adc x_float_inc,x ;4

sta x_float,x ;5

lda x_whole.l,x ;4

adc x_whole_inc,x ;4

sta x_whole.l,x ;5

lda x_whole.h,x ;4

adc x_whole_inc.h,x ;4

sta x_whole.h,x ;5 = 41

lda y_float,x ;4

clc ;2

adc y_float_inc,x ;4

sta y_float,x ;5

lda y_whole.l,x ;4

adc y_whole_inc,x ;4

sta y_whole.l,x ;5

lda y_whole.h,x ;4

adc y_whole_inc.h,x ;4

sta y_whole.h,x ;5 = 41

rts ;6

;41+41+6+6+2 = 96 cycles

The point of the routine is to handle object displacement (player ship or enemies), and the claim was that the 6502 could even be faster here than a 68000 in terms of cycle count (Steve Snake wrote a routine which was above 100 cycles for the 68000). But actually, reworking the data structure and the code a bit for the 68000 led to this:

Code:

.loop:

move.l (a0)+,d0 ; 12 X_inc

move.l (a0)+,d1 ; 12 Y_inc

add.l d0,(a0)+ ; 20 X += X_inc

add.l d1,(a0)+ ; 20 Y += Y_inc

dbra d7, .loop ; 10

74 cycles.

OK, we were comparing against the 6502, which is not fair, and I'm certain we can lower the initial 96-cycle count a lot with the 65816. But even if you are able to get the calculation done in 40 cycles with the 65816, you are still below the 68000's performance level.

Considering 7.67 MHz against 3.1 MHz, you need a ratio of ~2.5 to be equivalent to the 68000, so here, with 74 cycles for the 68000, you would have to do it in 74/2.5 ≈ 30 cycles to be on par... and this time I really doubt you can make it fit in 30 cycles with the 65816.
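The cycle tallies and the break-even figure can be re-checked with a few lines of Python. This is only a sanity check of the arithmetic; the per-instruction cycle counts are copied from the listings as posted, the clocks are the ones assumed in this post, and `break_even_cycles` is a name invented for the sketch.

```python
M68K_HZ = 7_670_000    # Genesis 68000 clock used in the post
W65816_HZ = 3_100_000  # estimated effective 65816 clock used in the post

# Cycles for one axis of the 6502 routine: lda/clc/adc/sta, then two lda/adc/sta groups.
M6502_AXIS = [4, 2, 4, 5, 4, 4, 5, 4, 4, 5]
# Two axes plus rts (6), jsr (6) and ldx (2).
M6502_TOTAL = 2 * sum(M6502_AXIS) + 6 + 6 + 2

# 68000 loop: two move.l, two add.l to memory, one dbra.
M68K_TOTAL = 12 + 12 + 20 + 20 + 10


def break_even_cycles(m68k_cycles, m68k_hz=M68K_HZ, other_hz=W65816_HZ):
    """Cycle budget the slower-clocked CPU has in the same wall-clock time."""
    return m68k_cycles * other_hz / m68k_hz


if __name__ == "__main__":
    print("6502 total:", M6502_TOTAL)
    print("68000 total:", M68K_TOTAL)
    print("clock ratio:", round(M68K_HZ / W65816_HZ, 2))
    print("65816 budget:", round(break_even_cycles(M68K_TOTAL), 1))
```

With the exact ratio (about 2.47 rather than the rounded 2.5) the budget still comes out just under 30 cycles.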

Stef wrote:

Oh I see, "we cannot say it is not possible to make GH on SNES as nobody tried to do it"... OK, in that case maybe we could run Far Cry 4 on the SNES, but we will never know as nobody tried it?

The audacity of this guy, making such claims about what is and is not possible on the SNES when he admits that:

Stef wrote:

I don't know the 65816 well enough

If you don't know enough then maybe you aren't qualified to be dictating what can and cannot be done on the system, huh? For example, are you even familiar with the concept of DMA? I would think that has some bearing on your calculations. And furthermore you missed my point entirely; the point was that pointing out subtle differences where the SNES doesn't come out on top is not proving ANYthing about what games can and can't run on it. This conversation is still ridiculous.

I'm sorry for continuing to post off topic.

You see! Stupid shit like this happens if you don't be aggressive and take action.

Stef wrote:

I don't know the 65816 well enough

I don't know enough about the 68000, but you don't see me picking fights. If you're here just to bitch about things, go somewhere else.

You know, why don't we just do a topic split right here and now for a "Useless Hate" thread.

Khaz wrote:

If you don't know enough then maybe you aren't qualified to be dictating what can and cannot be done on the system, huh?

I have played with many CPUs with different architectures in my life as a programmer, and the first assembly I ever touched (maybe 20 years ago) was actually 6502 assembly (on a VIC-20). So even if I am not currently programming the 65816 and don't know its ISA by heart, I have a perfect understanding of its architecture and a good view of its capabilities.

Quote:

For example, are you even familiar with the concept of DMA? I would think that has some bearing on your calculations.

Are you serious ? I just wanted to compare the CPU performance just to point out the problem with the 65xx architecture.

Quote:

And furthermore you missed my point entirely; the point was that pointing out subtle differences where the SNES doesn't come out on top is not proving ANYthing about what games can and can't run on it.

Oh really? So please tell me how you can quantify it then? The CPU is the heart of the system... if CPU A can't compute as much as CPU B, then necessarily you can't replicate games from system B on system A at the same speed, just pure logic. Honestly, I am really amazed that some people still dare to compare the 65816 to the 68000; the latter is so much more advanced that there is no competition. Even with a 65816 running at 3.1 MHz you barely get about half the performance of a 7.7 MHz 68000, which requires slower memory; that is the point. You can definitely find particular situations where the 65816 will perform well (and why not even outperform the 68000), but generally the 68000 will be the clear winner, and by a large margin.

Espozo wrote:

You see! Stupid shit like this happens if you don't be aggressive and take action.

I don't know enough about the 68000, but you don't see me picking fights. If you're here just to bitch about things, go somewhere else.

You know, why don't we just do a topic split right here and now for a "Useless Hate" thread.

Why do you need to be that aggressive? I was just replying to the hilarious stuff I read in this topic, and I came with arguments and numbers. Still, I agree it is drifting off topic and we should split it, but IMO it can be an interesting discussion if people try to think and speak without their biased point of view.

Quote:

So even if I am not currently programming the 65816 and don't know its ISA by heart, I have a perfect understanding of its architecture and a good view of its capabilities.

No, and I've told you all along, the 65816 and the HuC6280 are not the 6502.

The HuC6280 is faster than a vanilla 6502 and runs at 7.16 MHz; the 65816 is faster than the other two at the same clock.

Do you remember you claimed that the 68k was 4x faster than the 65816??

I told you they are close for a gaming machine, and at 2.58 the 68k is still faster, but not by a lot.

TOUKO wrote:

No, and I've told you all along, the 65816 and the HuC6280 are not the 6502.

The HuC6280 is faster than a vanilla 6502 and runs at 7.16 MHz; the 65816 is faster than the other two, but runs at a lower clock than the 6280.

Of course the 65816 is faster than a 6502. That's why I said we can lower the cycle count of the previous code by a large margin on the 65816 (from 96 cycles with the 6502, I guess we can get down to at least 50 cycles on the 65816).

Quote:

Do you remember you claimed that the 68k was 4x faster than the 65816??

Please, I just invite you to find where I claimed that, OK?

As usual you probably misinterpreted my words or took them out of context.

I always said the 65816 in the SNES (so clocked at ~3 MHz with Fast ROM) is at about half the performance level of the 7.7 MHz 68000.

Quote:

I told you they are close for a gaming machine, and at 2.58 the 68k is still faster, but not by a lot.

Yeah i know that but you are just wrong

@2.68 Mhz the 68000 is actually *a lot* faster.

Quote:

Please, I just invite you to find where I claimed that, OK?

As usual you probably misinterpreted my words or took them out of context.

I always said the 65816 in the SNES (so clocked at ~3 MHz with Fast ROM) is at about half the performance level of the 7.7 MHz 68000.

No Stef, I'm sure of it, but I'm not sure I can find where in the "snes vs md" topic (tons of posts).

Quote:

Yeah i know that but you are just wrong

@2.68 Mhz the 68000 is actually *a lot* faster.

A lot?? You're very optimistic.

Are you claiming again that Bad Apple in 8 MB is impossible on the SNES?? I don't see the "a lot" factor here.

Quote:

I'm just shocked people really compare your demo to GH

Huuuum, maybe like you compare your starfox demo ??

Or axelay scroll demo with nothing around ??

To be honest, I am really impressed by the SNES version of Bad Apple, but even more impressed by the fact that they figured out a codec fast enough to work on the 65816 and to fit the video and sound in 8 MB.

Still, even if 90% of the demo is done, the last 10%, which consists of getting rid of the last slowdowns, will be really tricky as the code is already quite optimized. There is also the sound streaming issue, but I believe that one is easier to sort out.

The MD version of the demo uses more complex compression methods. I used about 5 or 6 compression schemes for the tilemap and about 7 or 8 for the tile data... honestly, I think my code is definitely not optimal on that point.

For sure the native 2bpp mode of the SNES helps the compression. In the MD version I need to encode two 2bpp frames into a single 4bpp frame, and that definitely reduces the compression ratio. I also have 25% more data to unpack compared to the SNES (wider resolution).
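As a rough illustration of what packing two 2bpp frames into one 4bpp frame can look like, here is a Python sketch. The bit layout (frame A in bits 0-1, frame B in bits 2-3) and the function names are assumptions made for the example, not necessarily what the MD demo does. The 25% figure is consistent with the usual 320-pixel (H40) versus 256-pixel line widths.

```python
MD_WIDTH = 320    # Mega Drive H40 mode
SNES_WIDTH = 256  # standard SNES width


def pack_pixels(frame_a, frame_b):
    """Combine two lists of 2-bit pixels into one list of 4-bit pixels."""
    return [((b & 3) << 2) | (a & 3) for a, b in zip(frame_a, frame_b)]


def unpack_pixels(packed):
    """Recover both 2-bit frames from the 4-bit pixels."""
    frame_a = [p & 3 for p in packed]
    frame_b = [(p >> 2) & 3 for p in packed]
    return frame_a, frame_b


def extra_data_ratio(wide=MD_WIDTH, narrow=SNES_WIDTH):
    """Fraction of additional pixels per line in the wider mode."""
    return wide / narrow - 1


if __name__ == "__main__":
    packed = pack_pixels([0, 1, 2, 3], [3, 2, 1, 0])
    print(packed, unpack_pixels(packed), extra_data_ratio())
```

With a layout like this, one hypothetical way to choose which frame is shown would be a palette that ignores the other frame's two bits, but that part is speculation.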

Quote:

Huuuum, maybe like you compare your starfox demo ??

Or axelay scroll demo with nothing around ??

OK for Axelay, but the Star Fox demo is different: what costs the most, and *by far*, in this case is the 3D math and the 3D rendering. Once you have the engine done, you can consider making a game from it without much trouble. Any game logic will have an insignificant cost on the CPU compared to the 3D stuff.

Quote:

OK for Axelay, but the Star Fox demo is different: what costs the most, and *by far*, in this case is the 3D math and the 3D rendering. Once you have the engine done, you can consider making a game from it without much trouble. Any game logic will have an insignificant cost on the CPU compared to the 3D stuff.

Agreed, but it is not proof of anything; you can have enough CPU resources for your demo, but not enough for collisions and everything around them.

Sorry, but your arguments can be applied to the GH demo too. The difficult part is having many sprites on screen with collisions and without any slowdown; the rest is easy to do. In GH the sprites are simply clones, and I think all their moves fit in VRAM because they are very limited. The same goes for the AI and physics, which are really simplistic.

Quote:

The MD version of the demo uses more complex compression methods. I used about 5 or 6 compression schemes for the tilemap and about 7 or 8 for the tile data

Maybe, but the final result is the same. If you want to impress, do the same with just the 68k, and not 68k+Z80.

TOUKO wrote:

Maybe, but the final result is the same. If you want to impress, do the same with just the 68k, and not 68k+Z80.

Should the SNES demo be done without the SPC700?

DoNotWant wrote:

Should the SNES demo be done without the SPC700?

It's different. I think most of the audio work in the SNES version is done by the 65816; it streams audio directly to the SPC.

But I don't know exactly what sort of techniques (audio and tiles) psycopathicteen uses.

In the MD case all the audio was done by the Z80, leaving a big amount of 68k cycles free.

I think we should stop digressing; this is not the right topic for a CPU comparison.

Well, I originally typed out something much larger and "aggressive", but I've decided to play "nicer". Here we go...

Stef wrote:

Why do you need to be that aggressive ?

Do you see me starting arguments? That'd be like if I went to Sega-16 just to say "The 68000 is trash, the 65816 is better because it can handle Space Megaforce."

Stef wrote:

their biased point of view.

...

Stef wrote:

You can definitely find particular situations where the 65816 will perform well (and why not even outperform the 68000), but generally the 68000 will be the clear winner, and by a large margin.

Why do you find that the 68000 "is the clear winner" but nobody else does? Is it because of their "biased point of view"? Anyway, psycopathicteen wrote code that performed better on the 65816, and you don't like it because he "ported" it over to the 68000 and it was faster on the 65816, so you write code on the 68000 and "port" it over to the 65816 and it's faster on the 68000, to show that the 68000 is faster because it handled that code better, completely disregarding what psycopathicteen wrote. From what I got from the code, the 65816 and the 68000 are better at different things, but you don't want a draw, you want to win. I understand that the Genesis's 68000 is still faster than the SNES's 65816, but not even relatively close to 4x. (Don't even pretend you didn't say that.)

Anyway, can we finally settle on a draw and not have another one of these issues?

Espozo wrote:

Do you see me starting arguments? That'd be like if I went to Sega-16 just to say "The 68000 is trash, the 65816 is better because it can handle Space Megaforce."

Did you notice that I basically replied to psycopathicteen and argued about why his demo is not enough to say "we can port GH to the SNES"? Of course you are more than welcome on Sega-16 to have some technical talks, as long as your arguments are valid and you are not aggressive towards other members.

Stef wrote:

Why do you find that the 68000 "is the clear winner" but nobody else does? Is it because of their "biased point of view"?

Nobody else does? Are you speaking about the general opinion or the one we find here?

I really wonder which world you live in... Have you ever had a look at a specialized hardware forum?

Quote:

Anyway, psycopathicteen wrote code that performed better on the 65816, and you don't like it because he "ported" it over to the 68000 and it was faster on the 65816, so you write code on the 68000 and "port" it over to the 65816 and it's faster on the 68000, to show that the 68000 is faster because it handled that code better, completely disregarding what psycopathicteen wrote. From what I got from the code, the 65816 and the 68000 are better at different things, but you don't want a draw, you want to win.

But you can take the code from psycopathicteen if you want: take the *best* code for your 65816, but then also take the best code for the 68000... then you can compare. And of course there is a winner; again, these CPUs do not compete in the same category. Even against a 65816 clocked at 3 MHz (which is fast considering the required memory speed), the MD's 68000 has the edge by a large amount. You don't want to accept it, but that is just the simple truth. The only advantage of the 65xx series CPUs is the price. It's a really cheap CPU, but then you have to pay for it elsewhere, in its generally very poor "efficiency".

Quote:

I understand that the Genesis's 68000 is still faster than the SNES's 65816 but not even relatively close to 4x. (Don't even pretend you didn't say that.)

Oh yeah, I said it, you know better than me.

Are you Touko's cousin?

I said the Genesis's 68000 is faster than the SNES's 65816, about twice as fast, which is already a nice difference when you consider the Genesis is 2 years older. A 6 MHz 65816 should be on par with a 7.7 MHz 68000, which is actually not a good score for the 65816.

Stef wrote:

I have played with many CPUs with different architectures in my life as a programmer, and the first assembly I ever touched (maybe 20 years ago) was actually 6502 assembly (on a VIC-20).

Then it sounds like you should have enough experience to know better than to try to pick this particular fight. The war between Genesis and SNES has been going on for decades now. Like any war that lasts decades, nobody has emerged a clear winner. There are no fanboys left - they have grown into fanmen.

The Sega Genesis was the first console I ever had. I loved it to death back then and I still do now. If you forced me to pick a side, I would be on YOUR SIDE. The reason I'm against you right now is because you're trying to make a ridiculous point. You're throwing around wild speculation that you believe GH can't be replicated, with nothing that specifically backs that point up.

When I say to "back it up", I mean with some serious analysis that warrants consideration. Something like "to reproduce GH you'd need these tile sizes in this video mode, you'd need this much time for sprite routines and this much time to process the AI and this much time for the rest, and due to the much faster way the Genesis does ____ there is no possible way the SNES can do the same job." That would warrant a response. Saying "This specific sample of code is slower on SNES" is totally meaningless, and you being a programmer yourself should know that.

Stef wrote:

Are you serious ? I just wanted to compare the CPU performance just to point out the problem with the 65xx architecture.

Uh, yes, I'm quite serious. DMA makes a huge difference to the speed of writing/copying blocks of data to either WRAM or VRAM. Since you were doing all your comparisons based on direct page instructions and not considering this advantage I don't think your assessment was fair.

Stef wrote:

Quote:

And furthermore you missed my point entirely; the point was that pointing out subtle differences where the SNES doesn't come out on top is not proving ANYthing about what games can and can't run on it.

Oh really? So please tell me how you can quantify it then?

I DON'T.

I don't go around trying to say that Game X is too complicated for System Y because that would be a foolish thing to do - you can't prove it and you're just inviting people to prove you wrong. You talk exclusively about CPU power, which again you should know better than. The SNES is more than a 65816. The Genesis is more than a 68000.

Anyways, I don't see the point of this conversation here. I don't see why somebody would come into the SNES Development forums to try to convince people that the SNES is an inferior console. What is it you're trying to accomplish, Stef? Right now I only see two possible motivations: Either you're just the biggest GH fan on earth and you're trying to goad somebody into porting it for you, or you just hate the SNES and you're trying to distract us all from our work here.

Regardless, I think the best course of action would be to split this discussion out of this (extremely valuable!) thread. And I second my call for a dedicated "SNES VS GENESIS BLOODBATH" forum where we can just HAVE all these ridiculous discussions and keep them away from the actual work being done. I don't think it is possible to prevent people from debating it altogether.

I am sorry, again, for posting more.

Espozo wrote:

You know, why don't we just do a topic split

PM me the split point and I'll handle it.

Okay I have a lot of posts to reply to.

The slowdown in Bad Apple is mostly the 65816 waiting for the SPC700 to respond to it.

Somebody said that I kept the frames in VRAM in GH. I could've done that because I only used 4 animation frames for enemies, but I used a dynamic animation engine instead because I anticipated eventually running out of VRAM.

psycopathicteen wrote:

The slowdown in Bad Apple is mostly the 65816 waiting for the SPC700 to respond to it.

That can be worked around by polling the SPC700 in other parts of your 65816 code, such as during tile decoding.

Anyway, I'll proceed to lighten the mood by missing the point:

To do GH, you'd need either Sega CD or MSU1 to stream large background and guitar parts. The Nintendo DS version is a 1 Gbit cartridge, and all other versions come on optical disc.

To do TF3, you'd need not only 3D acceleration but also a time machine. Valve can't count to three, and last time I checked, it was making enough money selling hats in TF2 that it doesn't need to make a TF3.

It seems to me that using two HDMA channels, one indirect addressed to read from a buffer (or straight from ROM, if in repeat mode, though bank boundaries might be trouble) and one direct addressed to write the control byte, should save most of the time, as long as the SPC700 can keep up. That way you wouldn't need to repopulate a whole HDMA table every frame.

Did you uncover a reason not to use HDMA, or is it just not working yet?

Khaz wrote:

Then it sounds like you should have enough experience to know better than to try to pick this particular fight. The war between Genesis and SNES has been going on for decades now. Like any war that lasts decades, nobody has emerged a clear winner. There are no fanboys left - they have grown into fanmen.

The Sega Genesis was the first console I ever had. I loved it to death back then and I still do now. If you forced me to pick a side, I would be on YOUR SIDE. The reason I'm against you right now is because you're trying to make a ridiculous point. You're throwing around wild speculation that you believe GH can't be replicated, with nothing that specifically backs that point up.

It's not even about SNES versus Genesis. I owned both consoles back in the day, and people who know me can confirm I always said I played more on my SNES than on my MD, and that my all-time favorite game is Super Metroid. It's just about technical misinformation; I think there is nothing worse than that... What annoys me here is reading that the 65816 is a beast and even surpasses the 68000 because it takes fewer cycles to execute comparable operations. I just want to explain why we should not compare on cycle counts and why the 65816 is definitely a poor choice as a main CPU (for any system actually, not only the SNES). I know the SNES has other flaws, such as the convoluted PPU with its split OAM, sprite size restrictions and memory arrangement, but every system has its flaws (the Mega Drive has only 4 palettes to play with and the sound system has some nice holes too), and honestly I think they can be worked around, at least partially. But here, in the SNES, the CPU is definitely and *by far* the main issue of the whole system. Honestly, I tried to develop on the SNES, but the CPU is so underpowered that you can't properly use the graphics features on offer. Accessing memory in banks of 32 KB / 64 KB on a 16-bit system (released in 1990) is just ridiculous and painful for developers; it is as if I were coding on an 8-bit system with boosted graphics and audio hardware: totally unbalanced and very unpleasant. You can believe that GH is possible on the SNES if you want, that is your right, but the truth is that the CPU alone is a good reason not to see it happening. The guys from Treasure left Konami because they wanted more freedom in their development, but also because they felt limited by the SNES CPU. GH relies a lot on the power offered by the 68000, so it would definitely not work on the SNES because of its *slow CPU*, that is...

If you are interested, here is an interview from the guys who actually worked on GH:

http://megadrive.me/2011/11/03/an-inter ... -treasure/

And a relevant part of it:

Quote:

Q: Konami is a big 3rd party for Nintendo, so why are you now making games for Sega?

A: I’ve always been fascinated with hardware. People are constantly comparing Mega Drive to SNES, saying that the SNES has more colors etc…

But the Mega Drive has a 68000 processor, which is very easy for programmers to work with. I was a programmer for years, making games for the SNES, and I can tell you, the hardware is a pain in the butt. If consumers look at a still shot, they may think the SNES is better, but actually, if you tried to put Gunstar Heroes onto the SNES there would be no way. See those bosses? On the SNES they would slow down, that movement requires sooo much computation. It could only be done on the Sega hardware.

...

as I said the hardware is very easy to work with. All things considered, the 68000 is a very good CPU allowing room for experimentation while the SNES hardware limits you to their design standards. Scaling and rotation can be implemented in the Sega software, forget it on the SNES.

Now feel free to ignore it... and continue to believe it can be done on the SNES.

Quote:

When I say to "back it up", I mean with some serious analysis that warrants consideration. Something like "to reproduce GH you'd need these tile sizes in this video mode, you'd need this much time for sprite routines and this much time to process the AI and this much time for the rest, and due to the much faster way the Genesis does ____ there is no possible way the SNES can do the same job." That would warrant a response. Saying "This specific sample of code is slower on SNES" is totally meaningless, and you being a programmer yourself should know that.

Any program is just about dealing with data: read data, interpret it, transform it, modify it...

So taking the performance of very basic operations such as reading and copying data is already a good starting point for evaluating what you can achieve with the CPU. Of course that's not enough; I'm just saying it's a good starting point.

If you want more advanced maths to compare these CPU then we can go in it but trust me you won't like the result.

Stef wrote:

Uh, yes, I'm quite serious. DMA makes a huge difference to the speed of writing/copying blocks of data to either WRAM or VRAM. Since you were doing all your comparisons based on direct page instructions and not considering this advantage I don't think your assessment was fair.

Of course I know what DMA is (and the MD also has DMA), but the point was to compare the CPUs (see my previous point).

Quote:

I DON'T.

I don't go around trying to say that Game X is too complicated for System Y because that would be a foolish thing to do - you can't prove it and you're just inviting people to prove you wrong. You talk exclusively about CPU power, which again you should know better than. The SNES is more than a 65816. The Genesis is more than a 68000.

OK, you don't, I try... and what do you expect? Do we need to reverse engineer the entire GH game to see if you can port the engine 1 to 1? Do you at least know what the 68000 CPU can do? Again, we can go further in the calculations, but do we really need to??

psycopathicteen wrote:

The slowdown in Bad Apple is mostly the 65816 waiting for the SPC700 to respond to it.

Too bad to waste CPU time there, but you will probably try to get back to the HDMA method?

Reading the SPC700 documentation, it looks like you have a 4-byte communication port between the SPC and the main CPU. When you feed the SPC from HDMA, I guess you do something like write 1 byte of compressed data to port 0 and write a specific value to port 1 to notify the SPC that data is ready, and then the SPC can read it and acknowledge by writing 0 back to port 1? From this scheme you can probably build a BRR circular buffer inside the SPC RAM from which the DSP will fetch its samples, but I guess synchronizing reads and writes is the whole problem (avoiding reading where writes are occurring).
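To make that guessed handshake concrete, here is a small Python model of it. It is a sketch under assumptions, not the demo's actual protocol: it treats each direction of the four ports as a separate latch, and instead of clearing port 1 to zero it uses a changing token that the SPC side echoes back as the acknowledge. All class and function names are invented for the example.

```python
class Ports:
    """Four byte-wide ports in each direction (modelled as separate latches)."""

    def __init__(self):
        self.cpu_to_spc = [0, 0, 0, 0]
        self.spc_to_cpu = [0, 0, 0, 0]


class SpcReceiver:
    """SPC-side loop body: copy incoming bytes into a ring buffer."""

    def __init__(self, ports, ring_size):
        self.ports = ports
        self.ring = [0] * ring_size
        self.write_pos = 0
        self.last_token = 0

    def poll(self):
        token = self.ports.cpu_to_spc[1]
        if token == self.last_token:
            return False  # nothing new from the main CPU
        self.ring[self.write_pos] = self.ports.cpu_to_spc[0]
        self.write_pos = (self.write_pos + 1) % len(self.ring)
        self.last_token = token
        self.ports.spc_to_cpu[1] = token  # acknowledge by echoing the token
        return True


def cpu_send(ports, receiver, data):
    """Main-CPU side: write a byte, signal it, then wait for the acknowledge."""
    token = 0
    for byte in data:
        token = (token % 255) + 1  # cycles 1..255, so it always changes
        ports.cpu_to_spc[0] = byte & 0xFF
        ports.cpu_to_spc[1] = token
        while ports.spc_to_cpu[1] != token:  # this wait is the stall being discussed
            receiver.poll()


if __name__ == "__main__":
    ports = Ports()
    spc = SpcReceiver(ports, ring_size=4)
    cpu_send(ports, spc, [0x10, 0x20, 0x30, 0x40, 0x50])
    print(spc.ring, spc.write_pos)
```

The model has no reader draining the ring, so old data is simply overwritten; a real player would also need the read/write synchronization mentioned above.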

Quote:

Somebody said that I kept the frames in VRAM in GH. I could've done that because I only used 4 animation frames for enemies, but I used a dynamic animation engine instead because I anticipated eventually running out of VRAM.

Does your dynamic engine re-allocate every frame, or does it keep tiles in VRAM as long as it can?

Quote:

See those bosses? On the SNES they would slow down, that movement requires sooo much computation. It could only be done on the Sega hardware.

Ahahah, I've seen many statements like this for every console, from many developers. I call this self-congratulation.

"We are so good that we can only prove it on ....(replace by your favorite machine)"

Are we really going to start this again?

Stef wrote:

Quote:

Q: Konami is a big 3rd party for Nintendo, so why are you now making games for Sega?

A: I’ve always been fascinated with hardware. People are constantly comparing Mega Drive to SNES, saying that the SNES has more colors etc…

But the Mega Drive has a 68000 processor, which is very easy for programmers to work with. I was a programmer for years, making games for the SNES, and I can tell you, the hardware is a pain in the butt. If consumers look at a still shot, they may think the SNES is better, but actually, if you tried to put Gunstar Heroes onto the SNES there would be no way. See those bosses? On the SNES they would slow down, that movement requires sooo much computation. It could only be done on the Sega hardware.

...

as I said the hardware is very easy to work with. All things considered, the 68000 is a very good CPU allowing room for experimentation while the SNES hardware limits you to their design standards. Scaling and rotation can be implemented in the Sega software, forget it on the SNES.

I don't consider this proof of anything. It just means that this particular programmer, using the particular technique he used in one platform, probably wouldn't be able to replicate the same effects in some other platform with the same performance. It's arrogant to say that something is impossible simply because you haven't figured out a way to do it.

Fortunately, there's more than one way to implement the same idea, and if you design your algorithms around the limitations and strong points of each CPU/system, you're likely to succeed in implementing that idea. Unless we're talking about larger generational gaps, which is not the case of SNES vs. Genesis (although that didn't stop Chinese pirates from porting several 16-bit titles to the NES, with varying degrees of success).

Something that happens to me sometimes is that I spend a lot of time thinking of how to implement something non-trivial, and when I finally find the answer, it becomes my point of reference on how to perform that particular task, and I'll base all my performance estimations off of that. Then comes along someone else with a different point of view, and a new idea on how to do the same thing, and I realize that I didn't have the absolute answer after all. Sometimes it's not even someone else, I often think of alternative ways to implement something out of the blue, and the new way can be even twice as fast as the old solution.

In before someone calls me a SNES fanboy: Out of the 2 systems, the Genesis is my favorite. I grew up with it and I find the overall aesthetics of Genesis games more interesting. But I also like the SNES very much, and I don't consider either system obviously superior to the other.

This is not a question of who's better. Stef cannot accept that some impressive games exist for the SNES or PCE because the two have an 8-bit CPU.

I saw some shmups on PCE/SNES that are at least as impressive as TF3/4 or GH in terms of CPU usage and sprites on screen.

TOUKO wrote:

two have a 8 bits CPU ..

You mean 1, right?

TOUKO wrote:

I saw some shmups on PCe/snes that are at less, as impressive as TF3/4 or Gh in term of cpu usage and sprites on screen .

If I recall correctly, one of the TF games on the Genesis actually has some slowdown. (Not Gradius III level, but definitely not far enough away from it to brag.) Anyway, I said that Space Megaforce looked as good as Gunstar Heroes in that there is the same amount of sprites and stuff flying around (and other CPU-taxing stuff), but he complained that it didn't have enough animation and that the game seemed "stiff" because of it. They're spaceships, they don't have arms and limbs. All you need to do is create multiple frames of the ships at different angles, which the game does.

Also TOUKO, I think you mean "at least", not "at less". It completely changes the meaning of the sentence.

A lot of big name companies used very traditional methods, and were afraid to deviate from the standard.

And programmers didn't necessarily have a lot of experience with the systems or CPUs they were using, which explains that kind of conservative programming.

Being a good programmer allows you to code for practically anything if you have proper documentation, but that doesn't mean you'll become a master at it overnight.

Are you referring to animation, psycopathicteen? Most of the "clever" ideas I thought of (like the sprite VRAM slot thing, even though you actually made the idea into code; I'm going to do this, but I've been a bit busy) I thought were standard. I looked at the DKC games in the bsnes debugger and assumed that all games were as solid from a technical standpoint as they were. Then I looked at other games and saw how they did stupid stuff like storing all the palettes for every enemy in the entire game in CGRAM, even though there is only one type of enemy on screen at a time, among other things. I had thought that DKC did the same thing that I thought of for finding VRAM for sprites, but it appears that Rare's way was actually simpler.

New post just came in while I was typing:

tokumaru wrote:

Being a good programmer allows you to code for practically anything if you have proper documentation, but that doesn't mean you'll become a master at it overnight.

I've learned that with x86.

Espozo wrote:

If I recall correctly, one of the TF games on the Genesis actually has some slowdown.

I thought all of them did o.o (although I believe TF4 slows down right in the first level, around the area with the large snake-like enemy). Also, a reminder that they can't play PCM while anything else is playing either (even TF4, which is from 1992, and that was inexcusable by then).

tokumaru wrote:

And programmers didn't necessarily have a lot of experience with the systems or CPUs they were using, which explains that kind of conservative programming.

Deadlines usually play a much bigger role (forcing programmers to just stick to something quick that works rather than bothering to come up with good code).

Sik wrote:

I thought all of them did o.o

They probably do, I just never played them.

I was referring to game physics. Konami/Treasure relied heavily on 16.16 physics which is convenient for the 68000, but not for the 65816. On the 65816 it's better to do physics in a signed 8.8 format with the coordinates being in 16.8 format.

I'm not going to lie, I don't have the slightest clue what "16.16" means. Is it just that the first 16 represents pixels and the second 16 is subpixels, so that when you make metasprites you only dump the first 16 bits? I guess this would be convenient for the 68000, because it can do some 32-bit instructions. Really though, this doesn't seem hard to fix. Physics for y would only need to be 8.8, because 256 pixels is large enough to cover the height of the screen and hide 32 pixel tall sprites.
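For readers with the same question, here is a minimal Python sketch of the formats mentioned above, assuming the usual reading of "N.M" as N integer bits followed by M fractional (subpixel) bits. The helper names are hypothetical and only illustrate the arithmetic; they are not taken from any game or from the posts in this thread.

```python
# Hypothetical helpers for illustration only (not from the thread).

def to_fixed_16_16(pixels):
    """Encode a pixel count as an unsigned 16.16 value (32 bits)."""
    return int(round(pixels * (1 << 16))) & 0xFFFFFFFF

def split_16_16(value):
    """Return (whole pixels, subpixels) of a 16.16 value."""
    return (value >> 16) & 0xFFFF, value & 0xFFFF

def sign_extend_16(value):
    """Interpret a 16-bit word as a signed number."""
    value &= 0xFFFF
    return value - 0x10000 if value & 0x8000 else value

def add_speed_16_8(position_16_8, speed_8_8):
    """Add a signed 8.8 speed to a 16.8 position (24 bits, wraps around)."""
    return (position_16_8 + sign_extend_16(speed_8_8)) & 0xFFFFFF

def pixel_of_16_8(position_16_8):
    """Drop the 8 subpixel bits and keep the 16-bit pixel coordinate."""
    return (position_16_8 >> 8) & 0xFFFF
```

In the 16.8 plus 8.8 arrangement both values share the same 8 fractional bits, so the speed only has to be sign-extended before the add; the pixel coordinate is whatever sits above the low byte.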

Espozo wrote:

Physics for y would only need to be 8.8, because 256 pixels is large enough to cover the height of the screen and hide 32 pixel tall sprites.

Ideally, physics happens in level space, not screen space.

Yeah, level space would make 8.8 pointless.

What I do these days with the 68000 though is store coordinates as 16-bit integers and speed as 8.8 fixed point, and then make use of subpixel faking to work around that. Kind of lame, but saves memory, makes things simpler (by being able to ignore subpixels almost everywhere) and should be easily doable on either system really. Heck, on 3rd generation systems this should be easily feasible.

Amusingly, Miniplanets uses 8-bit coordinates (!!). This was to make looping maps easier, I just abuse overflow =P
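A rough Python sketch of the overflow trick, assuming the looping map is exactly 256 units around so that 8-bit wraparound is the loop itself. How Miniplanets really implements this is not shown in the thread; the function names below are made up for illustration.

```python
# Hypothetical illustration of 8-bit coordinates on a looping map.

def move_wrapped(x, dx):
    """Move along the loop; overflow past 255 (or below 0) wraps around."""
    return (x + dx) & 0xFF

def wrapped_distance(a, b):
    """Signed shortest distance from a to b on the 256-unit loop."""
    d = (b - a) & 0xFF
    return d - 0x100 if d & 0x80 else d
```

The second helper shows why this is convenient: subtracting two 8-bit positions and reading the result as a signed byte gives the shortest way around the loop with no special case at the seam.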

Quote:

also TOUKO, I think you mean "at least", not at less. I completely changes the meaning of the sentence.

Oups yes

Quote:

You mean 1, right?

The 65816 is a CPU with an 8-bit data bus, so for me it can't be a true 16-bit CPU, just like the 68k can't be a true 32-bit one.

A game isn't only about physics. Physics is used in few games, and I don't think it was applied to all sprites.

You must count all the branches/tests, variable reads/writes, subroutine calls and interrupts, all the stuff that is far slower on the 68k...

For my PCE stuff, I never used any 32-bit operations. On a 2D system I think they're useless, or at least not really an advantage.

Sik wrote:

What I do these days with the 68000 though is store coordinates as 16-bit integers and speed as 8.8 fixed point, and then make use of subpixel faking to work around that.

That's a good idea! It really isn't any different from 16.16 fixed point, because subpixel coordinates don't really help collision detection and things, but they do help velocity. It actually seems more like an optimization than a compromise, and is obviously useful on the 68000 (as 32-bit instructions are supposed to be slower). Well, that's one area an SNES version of Gunstar Heroes could be faster in (because Treasure was supposedly using 16.16 fixed point, even though it was unnecessary).

Me wrote:

I completely changes the meaning of the sentence.

Dat grammar. I meant to say "it". (You're not the only one who has trouble writing, TOUKO...)

Sik wrote:

What I do these days with the 68000 though is store coordinates as 16-bit integers and speed as 8.8 fixed point

How do you actually add the 16-bit integer coordinate to the 8.8 speed efficiently on the 68k? You probably waste some cycles doing that, no? 16.16 is very practical: you use 32 bits only for the speed addition and 16 bits when sending the position to VRAM or for collision calculation.

Well how do you change accumulator (or whatever here) width on the 68000? On the 65816, It only takes about 1 or 2 instructions, and I'm pretty sure the instructions only take 2 cycles, which is the smallest amount of cycles any of the instructions have. This wouldn't be the first time different approaches are better for different processors.

Espozo wrote:

Well how do you change accumulator (or whatever here) width on the 68000?

I don't think you change this globally, instead there are different opcodes for the different data sizes. Does that mean that it takes 0 cycles to change register widths?

Stef wrote:

How do you actually add the 16-bit integer coordinate to the 8.8 speed efficiently on the 68k? You probably waste some cycles doing that, no? 16.16 is very practical: you use 32 bits only for the speed addition and 16 bits when sending the position to VRAM or for collision calculation.

There's a ton of spare time, I'd rather reduce memory usage instead (but then again there's also a lot of spare memory...). The other issue is one that I had a lot with Project MD (which used 16.16), suddenly now you can't rely on pixel precision for a lot of things and that can become an issue, although granted Project MD made it even worse by accounting for NTSC and PAL speed differences.

As for adding efficiently, huh, first I calculate the subpixel offset for the frame (this is done once when the frame starts):

Code:

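moveq #0, d6 ; d6 = 0, collects the reversed bits

move.w (Anim), d7 ; d7 = per-frame counter

rept 8

add.b d7, d7 ; shift the top bit of the low byte out into X

roxr.b #1, d6 ; rotate X into the top of d6's low byte

endr

move.w d6, (Subpixel) ; offset in 0..255 for this frame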

Then when adding the speed to the position it's just a matter of adding that offset, then bit shifting (d2 = 8.8 speed, d0 = 16-bit position):

Code:

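add.w (Subpixel), d2 ; 8.8 speed plus this frame's offset

asr.w #8, d2 ; keep only the whole pixels (signed shift)

add.w d2, d0 ; move the 16-bit position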
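To see why this works, here is a small Python model of the two snippets above. It is only a sketch: it assumes the frame counter advances by one each frame, that d2 holds a scratch copy of the speed, and that values stay small enough that 16-bit wraparound never matters. The function names are made up for illustration.

```python
# Hypothetical model of the subpixel faking trick (names are not from the thread).

def reverse_byte(n):
    """Bit-reverse the low byte, standing in for the value stored in (Subpixel)."""
    return int(format(n & 0xFF, "08b")[::-1], 2)

def step(position, speed_8_8, anim):
    """One frame: add the offset to the 8.8 speed, keep whole pixels, move."""
    offset = reverse_byte(anim)            # add.w (Subpixel), d2
    whole_pixels = (speed_8_8 + offset) >> 8   # asr.w #8, d2 (floors, like asr)
    return position + whole_pixels         # add.w d2, d0

def simulate(speed_8_8, frames=256, start=0):
    """Run the per-frame step with a counter that increments every frame."""
    position = start
    for anim in range(frames):
        position = step(position, speed_8_8, anim)
    return position
```

Over any 256 consecutive frames the offset takes every value from 0 to 255 exactly once, so the fractional part of the speed carries into a whole pixel on exactly that many frames out of 256. The position never stores subpixels, yet the average speed comes out exact, and the bit-reversed order spreads the extra pixels evenly instead of bunching them together.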

Espozo wrote:

Well how do you change accumulator (or whatever here) width on the 68000? On the 65816, It only takes about 1 or 2 instructions, and I'm pretty sure the instructions only take 2 cycles, which is the smallest amount of cycles any of the instructions have. This wouldn't be the first time different approaches are better for different processors.

The size is part of the instruction, no flags controlling it at all. (heck, I think the 65816 is the only CPU I know that does the flags thing, neither 68k nor Z80 nor x86 do it)

Sik wrote:

There's a ton of spare time, I'd rather reduce memory usage instead (but then again there's also a lot of spare memory...). The other issue is one that I had a lot with Project MD (which used 16.16), suddenly now you can't rely on pixel precision for a lot of things and that can become an issue, although granted Project MD made it even worse by accounting for NTSC and PAL speed differences.

Hehe, I more often find there is plenty of spare memory (64 KB is a lot, except for very specific cases), so I tend to prefer optimizing for speed (within reason I mean, nothing insane).

Quote:

As for adding efficiently, huh, first I calculate the subpixel offset for the frame (this is done once when the frame starts):

Code:

moveq #0, d6

move.w (Anim), d7

rept 8

add.b d7, d7

roxr.b #1, d6

endr

move.w d6, (Subpixel)

What does (Anim) contain at first? And about this:

Code:

rept 8

add.b d7, d7

roxr.b #1, d6

endr

It looks like you're trying to reverse bits from (Anim) and store result into (Subpixel) right ?

Quote:

Then when adding the speed to the position it's just a matter of adding that offset, then bit shifting (d2 = 8.8 speed, d0 = 16-bit position):

Code:

add.w (Subpixel), d2

asr.w #8, d2

add.w d2, d0

I have to admit I don't really get the point of doing that O_o The asr.w #8 is very taxing... It seems overcomplicated to me, but I am probably missing something. It's OK for a system where you don't have 32-bit operations, but here on the 68k you just need to do:

Code:

add.l d1, d2

where d1 is the 16.16 speed and d2 the 16.16 coordinate (which we can easily extend to 32.16 for world position with an extra addx). I guess you use the offset information for some other calculations, but shouldn't the offset be different for each object?

Sik wrote:

The size is part of the instruction, no flags controlling it at all. (heck, I think the 65816 is the only CPU I know that does the flags thing, neither 68k nor Z80 nor x86 do it)

It's another reason why I dislike this CPU: the designers tried hard to preserve 6502 compatibility, but at the cost of some awful choices (like this insane register size change instruction).

x86 has the weird real mode too... I remember you had to prefix an instruction with 0x66 to make it 32-bit ("mov word" then becomes "mov long"), and in flat mode it was the opposite (i.e., the 0x66 prefix selected a 16-bit instruction instead of the default 32-bit). But that is definitely different from the 65816.

Stef wrote:

Hehe, I more often find there is plenty of spare memory (64 KB is a lot, except for very specific cases), so I tend to prefer optimizing for speed (within reason I mean, nothing insane).

You also program in C and we all know that GCC for 68000 is... kind of crap. Especially with optimizations disabled (and I see that people don't want to use anything above -O1 out of fear of breaking things, even though if you break any hardware access with optimizations enabled that means your code is probably wrong and you probably misused volatile).

Eh, each to their own =P I just have too much to spare on both ends of the spectrum. Lately I'm literally using RAM as a giant buffer to decompress data into.

Stef wrote:

What does (Anim) contain at first?

Oh, just a generic counter that increments every frame. I normally use it to do the timing of the animations of most things (hence "anim"), so I don't have to waste time giving everything its own counter (also helps keeping everything synchronized).

Anyway, just a value that increments every frame.

Stef wrote:

It looks like you're trying to reverse bits from (Anim) and store result into (Subpixel) right ?

Yep. Only the bottom byte though (since that's the size of the fractional part in speed values).
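As a sanity check on the rept loop, here is a short Python model of what the add.b / roxr.b pair does to the low byte, tracking the X flag explicitly. It is a hand-written illustration, assuming only the low bytes of d6 and d7 matter (as stated above); the function name is invented.

```python
# Hypothetical instruction-level model of the eight add.b / roxr.b pairs.

def reverse_low_byte(anim):
    d7 = anim & 0xFF   # only the bottom byte of (Anim) takes part
    d6 = 0             # moveq #0, d6
    for _ in range(8): # rept 8
        # add.b d7, d7: doubling shifts left; the old bit 7 lands in X
        x = (d7 >> 7) & 1
        d7 = (d7 << 1) & 0xFF
        # roxr.b #1, d6: rotate right through X, so X enters at bit 7
        # (bit 0 leaves into X, but the next add.b overwrites it)
        d6 = (x << 7) | (d6 >> 1)
    return d6
```

The bit shifted out first (the old bit 7) gets pushed right seven more times and ends up in bit 0, which is exactly a bit reversal of the byte.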

Stef wrote:

I have to admit I don't really get the point of doing that O_o The asr.w #8 is very taxing... It seems overcomplicated to me, but I am probably missing something. It's OK for a system where you don't have 32-bit operations, but here on the 68k you just need to do:

Code:

add.l d1, d2

where d1 is the 16.16 speed and d2 the 16.16 coordinate (which we can easily extend to 32.16 for world position with an extra addx). I guess you use the offset information for some other calculations, but shouldn't the offset be different for each object?

Then everything needs to account for the possibility of subpixels, that's the issue. Remember this is only done when applying speeds, every other operation just treats positions as 16-bit integers.