I really cannot stand people who use "logic" to excuse whatever scientifically unsound, nonsensical argument they make to fit their narrative. The whole "it's logic" excuse doesn't fly. People might as well just say "it's magic" or "I have a larger dick size, so my opinion is right" because, ultimately, what difference does it make?

Another variation on the "it's logic" excuse is the "my argument is backed by research" excuse. What it really means is that their arguments are backed by other people's speculation, without any personal investigation. You might as well say "somebody pointed a gun to my head and forced me to believe it."

I think that I agree, to a point... Although I may also add a couple of things:

-Life sucks, and can depress you when you least expect it (do you think it's 42?!?), but this is my experience.

-Logic can be random and dumb, as we know, but in the right hands (electronically speaking, for example: making NES games or even soldering logic boards onto a computer) it can be the smartest and best way to make a game or a computer work. Even a business for a living!

-Have you heard of these things called Picross/Nonogram/Oekaki puzzles? They are logic puzzles too.

psycopathicteen wrote:

I really cannot stand people who use "logic" to excuse whatever scientifically unsound, nonsensical argument they make to fit their narrative. The whole "it's logic" excuse doesn't fly. People might as well just say "it's magic" or "I have a larger dick size, so my opinion is right" because, ultimately, what difference does it make?

Do you have any example of what you mean ?

And are you referring to people who use fallacious logic in order to bring up fake arguments, or simply to people who mention "it's logic" when no logic is involved?

Yeah, examples would probably clarify.... I don't remember ever seeing anyone using "logic" as an argument unless there was a pretty solid reason to.

Vulcans are such bs artists

Bregalad wrote:

psycopathicteen wrote:

I really cannot stand people who use "logic" to excuse whatever scientifically unsound, nonsensical argument they make to fit their narrative. The whole "it's logic" excuse doesn't fly. People might as well just say "it's magic" or "I have a larger dick size, so my opinion is right" because, ultimately, what difference does it make?

Do you have any example of what you mean ?

And are you referring to people who use fallacious logic in order to bring up fake arguments, or simply to people who mention "it's logic" when no logic is involved?

Every debate between the 68000 and the 65816 is like that.

Now I need to know why debates between 68000 and 65816 even exist

Because Sega fanboys are butt hurt that I can pull off action games on the SNES.

Ah yeah, lots of ignorant statements thrown around in the "snes games have slowdown because the CPU is slow" ballpark. I thought you were talking from a programmer's point of view.

There seems to be a lot of butthurt on both sides.

There wouldn't be quite this much hate for the 65816 if a judge hadn't decided that Pixar Animation Studios owns what amount to exclusive rights in the concept of a sentient unicycle.

Are you suggesting that people would have a different impression of the SNES CPU if DMA Design weren't sued over Unirally ?_?

My unread posts feed looks funny right now.

BOO LOGIC!

Attachment:

boo_logic.png [ 2.22 KiB | Viewed 3696 times ]


Edit: Ha ha, Bregalad made the complementary joke in that thread too.

Itching to start the thread "why i like cubase and protools more than logic"

Sumez wrote:

Are you suggesting that people would have a different impression of the SNES CPU if DMA Design weren't sued over Unirally ?_?

Yes. It would have at least led to a more nuanced debate about what makes a system or game "fast".

tepples wrote:

Sumez wrote:

Are you suggesting that people would have a different impression of the SNES CPU if DMA Design weren't sued over Unirally ?_?

Yes. It would have at least led to a more nuanced debate about what makes a system or game "fast".

Huh. The SNES was released in 1990. Uniracers wasn't released until '94. In my small corner of the world, people had long given up the debates about console speeds by then.

Also, Unirally was released without problems, to a moderate amount of hype, too! They weren't sued until after its release, and it remains a common and relatively popular game.

Besides, there are plenty of other SNES games without slowdown.

The problem isn't even widespread

gauaau wrote:

Uniracers wasn't released until '94. In my small corner of the world, people had long given up the debates about console speeds by then.

The debates moved from your "small corner of the world" to the Internet, such as newsgroups and web forums.

Sumez wrote:

They weren't sued until after its release, and it remains a common and relatively popular game.

The lawsuit by the company that is now a Disney subsidiary still blocked a longer print run, sequels, and crossover appearances.

Sumez wrote:

Besides, there are plenty of other SNES games without slowdown.

But not many with slams against SEGA's mascot that are as direct as the "Hedgehog Mode" and "Not Cool Enough" parts.

I guess it's a question of perspective? I never even heard of the lawsuit until many, many years later.

I also never really saw a relation between Unirally and Sonic back in the day. I can see it now that you mention it, but I don't think the game was ever intended as a "Sonic killer". It's a racing game with a focus on stunts and showing off; no one ever talked about it being blazingly fast.

I think I'll have to dig up some of the old magazines from back then, and see if anyone talked about it. I remember the game getting quite a lot of coverage.

Sumez wrote:

I guess it's a question of perspective? I never even heard of the lawsuit until many, many years later.

And others may have never heard of the game in the first place because it couldn't get a cartridge rerelease, Virtual Console rerelease, or trophy cameo in Smash Bros.

Uniracers is also kind of unimpressive, to be honest.

It's fast, sure, but the tracks are also totally barren and visually hyper-repetitive. And as far as I remember it was only ever one-on-one, right?

This isn't just 20/20 hindsight, either; Wikipedia's "Reception" section implies that this was consensus at the time of release, too.

adam_smasher wrote:

Uniracers is also kind of unimpressive, to be honest.

It's fast, sure, but the tracks are also totally barren and visually hyper-repetitive. And as far as I remember it was only ever one-on-one, right?

I love Uniracers, and I think it's one of my most memorable games for the SNES.

I never thought it was supposed to be technically impressive at all, though. That wasn't why it was good. It was just a fun game.

Oh, yeah, I agree. It's one-of-a-kind and a ton of fun. And as repetitive as the visuals are, I love them too - they're really stylish. But I don't see it swaying many 16-bit Console Warriors then or now.

(not that much of anything would sway them...or that it matters.)

psycopathicteen wrote:

Every debate between the 68000 and the 65816 is like that.

So, what about ignoring them ? This debate has already been done over and over, and every time the conclusion was the same.

adam_smasher wrote:

This isn't just 20/20 hindsight, either; Wikipedia's "Reception" section implies that this was consensus at the time of release, too.

Of course, back then I couldn't just go on the internet and scan through aggregate review sites, so my impression is only based on a few contemporary reviews, word of mouth, and the general atmosphere around what games were coming out, but I remember most people loving the game. I didn't care for it myself as it's a racing game, but I'd think it did quite well.

And yeah, it's kinda off-topic... as you said yourself, it's not really an impressive game.

Bregalad wrote:

psycopathicteen wrote:

Every debate between the 68000 and the 65816 is like that.

So, what about ignoring them ? This debate has already been done over and over, and every time the conclusion was the same.

I have a breaking point.

To make sure we're all on the same page about this 65816 vs. 68000 CPU debate, the conclusion last time was as follows:

- Data bandwidth is a draw

- Processing speed without large multiplies and divides is a draw

- 68000 has an advantage for 16x16 multiplies and 32/16 divides when interrupt-driven raster effects are not in use

- 68000 has an advantage for high-level languages (such as C) because of the 65816's segmented architecture and dearth of registers

Anything I missed?

tepples wrote:

Anything I missed?

That's about right. The 65816 itself is honestly the least of the SNES's hardware problems. For one, you've got that slow-ass, way-bigger-than-it-needs-to-be RAM that limits the CPU.

I missed the backstory, but the whole point of "logic" is that it can be independently verifiable using the same (old and established) rules for everyone, increasing the chances that a statement is true as more unconnected people test it.

Did I miss something? Or are you bristling at someone's logical conclusions?

I'm only sensing rectal discomfort again...

All the logic in the world won't save you if your premises are false. An argument using good logic and bad premises is valid but not sound.

ccovell wrote:

I missed the backstory, but the whole point of "logic" is that it can be independently verifiable using the same (old and established) rules for everyone, increasing the chances that a statement is true as more unconnected people test it.

Did I miss something? Or are you bristling at someone's logical conclusions?

I feel like "logic" is a moving goal post. In order to back up a statement you must:

-Be very good at writing essays.

-Point out the obvious a lot

-Cite hundreds of sources

-Remember every detail from every website you've ever read ever

-Be a robot

I had a guy once tell me that cow's milk couldn't possibly be good for humans, because it was for calves. I pressed him for more detailed reasoning, and "It's only logical!" was literally all he could say.

Kinda bugged me, but oh well...

tepples wrote:

All the logic in the world won't save you if your premises are false. An argument using good logic and bad premises is valid but not sound.

True, true. That is also part of the verification process: do we agree on all the premises before hearing an argument.

You don't have to be a savant with an encyclopedic memory; just be culturally & scientifically up-to-date. And pursue knowledge rather than ignorance.

Also, you can train your senses for bullshit, which is an important part of logical self-defence, too.

For example, that cow's milk argument, that 93143 mentioned, was uttered by an obvious idiot (who probably has a clouded mind).

Cow's milk being, or not being, good for humans should stay close to arguments about the composition, chemicals, toxicity of milk, since the argument depends on the meaning of "good" = healthful in this case.

However, saying that it's "for" calves goes off the rails into implications of intentionality, as though there is a god-given list of rules for what living organisms can and cannot, or should not consume. Heck, you can eat earthworms if you like, and get nutrients from them, too. Even if they are "for" fish.

This bullshit in a person's argument (or train of thought) should be easy to spot, but I guess it takes practice.

This is the Blast Processing crap? How to put a MD fool in their place.

Mayhem in Monsterland is on a Commodore 64, which is 8-bit and runs at 1 MHz, and it scrolls half as fast as Sonic: 8 pixels per frame. Truth be told, it could do 16 pixels per frame; it's just that that is unplayable for the game and kind of pointless. So the fact that the MD does it is nothing special; 2x a C64 for a 16-bit 7 MHz processor is kind of lame. To be fair, MIM is only horizontal and not 8-way; however, give a C64 a cart and Sonic speeds in 8-way full colour can be reached. Which is fair, as the MD has carts too.

So a SNES with a cart at 2.8 MHz: easy. At 3.5 MHz: oddly, just as easy.

Are the 68K vs 65816 argument conclusions in a clock-for-clock regard or 8 vs 3.5 MHz? I would expect that clock for clock the 65816 would punch the 68K pretty hard in most cases, like a 6502 does a Z80 at the same clock.

Unirally was awesome, lots of fun. The simple graphics were needed so your brain could process and anticipate what was next. Putting in more complicated graphics would have just distracted and weakened the game, in my opinion. And then the soundtrack....

Never knew about the Pixar thing; always did wonder why no sequel.

I think the cow's milk argument ultimately comes down to some medical reports that are often repeated in newspapers and magazines referring to this or that study, but that guy didn't know them or care to remember them.

Overall they can be distilled to:

-Countries with a "milk culture"/higher average milk intake also have more cases of sclerosis

-Most people around the world are intolerant of the lactose found in cow's milk. It is assumed you can maintain a tolerance by drinking it since childhood. Many still become intolerant over time, even if they've been brought up on cow's milk.

-Overconsumption is carcinogenic (as is overconsumption of a lot of foodstuffs)

-There are some ties (of an importance unknown to me) to diabetes

-and to higher cholesterol levels, too.

Sclerosis seems to be the widest relation. Also, what defines overconsumption? Those details rarely pass the media filter. In the sclerosis case you can at least put a rough line between having a glass of milk for lunch and maybe dinner for a good part of your life, and having a few drops in your coffee, I think?

Oziphantom wrote:

always did wonder why no sequel.

DMA Design happened to release a new series a few years later which would turn out to be a pretty big cash cow, unfortunately.

You obviously weren't blessed with an uncle with a perpetual motion machine proposal in his trunk.

I wonder if he ever did make it to DC.

Probably not. Logic!

Logic leads you to the conclusion that everyone and everything, even the everything that is nothing, will not exist in 10^100 years.

Quote:

Mayhem in Monsterland is on a Commodore 64, which is 8-bit and runs at 1 MHz, and it scrolls half as fast as Sonic: 8 pixels per frame.

The very idea to use scrolling speed as a measurement of "CPU power" is utter nonsense and goes against any kind of logic.

As for the milk debate, yes, the logic is fallacious. That cow's milk is harmless for cows does not mean it is harmful for humans, or that it isn't for that matter; it just proves nothing. And I guess we all agree that industrially exploiting human milk would be... a weird idea at least.

Not any more than doing it with cows, but that's a completely different discussion I guess

You know something is awfully wrong when they manage to keep the price for a litre of milk just below the price for the same of mundane bottled water...

psycopathicteen, if you want to show off the capabilities of the SNES, I think you should work on your game.

The fact that there is more Genesis/Megadrive homebrew than SNES homebrew is a problem that we should all work to correct.

Also, it was the graphics (color and mode 7) and music that made SNES games stand out. Don't focus so much on processing speed.

Bregalad wrote:

The very idea to use scrolling speed as a measurement of "CPU power" is utter nonsense and goes against any kind of logic.

I try to reverse engineer this argument, and I end up with two plausible premises:

I. It takes time to decode each column of a map.

II. It takes time to copy the decoded column to video memory.

More CPU speed benefits I. So does more ROM, which allows the developer to tilt the time-space tradeoff of map compression toward less time and more space. So does more RAM, which allows the engine to do more of the decoding in advance. More CPU speed or suitably flexible DMA benefits II. If you disagree, I'm willing to revise these premises.

Map decoding in my experience doesn't involve a lot of multiplication or division other than by powers of two. This means the 68000 in the Genesis is on par with the S-CPU. In turn, I'd estimate the S-CPU is about three times as fast as the NES CPU, with a faster clock and 16-bit indexing.
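
To make the two premises concrete, here is a minimal Python sketch, assuming a made-up map format in which each column is stored as (run length, tile number) pairs. The names `decode_column` and `copy_column`, and the plain list standing in for video memory, are hypothetical and only for illustration; no real engine is being quoted.

```python
def decode_column(rle_pairs):
    """Premise I: expand (run_length, tile) pairs into one column of tile numbers."""
    column = []
    for run_length, tile in rle_pairs:
        column.extend([tile] * run_length)
    return column


def copy_column(vram, map_width, x, column):
    """Premise II: write the decoded column into a row-major tilemap.

    x wraps around map_width, the way a scrolling tilemap is usually reused.
    """
    x %= map_width
    for y, tile in enumerate(column):
        vram[y * map_width + x] = tile


# Hypothetical 4x4 tilemap filled with tile 9, then one new column written at x = 5 (wraps to 1).
vram = [9] * 16
column = decode_column([(2, 0), (1, 5), (1, 7)])
copy_column(vram, 4, 5, column)
```

A heavier compression scheme would make `decode_column` slower and the stored map smaller, which is the time-space tradeoff mentioned above; `copy_column` is the part a DMA unit could take over.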

I mean, I broadly agree with Bregalad here, scroll speed is a dumb metric.

But FWIW, tepples, it's not just the raw map data: there's plenty of bookkeeping that moving through a game world entails - loading and unloading entities for instance, or even just more frequent collision detection and physics for the player (unless you want them to go flying through walls).

tepples wrote:

Bregalad wrote:

The very idea to use scrolling speed as a measurement of "CPU power" is utter nonsense and goes against any kind of logic.

I try to reverse engineer this argument, and I end up with two plausible premises:

I. It takes time to decode each column of a map.

II. It takes time to copy the decoded column to video memory.

There's also not really any performance benefit of having a max scroll speed that is less than 8 pixels per frame, because then you'd need extra code to distribute the "work load" between frames.

This is also why I don't think distributing collision across multiple frames is a good way to "optimize" a game. It probably adds too much extra overhead sorting all these collisions.

psycopathicteen wrote:

Bregalad wrote:

psycopathicteen wrote:

Every debate between the 68000 and the 65816 is like that.

So, what about ignoring them ? This debate has already been done over and over, and every time the conclusion was the same.

I have a breaking point.

Why would you read or care about these internet debates about vintage CPUs for vintage game systems, and then identify the arguments and motivations for the side you don't like as personally and emotionally offensive?

In other words, ignore them.

psycopathicteen wrote:

There's also not really any performance benefit of having a max scroll speed that is less than 8 pixels per frame, because then you'd need extra code to distribute the "work load" between frames.

Yet plenty of NES games distribute the work load.

Super Mario Bros. has its infamous(?) 21-frame counter.

Super Mario Bros. 3 glitches if it's forced to move more than 4 pixels per frame.

Thwaite and RHDE do some things every frame, some things every other frame, and some things only 10 times a second, when frames mod 6 (NTSC) or 5 (PAL) equals a certain value. (They need this 10 Hz time base anyway to clock the game's timer.)

Haunted: Halloween '85 and its sequel run many enemies' AI only when frames is congruent to the enemy's position in the enemy table mod 8.
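
As a rough illustration of the frame staggering described above, here is a Python sketch. The function names and task labels are hypothetical, and nothing here is taken from the source of the games mentioned; it only shows the modulo arithmetic involved.

```python
def tasks_for_frame(frame, region="NTSC"):
    """Return the (hypothetical) job names that would run on this frame."""
    tasks = ["every_frame"]
    if frame % 2 == 0:
        tasks.append("every_other_frame")
    # 60 Hz / 6 and 50 Hz / 5 both give a 10 Hz time base.
    period = 6 if region == "NTSC" else 5
    if frame % period == 0:
        tasks.append("ten_hz_tick")
    return tasks


def enemies_to_think(frame, enemy_count, stride=8):
    """Slots whose AI runs this frame: slot index congruent to the frame count mod stride."""
    return [slot for slot in range(enemy_count) if slot % stride == frame % stride]
```

With a stride of 8, each enemy slot gets its turn once every 8 frames, and the per-frame cost is a single compare per slot rather than any sorting or queueing.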

tepples wrote:

psycopathicteen wrote:

There's also not really any performance benefit of having a max scroll speed that is less than 8 pixels per frame, because then you'd need extra code to distribute the "work load" between frames.

Yet plenty of NES games distribute the work load.

Super Mario Bros. has its infamous(?) 21-frame counter.

Super Mario Bros. 3 glitches if it's forced to move more than 4 pixels per frame.

Thwaite and RHDE do some things every frame, some things every other frame, and some things only 10 times a second, when frames mod 6 (NTSC) or 5 (PAL) equals a certain value. (They need this 10 Hz time base anyway to clock the game's timer.)

Haunted: Halloween '85 and its sequel run many enemies' AI only when frames is congruent to the enemy's position in the enemy table mod 8.

With scrolling it makes more sense for the NES, because the NES doesn't do VRAM dma.

I didn't think about using an object's slot number to determine whether to run collision or not. That would actually speed stuff up a bit.

Bregalad wrote:

Quote:

Mayhem in Monsterland is on a Commodore 64, which is 8-bit and runs at 1 MHz, and it scrolls half as fast as Sonic: 8 pixels per frame.

The very idea to use scrolling speed as a measurement of "CPU power" is utter nonsense and goes against any kind of logic.

As for the milk debate, yes, the logic is fallacious. That cow's milk is harmless for cows does not mean it is harmful for humans, or that it isn't for that matter; it just proves nothing. And I guess we all agree that industrially exploiting human milk would be... a weird idea at least.

I think this is a holdover from the Spectrum vs CPC vs C64 debates. The Spectrum and CPC got put down because they were slow; game scrolling was jerky and slow. The C64 won because it had smooth scrolling, and by the time you get to MIM the scrolling was insane, while the CPC and Spectrum were just under-powered. The reason for the slow scrolling on a CPC and Spectrum is lack of CPU power, while the C64 was able to do it because of hardware. But then a lot of earlier games had small windows and fixed-colour scrolling, which was blamed on CPU power. In the 8-bit computer world, scrolling is very much a measure of CPU power.

So if you were one of the downtrodden CPC/Spectrum losers and then you got shortchanged again on the MD, you would cling to the fact that the MD seems to beat the SNES in the "old ways", and that Sega advertised it as doing so. Meanwhile, those of us who actually know how the machines work know that they both have pixel-based hardware scrolling across multiple screens' width, and the argument is pointless.

Also see Speedy Gonzales - Los Gatos Bandidos which is a bad sonic clone

Aaaah, the good old debate...

We all know that the 65816 is a crappy slow 8-bit CPU, and the 65xxx are all inefficient processors with an inefficient architecture.

And the best: I have also read that the 68k is more efficient with memory...

And obviously, we all know that 32-bit operations are often used and are essential for 16-bit games.

If we are speaking about a PC, I'll go for the 68k every day, because it is tailored for that purpose, but for a limited 16-bit game system, the 65xxx are a better choice.

Yeah, and "loading" a sprite into OAM takes forever on such a puny CPU. So we got to make sure we load AROUND sprites in the OAM that aren't moving, or are moving only in one axis.

At least that's what he meant by bullshit.

"Comparing processors by their clock frequency is just like Sonic being depicted by SEGA with blue arms: BULLSHIT!"

-Unreleased episode of Penn & Teller: Bullshit

I can't stand it when I see somebody pointing at something that is clearly just line scrolling in a Sega Genesis game and they're like "wow, the Sega Genesis can do 3D with its faster processor." It's the same thing as the backgrounds in Donkey Kong Country 2, where they move the backgrounds every scanline to fake parallax scrolling. The 68000 isn't drawing everything pixel by pixel.

psycopathicteen wrote:

I can't stand it when I see somebody pointing at something that is clearly just line scrolling in a Sega Genesis game and they're like "wow, the Sega Genesis can do 3D with its faster processor." It's the same thing as the backgrounds in Donkey Kong Country 2, where they move the backgrounds every scanline to fake parallax scrolling. The 68000 isn't drawing everything pixel by pixel.

On the other hand, it feels like the Mega Drive's 68000 is the best processor when talking about 3D polygon software rendering. Not that the SNES is incapable of doing that or anything.

Well, I would make a demo of StarFox running on a stock SNES if I wasn't already working on so much other stuff.

psycopathicteen wrote:

Well, I would make a demo of StarFox running on a stock SNES if I wasn't already working on so much other stuff.

You shouldn't do it if the goal is just to prove a point anyway. It's already hard enough to keep motivated when working on things we actually want to make.

I find the whole math behind 3D polygons a bit too hard TBH, so I don't think I could ever code something like that, especially considering the textured polygons, but I'd be very interested in seeing how fast the SNES would be able to handle Star Fox without the Super FX. I do think it would do worse than the Genesis though.

I think you can start by making an RLE decoder, and then work backwards.

On the SNES vs the Genesis doing 3D rendering, the graphics format probably is the most limiting factor. Of course, you can use Mode 7, but then your rendering area is very limited. Really though, a size much bigger than this will lead to garbage framerates regardless of the graphics format. The SNES port of Wolfenstein is really underwhelming for the hardware, but so were 90% of games in the console's early lifetime.

psycopathicteen wrote:

It's the same thing as the backgrounds in Donkey Kong Country 2, where they move the backgrounds every scanline to fake parallax scrolling.

Donkey Kong Country 2 actually does it better than 90% of games though, as it doesn't just shear the layer horizontally, but also stretches/shrinks the layer vertically. I've noticed that while it's fairly rare for SNES games to do this, you almost never see it for the Genesis, likely due to having the line scroll table instead of HDMA.

Punch wrote:

"Comparing processors by their clock frequency is just like Sonic being depicted by SEGA with blue arms: BULLSHIT!"

I don't think I've ever seen Sonic with blue arms. However, they did decide to give him blue legs.

TOUKO wrote:

http://www.sega-16.com/forum/showthread ... (or-others)

Sega16 Stef (the same Stef as here?) wrote:

the GBC Z80@2 mhz slower than the 6502 @1.79 Mhz ?!? No way...

What the actual fuck Stef.

Using Mode-7 as the buffer, there are probably ways of recycling blank or solid colored tiles, but I would need to think a lot about it.

I have also thought about representing 2 colors per 8x8 tile, and shrinking the mode 7 layer down to a 256x128 area, and using the tile map as a pattern map, but a 256x128 window doesn't look that good. Now that I think about it, you can take the 256x128 area, stretch it to 192 and put it into interlace mode.

psycopathicteen wrote:

but a 256x128 window doesn't look that good.

Dude, this is the SNES we're talking about.

I wasn't even aware that size was possible using Mode 7, because if it is, the graphics format basically isn't a problem.

Not normally, but if every 8x8 tile consists of 2 solid colored halves, then you can pull off a 4bpp packed pixel 256x128 window.

psycopathicteen wrote:

4bpp

Oh...

Star Fox only uses 16 colors for polygons.

Espozo wrote:

I don't think I've ever seen Sonic with blue arms. However, they did decide to give him blue legs.

psycopathicteen wrote:

Not normally, but if every 8x8 tile consists of 2 solid colored halves, then you can pull off a 4bpp packed pixel 256x128 window.

In other words, you can use the S-CPU to render into what is essentially an untiled version of Mega Drive VDP format, and use the result as your tilemap.
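
A small Python sketch of how I read this scheme, with assumptions stated up front: each of the 256 Mode 7 tiles is taken to have a left half in the colour given by the high nibble of its tile number and a right half in the colour given by the low nibble (the nibble order is my assumption, chosen to match the Mega Drive's left-pixel-in-high-nibble layout). The names `make_tiles` and `pack_row` are hypothetical.

```python
def make_tiles():
    """Build 256 8x8 tiles: left 4 columns use the high nibble, right 4 the low nibble."""
    tiles = []
    for index in range(256):
        left, right = index >> 4, index & 0x0F
        row = [left] * 4 + [right] * 4
        tiles.append([row[:] for _ in range(8)])
    return tiles


def pack_row(pixels):
    """Pack a row of 4-bit colour indices (even length) into tilemap bytes.

    Each byte is one tilemap entry and therefore two on-screen pixels,
    assuming the layer is scaled so a tile covers 2x1 screen pixels.
    """
    return bytes((pixels[i] << 4) | pixels[i + 1] for i in range(0, len(pixels), 2))


tiles = make_tiles()
map_row = pack_row([1, 2, 0xF, 0])
```

Under these assumptions a 256-pixel scanline packs into 128 bytes, which is exactly one row of the 128-entry-wide Mode 7 map, so the renderer only ever writes map bytes and never touches tile data.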

Only catch is, you don't have any space left over for any other colours in CGRAM. Unless you use direct colour mode for the Mode 7 layer, and since you're rendering to the map and not the tiles, there's no reason you couldn't do this. It just limits you to 8-bit RGB, which is kinda bad, but eh... EDIT: see below.

Furthermore, 256x128 is the absolute limit of what you can cover with 16x16 sprites, meaning you can't do seamless backdrop tilting or even scroll unless you use 32x32 - or possibly 16x32...? The non-square PAR might let you get away with more vertical skew than horizontal, but then it wouldn't match the rendered layer unless you applied a transform to one of the axes every time you computed a projection, which would be expensive, and hidden penalties like that are best avoided if you're trying to prove something about CPU power... maybe the Corneria background wouldn't be all that bad; it doesn't have much in the way of verticality...

...just thought of something: I believe the MD Star Fox mockup uses column scroll to tilt the rendered layer, in order to avoid the extra rotation transform. Technically tilting a Mode 7 layer should be trivial, but if you're already at 256x128 there's no wiggle room...

One final issue is that if you're rendering into Mode 7, you can't use the PPU multiplier during the display period. This could be a serious obstacle to doing decent 3D on a stock SNES. I haven't looked into this much, but I think there are a bunch of tricks that have been used in the past to do fast bitplane rendering of polygons on a 65xx. If you can render more than one or two pixels at a time, because most polygons are untextured, the bitplane format isn't such a crushing disadvantage. Plus you can set up the VRAM gate to take linear bitplane and distribute it into tiles in VRAM, so you don't need to worry about tiled rendering either way...

...

I actually used this same packed-tile Mode 7 idea for a background for my port, except I used it as a compression technique. With a brute force optimizer in Matlab, plus a bit of hand-tuning, I managed to get a 128x128 image with over a hundred colours into 24 KB of VRAM (well, 12 KB of tile data and 8 KB for the map). The result is virtually indistinguishable from the uncompressed image, and as a bonus, rotation is a lot smoother at the same zoom level, because it's basically doing sub-texel positioning.

Unfortunately, the success of this compression method seems to be highly material-dependent. I tried it again on a different image (a larger one - 128x192) and had to back it all the way down to 14 colours to fit it in 192 tiles with acceptable levels of artifacting.

Why is CGRAM a problem? Only the first 16 colors are needed, so the rest can be used for sprites. You're right that I might need the Mode-7 multiplication registers.

It's not. I must have been thinking of my other scheme, which was to draw the tiles themselves in packed-pixel format and blend the 16 colours into 256 combinations so it would still work. The result would be blurry but potentially serviceable.

Your way's better.

I once heard of a trick called XOR filling, which is to just draw dots at the top and bottoms of polygons, and XOR each group of pixels with the group above them. I think it can aim for 256x192 at 20fps.
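
For anyone who hasn't seen it, here is a minimal Python sketch of the XOR fill idea on a 1-bit, 8-pixel-wide buffer where each row is one integer. It assumes the "bottom" dot is placed one row below the last filled row, so the running XOR switches the column off again; `mark_column` and `xor_fill` are hypothetical names, and this says nothing about the speed claim.

```python
WIDTH = 8  # pixels per row in this toy buffer


def mark_column(rows, x, top, bottom):
    """Toggle a start dot at `top` and a stop dot just below `bottom` in column x."""
    bit = 1 << (WIDTH - 1 - x)
    rows[top] ^= bit
    rows[bottom + 1] ^= bit  # assumes bottom + 1 is still inside the buffer


def xor_fill(rows):
    """Running XOR down the buffer: each row becomes itself XOR the filled row above."""
    filled = []
    acc = 0
    for row in rows:
        acc ^= row
        filled.append(acc)
    return filled


# A small diamond-like shape: only the edge dots are drawn, the fill does the rest.
rows = [0] * 6
mark_column(rows, 1, 2, 2)
mark_column(rows, 2, 1, 3)
mark_column(rows, 3, 0, 4)
filled = xor_fill(rows)
```

The appeal is that the fill pass touches whole groups of pixels at once (here 8 per XOR) and costs the same no matter how many polygons were marked.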

Yeah, I seem to recall something about that from a discussion about polynes.

Also, I found this:

http://codebase64.org/doku.php?id=base:6502_6510_maths

in which is found this:

http://codebase64.org/doku.php?id=base: ... he_vectors

I don't know if it's the fastest way, but it appears to claim to do something similar to your goal in a reasonable amount of time.

One might also want to take a look at what Overdrive 2 is doing in that polygonal section near the end. I think there's a video; they've got some shenanigans going on that might be conceptually useful...

tokumaru wrote:

Those demos were helped a lot by the chunky display that the MD has, but I agree a 2.68 MHz 65816 cannot do a 3D game like the 7.6 MHz 68k can.

Wolfenstein on the Apple IIGS trounces the MD version, and this computer has no hardware acceleration at all such as sprites, DMA, or background layers.

But the IIGS has an 8/10 MHz 65816 accelerator; however, it accesses its "video RAM" at 1 MHz (Apple II compatibility).

https://www.youtube.com/watch?v=E3uHip1Qr-4&t=263s

But with a planar format, even (IMO) a 10 MHz 65816 cannot work miracles.

Toy Story on SNES did a much better job with ray casting, although they were helped by Mode-7's packed pixel format, and vertical mirroring. Too bad they had to make the screen smaller to fit within 256 tiles.

https://www.youtube.com/watch?v=AuvcCvRMbU8

Yes, but it's not 100% software; I think Mode 7 must be used for zooming.

Zooming?

I think that's the first time I've seen that 3D segment from Toy Story on the SNES. What's really impressive is how smooth it runs; looks like it even runs at a higher framerate than the Genesis version. Of course, the resolution is lower, so there's that.

The people in the comment section are crazy; the Genesis version looks better but the SNES version sounds better? Wtf? I like the dark colors of the Genesis version, but I don't think that makes it more technically impressive. The SNES version sounds like hot garbage; the only part that sounds clearer is the "hello" voice sample, but it sounds nothing like the aliens from the movie. Sounds like people are giving pre-programmed responses based on "knowledge" of the hardware.

The SNES version has more colors onscreen. I don't think it's zooming in, because it looks as sharp as the Sega version, just with a smaller screen.

I like the "preprogrammed responses" comment. It's like how Thunder Force 3 runs at the same speed on both SNES and Sega Genesis and people think the Genesis version is twice as fast. I've seen slowdown on both versions of the game, and they're pretty much in the same places.

Quote:

because it looks as sharp as the Sega version, just with a smaller screen.

Yes, I have also noticed that it's not as pixelated as usual with Mode 7.

It's really good if all the rendering is done by the CPU and Mode 7 is only there to provide a "chunky" display.

Thunder Spirits on SNES is really badly coded; it slows down for no reason. In the space level there are a lot of things on screen, and it doesn't slow down that much compared with the first levels.

https://youtu.be/yiFV_-ENxjc?t=9m18s

The weird thing is that the frame rate only drops a little bit, like it's running at 45 fps.

Besides the whole CPU speed comparison stuff, I see the same type of "logic" in everything including college academia. College Professors either just believe what other people with PHDs told them, or make shit up without any kind of experimentation or anything. I really don't give a damn what kind of nonsense is on "US department of education" website. Everything is just circular reporting.

I don't think actual logic is the issue. It sounds to me like what people call logic isn't really logic, which is an issue.

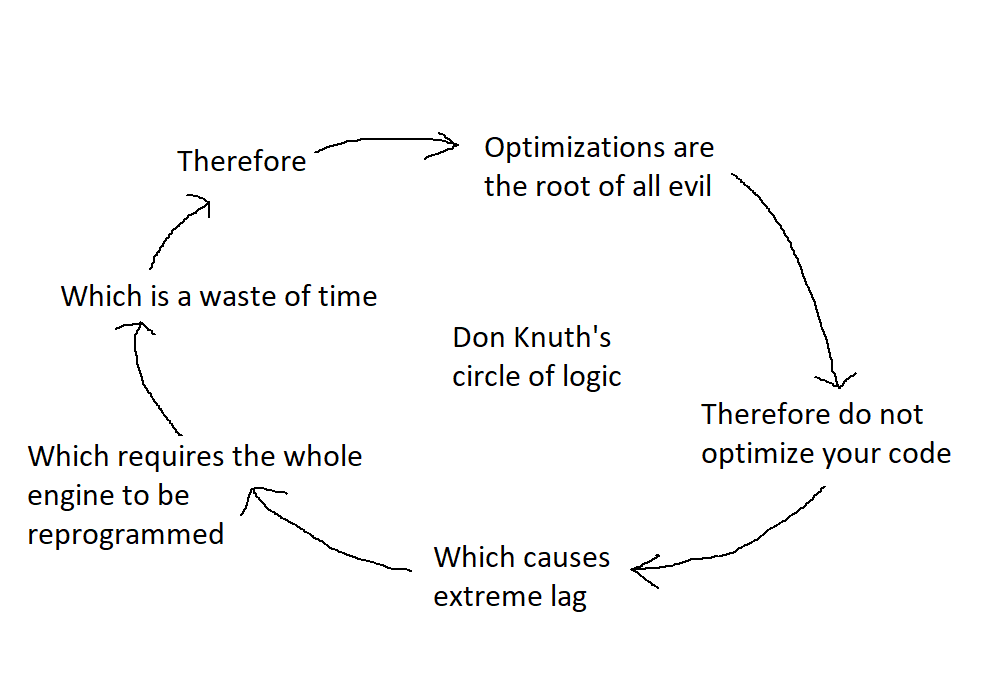

Here is some more "logic".

Attachment:

don knuth's circle of logic.png [ 22.26 KiB | Viewed 1589 times ]

You're kind of wildly misrepresenting what Knuth actually said:

Quote:

We should forget about small efficiencies, say about 97% of the time: premature optimization is the root of all evil. Yet we should not pass up our opportunities in that critical 3%.

In other words, optimize when it actually matters, and be able to recognize when it doesn't.

It would've made more sense if he said "unnecessary optimization" instead of "premature optimization". "Premature" sounds like you shouldn't make any optimizations early on.

But Knuth is speaking out against a particular form of unnecessary optimization. The idea is that it's hard to predict in advance what parts of your code will be the bottleneck (and sometimes even once you've written your code it's hard to know where the bottleneck is without profiling), so don't expend lots of effort micro-optimizing stuff and making it harder to maintain before (i.e. prematurely) you have an idea if that's worth doing.

At risk of appealing to authority here, you're almost certainly not smarter or more experienced (or better at choosing words, for that matter) than Donald Knuth. That doesn't mean smart people can't make mistakes or anything, but you probably ought to apply the principle of charity and try to listen to and understand what smart people are saying before attributing the most banal and trivially incorrect possible reading to it so as to dismiss it out of hand.
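To make the "measure before you micro-optimize" point concrete, here is a minimal Python sketch. The stage names (`load_level`, `collide_all`) and the helper `profile_stages` are hypothetical, made up for illustration; they are not from Knuth's paper or from any post here. The only idea shown is timing each stage first and then looking at which one actually dominates.

```python
import time
from collections import defaultdict


def profile_stages(stages, repeats=3):
    """Run each (name, func) pair and return total seconds spent per name."""
    totals = defaultdict(float)
    for _ in range(repeats):
        for name, func in stages:
            start = time.perf_counter()
            func()
            totals[name] += time.perf_counter() - start
    return dict(totals)


def load_level():
    """Hypothetical stage that might look expensive but is linear and small."""
    return [i * 2 for i in range(1_000)]


def collide_all():
    """Hypothetical stage: naive all-pairs check, quadratic in object count."""
    pts = range(300)
    return sum(1 for a in pts for b in pts if a != b and abs(a - b) < 2)


def slowest_stage(totals):
    """Name of the stage with the largest accumulated time."""
    return max(totals, key=totals.get)


if __name__ == "__main__":
    totals = profile_stages([("load_level", load_level),
                             ("collide_all", collide_all)])
    for name, seconds in sorted(totals.items(), key=lambda kv: -kv[1]):
        print(f"{name:12s} {seconds * 1000:8.3f} ms")
    print("optimize first:", slowest_stage(totals))
```

In a toy like this the quadratic stage is easy to guess; the measurement matters more in real programs, where the expensive stage is not always the one you expected.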

That's another thing that bothers me. I hate coming across as a know-it-all if experts in a field want me to believe something that doesn't add up, or sounds politically biased. If I find just one flaw in somebody's logic, people might think I'm a jerk who thinks he's smarter than everyone else, and I just want things to make sense.

psycopathicteen wrote:

It would've made more sense if he said "unnecessary optimization" instead of "premature optimization". "Premature" sounds like you shouldn't make any optimizations early on.

No, that's not his point at all. Everything he talks about in the context of that quote applies only to early optimization, and the accumulated effect it then has over the course of the project.

"Avoid unnecessary optimization" would be an unhelpful platitude. Knuth's advice is offering his intuition based on his experience: that mistakes made due to optimizing early are overall more harmful than the mistakes made by not optimizing early enough. It's advice to try to recognize when you're making an early decision like this, consider its long term implications, and if unsure he would probably want to err on the side of optimizing too late.

The reason people optimize early is to avoid optimizing too late: the case where you have to rewrite/refactor a bunch of stuff because the structure of things made it impossible to fix locally. That case is something real to worry about, but Knuth was warning against the long term impact of doing it too early, which in his experience was often overlooked. Over the long term code has to be revised many times, and requirements frequently change, and optimization has a tendency to make code both more difficult to maintain and more specialized in its requirements. On the other hand, most late optimization opportunities are easy local fixes, so the rewrite case is rarer, and even then the time spent in a rewrite might be comparable to the time spent in added maintenance if it had been done earlier.

That's the perspective he's trying to share, and it would not at all be intimated by warning merely about "unnecessary" optimization.

It's not at all a statement that you should never optimize early. It is an opinion about which category of mistake is most important to avoid, advice from a seasoned professional about how to weigh hard decisions in code design, not some sort of dogmatic rule.

...and just in case it's not clear, it is talking about a specific kind of optimization: something that makes code faster but more complex. Not all optimization falls into this category, but almost all optimization that a programmer needs to make difficult/critical decisions about does.

If you'd like to see its actual context, one of the cited versions of the idea from that wikiquote link is this 1974 paper, about GOTO but also touching on a bunch of general programming issues:

http://web.archive.org/web/201307312025 ... -knuth.pdf

Man, this Knuth quote has regularly been getting on my nerves, and I already wrote my thoughts about it on the 6502.org forums, so I'm not going to do this again; I'll just quote myself.

On 6502.org, Bregalad wrote:

I absolutely hate those one-line so-called "rules". They make absolutely no sense, as if you could summarize good and bad programming practice in 5 (or so) lines.

It depends so much on the context: whether you are in education or industry, whether you are writing for your hobby or just because you're paid to do so, whether you have deadlines or not, whether you want to make elegant code. And of course, even if in theory the programming language is not related to programming in itself, in practice it is, so yes, it will depend on the programming language.

It is absolutely wrong to say you can't know where a program is going to spend its time. In fact, if you have half a brain you can guess it very easily. The only exception is if you are doing system calls and have no idea what the library will do behind them. That may pretty much always be the case for people programming in very high-level languages, where they have no idea what they're actually doing on the machine.

And, the sentence, "premature optimisation is the root of all evil" is simply stupid and was probably a dumb joke, but people took it seriously.

I think it would be trivial to find one evil which is not due to premature optimisation. For instance, nazism was not a premature optimisation. Enough said.

Now let's pretend "premature optimisation is the root of all bad programming practice (aka "evil")". Again this is wrong. Some random bad programming practices that come to mind:

- Bad indentation

- Variable names that make no sense or aren't related to the variable's purpose

- Function names that make no sense or [...]

- Not commenting what a function expects as input and output

- Writing too much stuff on one line, like: while (--i != function_call(arg1++, arg2))

- Copy-pasting a function and making it only slightly different, instead of using an extra argument

None of those bad practices are premature optimisation, so again this sentence is plain wrong.
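The last two items in that list can be illustrated with a small Python sketch. All names here (`move_left`, `move_right`, `move`, `count_down_until`) are hypothetical and invented for the example; the second half simply spells out the dense C one-liner from the list one step per line, assuming its usual C meaning (pre-decrement `i`, pass the old `arg1`, then increment it).

```python
# Before: two copy-pasted functions that differ in a single sign.
def move_left(x, speed):
    return x - speed


def move_right(x, speed):
    return x + speed


# After: one function, with the difference passed in as an extra argument.
def move(x, speed, direction=1):
    """Return the new position; direction is +1 (right) or -1 (left)."""
    return x + direction * speed


def count_down_until(i, check, arg1, arg2):
    """Spelled-out equivalent of: while (--i != check(arg1++, arg2)) ;"""
    while True:
        i -= 1                       # --i
        result = check(arg1, arg2)   # call with the current arg1...
        arg1 += 1                    # ...then arg1++
        if i == result:              # the loop stops once both sides match
            return i, arg1
```

Neither rewrite is faster or slower in any way that matters; the gain is only that each line does one thing and the near-duplicate function disappears.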

Now let's dumb it down to "premature programming optimisation is the root of some bad-programming practice (aka "evil")"

Then finally this is true, of course (at last). If you want to make a super optimal program before writing it, there is a higher probability you'll write spaghetti code and fail, or simply lose interest in continuing it because it's too much effort (if you're a hobbyist).

However, completely ignoring any kind of "optimisation" when programming is not a very good practice either. You should really think about the proper data structures, so that you'll save time and not have to say "oh, if I use structure Y instead of structure X, my program could be 10x faster" and rewrite everything.

So my advice is to optimize data structures first, then write clear, commented code (having it "optimized" is OK as long as it doesn't hurt comprehension), and finally make a few optimizations that could hurt comprehension of the code, if needed.
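As a minimal sketch of the "structure Y instead of structure X" situation, here is the same routine written against a list and against a set in Python. The function names are hypothetical. Both versions return the same answer; the difference is that membership in a list is a linear scan while membership in a set is a hash lookup (constant time on average), so the choice of structure decides how the routine scales.

```python
def first_repeat_list(values):
    """Return the first value seen twice, tracking seen values in a list."""
    seen = []
    for v in values:
        if v in seen:        # linear scan of the list on every lookup
            return v
        seen.append(v)
    return None


def first_repeat_set(values):
    """Same result, tracking seen values in a set."""
    seen = set()
    for v in values:
        if v in seen:        # hash lookup, constant time on average
            return v
        seen.add(v)
    return None
```

Nothing else in the code has to be clever for the second version to win on large inputs, which is why picking the structure early is cheap insurance.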

Also, optimising non-bottlenecks is not a crime. It's just a waste of your time in the worst case, but it won't hurt performance in any way. Don't get me wrong: optimising bottlenecks is better, but that doesn't make optimising the rest wrong. There are plenty of situations where measuring the bottleneck directly is simply impossible or terribly difficult, so you'll have to resort to cheap heuristics.

Conclusion: I think, paradoxically, those dumb one-liners, and people citing them as if they were the Bible, are the reason there are so many bad programmers around. In reality, most of the approach depends on the context (embedded or not, hobby or work) and the programming language. Just don't trust one-liners without thinking.

I'd say : "Dumb one-liners are the root of all evil".

Quote:

The idea is that it's hard to predict in advance what parts of your code will be the bottleneck

Wrong, in most cases it's actually very easy.

Bregalad wrote:

I absolutely hate those one-line so-called "rules". They make absolutely no sense, as if you could summarize good and bad programming practice in 5 (or so) lines.

It wasn't a "one line rule" when he said it. I linked the original article it appeared in above.

It is not at all intended as a "rule" against ever optimizing early. It's only the idea that the mistakes of the worst magnitude come from doing so. It's a thing to remember when you're making a difficult decision about optimization, not when the decision to optimize is easy.

In fact, directly preceding the quote in that article, he talks about some cases where you should routinely try to optimize immediately. In context he's not saying anything of the sort you are attributing to it. It's a small part of a much larger, and very practical discussion about optimization (and the point of the article: the place of GOTO in optimization circa 1974).

Maybe the quote is commonly being misused, misapplied, or misinterpreted, I don't know (psycopathicteen's diagram is absurd)... but the point of a quote is supposed to stand in for the larger idea, not encapsulate it entirely. (This is precisely why Twitter is so good at fostering arguments; everything is reduced to a "quote" size that can't possibly mean all that it needs to.)

psycopathicteen wrote:

That's another thing that bothers me. I hate coming across as a know-it-all if experts in a field want me to believe something that doesn't add up, or sounds politically biased. If I find just one flaw in somebody's logic, people might think I'm a jerk who thinks he's smarter than everyone else, and I just want things to make sense.

I can sympathize with this. I've found that an effective remedy is to approach an argument objectively, and not in a way that is hostile or certain. If you believe you see a flaw in someone's argument, all you have to do is calmly walk through the points of that argument and attempt to follow it through to conclusion. You will almost certainly find a point in the argument you cannot move past, either because you do not understand that point, or that point in the argument is invalid/unsound. Alternatively, you follow it through to conclusion and find it makes sense after all.

Sometimes you need to walk someone else through their own argument and highlight the point in question, prefacing it all with "Perhaps I'm misunderstanding, but..." or "I could be wrong, but...". If you're prefacing your arguments with things like that, people won't think you're a jerk; you're just trying to understand. It's all about defusing the sense of confrontation, shifting the purpose away from "winning the argument", and more towards "coming to an understanding".

rainwarrior wrote:

Bregalad wrote:

I absolutely hate those one-line so-called "rules". They make absolutely no sense, as if you could summarize good and bad programming practice in 5 (or so) lines.

It wasn't a "one line rule" when he said it. I linked the original article it appeared in above.

It is not at all intended as a "rule" against ever optimizing early. It's only the idea that the mistakes of the worst magnitude come from doing so. It's a thing to remember when you're making a difficult decision about optimization, not when the decision to optimize is easy.

In fact, directly preceding the quote in that article, he talks about some cases where you should routinely try to optimize immediately. In context he's not saying anything of the sort you are attributing to it. It's a small part of a much larger, and very practical discussion about optimization (and the point of the article: the place of GOTO in optimization circa 1974).

Maybe the quote is commonly being misused, misapplied, or misinterpreted, I don't know (psycopathicteen's diagram is absurd)... but the point of a quote is supposed to stand in for the larger idea, not encapsulate it entirely. (This is precisely why Twitter is so good at fostering arguments; everything is reduced to a "quote" size that can't possibly mean all that it needs to.)

What page of the article is the quote on?

If he is talking about structural optimizations, what does he mean by "small inefficiencies"?

Quote:

What page of the article is the quote on?

I think it's on page 268.

Espozo wrote:

TOUKO wrote:

http://www.sega-16.com/forum/showthread ... (or-others)

Sega16 Stef (the same Stef as here?) wrote:

the GBC Z80@2 mhz slower than the 6502 @1.79 Mhz ?!? No way...

What the actual fuck Stef.

Just to reply on that point: we were speaking about "external" frequency, so by saying "the GBC Z80@2MHz" we meant 8 MHz as the internal frequency (the GBC CPU is 4 or 8 MHz)... you should read the post more carefully.

After that, maybe you really do think that a 6502@1.79 MHz beats the GBC CPU @8 MHz (as tomaitheous seems to think).

Still, I'm surprised to see that kind of discussion still alive here.

If it can push you to make some awesome demos for the SNES, then that's all good.

If you really want to compare what each CPU can do, I think the 3D level in Toy Story is a good comparison.

Both versions are definitely awesome and were programmed by very talented developers, and I bet we could hardly do better than what you can see here...

They used Mode 7 on the SNES so they could use chunky pixels as well. In fact the SNES version is really impressive; still, the resolution is lower than the Genesis version, the view window is smaller, and the H resolution is doubled (better to use an emulator to compare). You can draw your own conclusions...

I know it won't change the point of view of some of you, and I don't really care anyway.

Stef wrote:

After that, maybe you're really thinking that a 6502@1.79 Mhz beat the GBC CPU @8 Mhz (as tomaitheous seems to think)

I've concluded over recent discussions that between the two, the NES's 6502 might win at random access, such as properties of an enemy in physics or AI code or nested metatile decoding. But the GBC's LR35902 wins at sequential access because of things like (HL+) and INC DE, not to mention HDMA to VRAM in the support circuitry.

Bregalad wrote:

Quote:

The idea is that it's hard to predict in advance what parts of your code will be the bottleneck

Wrong, in most cases it's actually very easy.

Some games are programmed so badly, that every piece of code is a bottleneck.

I don't know much about the plain old 6502 and I know even less about the Z80, but a 6502@1.79MHz beating a Z80@8MHz sounds even more ludicrous than a Z80@2MHz beating a 6502@1.79MHz. Sorry I misinterpreted your post Stef; yeah, that's ridiculous.

I agree with what you said about the 3D section in Toy Story; they both probably just about showcase the best each processor can do, or at least close to it. Don't expect this kind of fanboy war to ever go away; I can't say it never gets on my nerves though. However, most of the time I'll actually just bring up relevant technical information and everyone just shuts up.

tepples wrote:

Stef wrote:

After that, maybe you're really thinking that a 6502@1.79 Mhz beat the GBC CPU @8 Mhz (as tomaitheous seems to think)

I've concluded over recent discussions that between the two, the NES's 6502 might win at random access, such as properties of an enemy in physics or AI code or nested metatile decoding. But the GBC's LR35902 wins at sequential access because of things like (HL+) and INC DE, not to mention HDMA to VRAM in the support circuitry.

Are you still comparing the 1.79 MHz 6502 with the 8 MHz GBC CPU? There is absolutely no chance the 6502 can win against it. But if you compare it to the 4 MHz one, well... the 6502 may provide faster random access, but in the end the bottlenecks always remain in sequential processing code (where you have loops), so you tend to arrange your data structures around the CPU's strengths, and the winner is the CPU which can process more data in the same amount of time. Difficult to say which one that is here...

The thread you started was not about whether a 1.79 MHz 6502 could compete with an 8 MHz Z80 (because it obviously cannot) but "Let's compare 65x CPU architecture vs 68000 (or others)".

I'm even surprised that you chose a 1.79 MHz 6502 vs an 8 MHz Z80 just to be sure of being right.

The HuC6280 literally destroys your 8 MHz Z80 even with your crappy code examples, and that's a fact. Why don't you take it into account? Not so easy here, right?

Your code comparison was also hilarious: you wrote some bad examples with bad 6502 code and generalized the results to the whole processor family, which is utterly false, but easy when no one contradicts you. And of course you launched the comparison thread on a Sega forum, the best place for this, without any doubt.

When you want a 68K or Z80 vs 65xx comparison, what better place than a 65x forum?

Why not here, where some people can contradict your arguments?

Ah yes, you told me that there are only fanboys here.

Quote:

They used mode 7 on SNES so they can use chunky pixel as well. In fact the SNES version is really impressive still the resolution is lower than the Genesis version, the window view is smaller and the H resolution is doubled (better to use emulator to compare). You can make your own conclusions...

Of course, and it's not in your favour: it shows that a 2.58 MHz 65816 can do a raycaster close to that of a 7.67 MHz 68K, and a single-phase custom 65816 at 5 MHz, which would not require changing anything else in the system (as you like to say on a French forum), would crush the 68K in this exercise.

What are you talking about? I didn't want to compare the 6502@1.79 MHz with the GBC CPU @8 MHz in the first place; I was just replying because I was quoted on something which could be misinterpreted...

Quote:

The hu6280 destroy litteraly your 8mhz Z80 even with your crappy code examples, and it's a fact, why you don't take this in account ??,not easy here right ??

Again... what are you talking about? Of course the HuC6280 would destroy an 8 MHz Z80, I have no doubt about that either... where did I say the opposite?

Quote:

Your comparison with code was also hilarious,you code some bad exemple with bad 6502 code, and you summarise the results with all the processors serie,which is utterly false,but easy with no one who contradicts you, eh, be sure to lunch a comparison thread on a sega forum, the best place for this, without any doubt .

Why not here,were some people can contradict your arguments ??

And you are the one bringing the subject back... I don't want to start the war over it again, but honestly I have absolutely no problem discussing and debating it wherever you want :p

Again, I really don't care about convincing you; you're free to believe whatever you want. Myself, I don't need to believe anything, as I just know it :p

Quote:

And you are the one bringing the subject back... i don't want to open the war again about it but honestly I've absolutely no problem to discuss and debate about it wherever you want :p

But you launched it on sega-16, so why not here?

And it's not a war; I think we are grown-up enough to be civil even in a CPU comparison (I know that's a little bit hard with you).

You can post your code examples here and we can discuss them. It's not a fight; it will just be less easy than on sega-16 to post bullshit.

Quote:

Again i really don't care about convincing you, you're free to believe whatever you want.

... I don't need to believe, i just know

And I don't care either, because I know you are only a fanboy convinced he is right in every case, and that makes it really boring to discuss with you, because you're not able to admit when you're wrong even when most people tell you so.

Good night my friend

Doing it here would sound like a provocation, and honestly I think I already did, no? We've already had some talks here about it at least...

Stef wrote:

the H resolution is doubled

Isn't that because the MD can write two 4-bit pixels in one shot, rather than one 8-bit pixel? IIRC it's only doing raycasting for every pair of columns.

You can do the same thing in Mode 7, by using tiles for pixel pairs and drawing the graphics to the tilemap. But it looks like they didn't do that.
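The "two 4-bit pixels in one shot" idea can be sketched like this. It is a minimal Python illustration, not code from either version of the game; the function names are hypothetical, and it assumes a packed 4bpp layout in which the high nibble of each byte holds the left pixel.

```python
def pack_pixel_pair(left, right):
    """Pack two 4-bit colour indices into one byte (left pixel in the high nibble)."""
    return ((left & 0x0F) << 4) | (right & 0x0F)


def doubled_column_byte(color):
    """One raycast column drawn two pixels wide costs a single byte write."""
    return pack_pixel_pair(color, color)


def render_row(column_colors):
    """Build one row of a column-doubled view: one byte per raycast column."""
    return bytes(doubled_column_byte(c) for c in column_colors)
```

With this layout a row that is N raycast columns wide takes N byte writes and covers 2N pixels, which is the trade-off being discussed: half the horizontal detail for half the writes.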

The trouble with comparing multiplats is that it's almost impossible to get a real apples-to-apples comparison. Even if the same developer did both, and put the same level of effort into both, it's likely that their level of familiarity with each system and CPU was different, and the SNES was inarguably harder to develop for and had a less popular CPU. (And indeed, it looks like TT had way more experience with the MD at the time.) You'd have to put together a systematic bipartisan effort to come up with an optimal implementation on both consoles, and even then it would be difficult to eliminate all such lingering questions...

Some years ago a Spectrum guy compared the C64's 6502 at 1 MHz with the 3.5 MHz Z80 in the Spectrum.

The two CPUs were close, and overall the Z80 was ahead (not by far) thanks to its frequency.

But if his tests had been done with the NES's or A8's CPU (which run at 1.79 MHz), there's no way a 3.5 MHz Z80 would win.

It's common for the Z80 side to pick the slowest 6502 computer for comparison, like Stef did when comparing the 65xx with other architectures, and then letting his bias push him to do this comparison on a Sega forum.

@Stef: if you want to discuss how efficient the 6502 architecture is (or isn't), you must speak with GARTH WILSON.

TOUKO wrote:

I'm even surprised that you choose a 1.79 mhz vs a 8mhz Z80 to be sure to be right .

The hu6280 destroy litteraly your 8mhz Z80 even with your crappy code examples, and it's a fact, why you don't take this in account ??,not easy here right ??

It's "not easy here" to obtain a HuC6280-based console in the first place. Plenty of stores that offer used NES, GBC, and TI-83 units for sale have no used TG16.

Why does that even matter?

If you have a solid interest in classic video games you should have a PC Engine, it doesn't matter that US stores rarely sell it.

And even if you don't have one, that doesn't change the fact that the CPU exists, which was the only preface to TOUKO's comment.

I think comparing CPUs only matters when designing *new* hardware, and then availability and price are going to outweigh performance as long as you can do what you set out to do with it. When it comes to designing software (homebrew/game), the most important factor to me would be who's going to be able to use/play it.

PC Engine and MSX are special cases then because they're interesting enough in their feature sets that i'd be interested in making something despite that only a subset of game collectors have them.

tepples wrote:

TOUKO wrote:

I'm even surprised that you choose a 1.79 mhz vs a 8mhz Z80 to be sure to be right .

The hu6280 destroy litteraly your 8mhz Z80 even with your crappy code examples, and it's a fact, why you don't take this in account ??,not easy here right ??

It's "not easy here" to obtain a HuC6280-based console in the first place. Plenty of stores that offer used NES, GBC, and TI-83 units for sale have no used TG16.

Sorry, maybe I did not use the right expression; what I meant by "not easy here" was rather "it's easier to claim that a Z80 is more powerful than a 6502 than to claim it's more powerful than a HuC6280".

Quote:

And even if you don't have one, that doesn't change the fact that the CPU exists, which was the only preface to TOUKO's comment.

Yes, that was my point, but maybe it was not well translated into English; I apologise.

Here many people know the 6502 and some know the 65816; ccovell, tomaitheous and I (and maybe others) know the HuC6280.

rainwarrior wrote:

psycopathicteen wrote:

It would've made more sense if he said "unnecessary optimization" instead of "premature optimization". "Premature" sounds like you shouldn't make any optimizations early on.

No, that's not his point at all. Everything he talks about in the context of that quote applies only to early optimization, and the accumulated effect it then has over the course of the project.

"Avoid unnecessary optimization" would be an unhelpful platitude. Knuth's advice is offering his intuition based on his experience: that mistakes made due to optimizing early are overall more harmful than the mistakes made by not optimizing early enough. It's advice to try to recognize when you're making an early decision like this, consider its long term implications, and if unsure he would probably want to err on the side of optimizing too late.

The reason people optimize early is to avoid optimizing too late: the case where you have to rewrite/refactor a bunch of stuff because the structure of things made it impossible to fix locally. That case is something real to worry about, but Knuth was warning against the long term impact of doing it too early, which in his experience was often overlooked. Over the long term code has to be revised many times, and requirements frequently change, and optimization has a tendency to make code both more difficult to maintain and more specialized in its requirements. On the other hand, most late optimization opportunities are easy local fixes, so the rewrite case is rarer, and even then the time spent in a rewrite might be comparable to the time spent in added maintenance if it had been done earlier.

That's the perspective he's trying to share, and it would not at all be intimated by warning merely about "unnecessary" optimization.

It's not at all a statement that you should never optimize early. It is an opinion about which category of mistake is most important to avoid, advice from a seasoned professional about how to weigh hard decisions in code design, not some sort of dogmatic rule.

...and just in case it's not clear, it is talking about a specific kind of optimization: something that makes code faster but more complex. Not all optimization falls into this category, but almost all optimization that a programmer needs to make difficult/critical decisions about does.

If you'd like to see its actual context, one of the cited versions of the idea from that wikiquote link is this 1974 paper, about GOTO but also touching on a bunch of general programming issues:

http://web.archive.org/web/201307312025 ... -knuth.pdf

Is there any well-known advice on how to predict the maintainability of code? Other than writing notes?

Why aren't CPUs measured by memory bandwidth speed in the first place?

psycopathicteen wrote:

Why aren't CPUs measured by memory bandwidth speed in the first place?

They are.

Example:

https://www.anandtech.com/show/5091/intel-core-i7-3960x-sandy-bridge-e-review-keeping-the-high-end-alive/4

rainwarrior wrote:

psycopathicteen wrote:

Why aren't CPUs measured by memory bandwidth speed in the first place?

They are.

Example:

https://www.anandtech.com/show/5091/intel-core-i7-3960x-sandy-bridge-e-review-keeping-the-high-end-alive/4

I mean on Wikipedia and popular gaming websites.

TOUKO wrote:

A spectrum guy compared some years ago the C64's 6502 @1mhz and the 3.5 mhz in the spectrum .

The two CPU was close,and overal the Z80 was ahead(not by far) by his frequency .

But if his tests were done with the nes or A8's CPU (which run @1.79 mhz) no way for a 3.5mhz Z80 to win .

It's often common for the Z80 side to take the slowest 6502 computer for comparing,like stef did when comparing the 65xx with other architectures,and pushing his biased mind to do this comparison on a sega forum .

@stef: if you want to discuss how the 6502 architecture is efficient(or not), you must speak with GARTH WILSON .

I think talking about the efficiency of a CPU means, more or less, "what can it do with a given memory".

The internal speed of the CPU is just a matter of implementation; what is important is the external/memory frequency... and if we compare on that, a 6502 is definitely not an "efficient" CPU. A 6502@1 MHz is equivalent to a ~3.5 MHz Z80 in terms of external frequency, and I think the 3.5 MHz Z80 can do more than a 1 MHz 6502.

A 1.79 MHz 6502 may be more powerful than a 3.58 MHz Z80, but it requires faster memory, so that is easy.

The same can be said for a 65816 vs a 68000, but the 65816 uses an 8-bit bus, making it incomparable to the 68000 from the start.

If we want to be fairer to the 65816, we would probably allow it to use memory at twice the speed of the 68000's memory (so compare a 65816@3.8 MHz with a 68000@7.67 MHz), but then that becomes unfair to the 68000, which always does 16-bit memory accesses... Still, I believe that a 68000@7.67 MHz is already faster than a 65816@3.8 MHz, which shows how much more "efficient" the 68000 is.
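The "external frequency" comparison above can be checked with a little arithmetic. The Python sketch below is a rough illustration, not a benchmark; the helper name is hypothetical, and it assumes the usual textbook bus timings with no wait states: a 6502 performs one memory access per clock, while a Z80 takes 4 T-states for an opcode fetch and 3 T-states for an ordinary memory read or write.

```python
def accesses_per_second(clock_hz, clocks_per_access):
    """Peak memory accesses per second for a given clock and bus cycle length."""
    return clock_hz / clocks_per_access


# Assumed bus timings (no wait states):
mos6502 = accesses_per_second(1_000_000, 1)      # 6502: one access every clock
z80_fetch = accesses_per_second(3_500_000, 4)    # Z80 opcode fetch: 4 T-states
z80_data = accesses_per_second(3_500_000, 3)     # Z80 data read/write: 3 T-states

if __name__ == "__main__":
    print(f"6502 @ 1 MHz      : {mos6502:>12,.0f} accesses/s")
    print(f"Z80 @ 3.5 MHz     : {z80_fetch:>12,.0f} (fetch) "
          f"to {z80_data:,.0f} (data) accesses/s")
```

Under those assumptions the 1 MHz 6502 lands between the Z80's two figures, which is consistent with treating the two as roughly equivalent in memory speed; it says nothing by itself about how much work each CPU gets done per access.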

Wouldn't a lower internal frequency give you more room for overclocking? How fast can you make a 6502 vs Z80 before it overheats? This isn't relevant to any system during the time though.

Stef wrote:

Same can be said for a 65816 vs a 68000 but the 65816 use a 8 bit bus, making it incomparable to the 68000 CPU from the start.

If we want to be more fair for the 65816 we would probably allow it to use memory at twice the speed of the 68000 memory (so compare a 65816@ 3.8Mhz versus a 68000@7.67 Mhz), but then that becomes unfair for the 68000 which always do 16 bit memory accesses...

It depends; how much would 8 bit memory cost compared to 16 bit memory at half the frequency?

Stef wrote:

Still, i believe than a 68000@7.67Mhz is already faster than a 65816@3.8Mhz, that shows how much the 68000 is more "efficient".

Although I don't have the best knowledge of the processor, even factoring in the increased utility of each instruction, given the number of cycles per instruction I don't see a 7.67 MHz 68000 being better than an always-3.8 MHz 65816 for a 2D game console. Of course, there's no way to prove this, but it's fun to speculate.

Operations where a 4 MHz 65816 would be faster than an 8 MHz 68000:

-branching

-shifting by less than 3

-shifting by 8

-ALU and load/store instructions that use immediate, absolute or indexed addressing (except when "clc" and "sec" are needed)

-non-word aligned memory accesses

Opcodes where an 8 MHz 68000 is faster:

-shifting by more than 3 but less than 8

-ALU and load/store instructions that use registers or register indirect addressing

-ALU instructions that require "clc" or "sec"

-32-bit ALU instructions
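The shift entries in the two lists can be sanity-checked with a small cycle-count sketch. The per-instruction costs are assumptions not given in the thread: 2 cycles per one-bit accumulator shift on the 65816, and 6 + 2n cycles for a word-sized register shift by n on the 68000. Memory operands and 32-bit shifts are left out.

```python
# Rough shift-cost comparison, normalised to 68000 clock ticks.
# Assumptions (not from the thread): a 65816 accumulator shift costs
# 2 cycles per bit, and a 68000 word-sized register shift by n bits
# costs 6 + 2n cycles.

CLOCK_RATIO = 2  # 8 MHz 68000 vs 4 MHz 65816: one 65816 cycle = two 68000 ticks

def shift_cost_65816(n):
    """Cost of shifting the accumulator by n bits, in 68000 clock ticks."""
    return 2 * n * CLOCK_RATIO

def shift_cost_68000(n):
    """Cost of a word register shift by n bits, in 68000 clock ticks."""
    return 6 + 2 * n

def faster_cpu(n):
    """Name the CPU with the lower cost for an n-bit shift, or 'tie'."""
    a, b = shift_cost_65816(n), shift_cost_68000(n)
    if a < b:
        return "65816"
    if b < a:
        return "68000"
    return "tie"

for n in range(1, 8):
    print(n, shift_cost_65816(n), shift_cost_68000(n), faster_cpu(n))
```

With these numbers the 65816 wins for shifts of 1 and 2, the two tie at 3, and the 68000 wins from 4 to 7, which lines up with the lists. Shifting by 8 is a separate case because the 65816 can swap the accumulator bytes instead of shifting bit by bit.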

Here's some cringe fest logic from https://www.smwcentral.net/?p=viewthread&t=91363&page=4

Quote:

question: how long have you been beating this drum and had nobody care and blamed everybody else for not caring, from what i hear you have an old account here from like 2010 or something

also legit why do you think that sprite priority is causing any slowdown, im just, i dont get where the hell you drew that link from

you said, after eliding most of the content of my last post, that this is to

"get rid of the slowdown caused by the non-contiguous OAM."

but vanilla SMW doesn't do any sprite priority stuff of note, it just pre-assigns oam slots to sprite slots (btw you should definitely learn how people around here use words or youre going to be talking nonsense to us); the reason we use NSTL is because it runs out of oam slots, not time. the only sprites im aware of with any priority needs are the net koopas and they arent exactly notorious slowdown beasts

im assuming you got the thing about non-contiguous OAM from my post on the NSTL patch, but thats because the NSTL has to allocate tiles, not order them; if youre just ordering them you can treat them as contiguous since having an extra off-screen tile in the middle of your list isn't going to affect the priority literally at all

moreover, you absolute chumpo idiot, i personally have already written a patch that makes the NSTL allocation linear-time, and i didn't need to make 8 oam mirrors to do it, and didnt inexplicably tie oam allocation to creating an oam priority thing.

So in other words, I'm an idiot for thinking SMW allocates sprites in OAM based on sprite priorities? I mean, what the fuck does she think determines what sprite goes on top of what inside the sPPU?

Seems like they passive aggressively sort of ganged up on you, if that's even a thing.

Quote:

(btw you should definitely learn how people around here use words or youre going to be talking nonsense to us)

This was pretty funny. In other words, talk technologically illiterate so they can understand you.

I don't think the majority of them are actually interested in coding, but rather in messing around with a level editor. If they were more interested in the SNES hardware, they'd abandon that pos game engine immediately.

And yes, that girl probably doesn't know anything about the HiOAM table, or care to learn about it. You're probably wasting your time there, but you already knew that.

Espozo wrote:

Wouldn't a lower internal frequency give you more room for overclocking? How fast can you make a 6502 vs. a Z80 before it overheats? This isn't relevant to any system at the time, though.

Well, overclocking a CPU isn't interesting if the external parts don't follow... In fact, you can overclock the Genesis 68000 close to 12 MHz without too much trouble, while I think you can't overclock the 65816 at all, as the memory is already close to its limit.

You could probably get a 65816 @ 5 MHz back then (as the PCE was able to run a 6502 @ 7 MHz with some modification), but there is no point in doing that if you can't get the ROM working at that speed. In fact, ROM cost was the big deal here (not only the RAM).

Quote:

It depends; how much would 8 bit memory cost compared to 16 bit memory at half the frequency?

It doesn't really cost more to use 8-bit or 16-bit memory; you can use two 8-bit memory chips to make a 16-bit one... the cost is on the CPU / bus side here.

psycopathicteen wrote:

Opcodes where a 4 MHz 65816 would be faster than an 8 MHz 68000:

-branching

-shifting by less than 3

-shifting by 8

-ALU and load/store instructions that use immediate, absolute or indexed addressing (except when "clc" and "sec" are needed)

-non-word aligned memory accesses

Opcodes where an 8 MHz 68000 is faster:

-shifting by more than 3 but less than 8

-ALU and load/store instructions that use registers or register indirect addressing

-ALU instructions that require "clc" or "sec"

-32-bit ALU instructions

We can say that, but again, you will tend to use one or the other more depending on the CPU you are working on...

On a 68000 you tend to cache everything in registers and do all operations from them; you also try to optimize the code to take advantage of the 32/16 bits as much as possible. What is important is how quickly you can get through bottlenecks on these CPUs, and for me bottlenecks almost always come down to a data processing stream, more or less. If there is a lot of branching the 65816 can have the edge, but in general the 68000 will be faster at processing data (higher bandwidth / higher ALU rate with 32/16 bits).

Quote:

You could probably get a 65816 @ 5 MHz back then (as the PCE was able to run a 6502 @ 7 MHz with some modification), but there is no point in doing that if you can't get the ROM working at that speed. In fact, ROM cost was the big deal here (not only the RAM).

Doing a 1-phase 65816 is not like overclocking. A classic 6502 design, which works in 2 phases, accesses its RAM at twice the CPU speed, so a 1 MHz CPU needs 2 MHz RAM.

With a 1-phase design you need RAM at the same speed as the CPU, so 1 MHz RAM for a 1 MHz CPU.

So with the same RAM/ROM and a 1-phase 65816 you can go up to 5 MHz in the SNES without any design change, like Hudson did.

The 1 phase design was useful for other accesses like video or something else, useful for a PC that shares its main memory, but useless for a gaming system with dedicated VRAM / audio RAM.

PS: To be precise, in 2 phases the accesses took a little under 0.5 cycles, and a little under 1 cycle in 1 phase.

Quote:

In fact, you can overclock the Genesis 68000 close to 12 MHz without too much trouble, while I think you can't overclock the 65816 at all, as the memory is already close to its limit.

You're right, but not many MDs accept this frequency, whereas you can find SuperCPUs for the C64, which are all 65816s @ 14 MHz, overclocked to 20 MHz, close to 50% more.

The 65816 was very overclockable by itself, but not in the SNES.

Quote:

It doesn't really cost more to use 8-bit or 16-bit memory; you can use two 8-bit memory chips to make a 16-bit one... the cost is on the CPU / bus side here.

It depends on the modules available. This is why Sega put 8 KB of 100 ns SRAM on its Z80: completely useless, but I'm sure it came at a very good price, because the demand was for 16/32/64 KB modules and there was so much stock of 8 KB modules.

I don't see how it was expensive for NEC while faster SRAM was affordable for Sega; that makes no sense, and here I'm not even talking about the SNES's audio RAM.

Espozo wrote:

Seems like they passive aggressively sort of ganged up on you, if that's even a thing.

Quote:

(btw you should definitely learn how people around here use words or youre going to be talking nonsense to us)

This was pretty funny. In other words, talk technologically illiterate so they can understand you.

They mean purposely flip flopping the definition of "sprites" back and forth for the sake of disagreeing.

Quote:

I don't think the majority of them are actually interested in coding, but rather in messing around with a level editor. If they were more interested in the SNES hardware, they'd abandon that pos game engine immediately.

And yes, that girl probably doesn't know anything about the HiOAM table, or care to learn about it. You're probably wasting your time there, but you already knew that.

The people at smwcentral aren't even interested in actual game design. Let's say you have a pyramid level, and you have the idea of having some blocks coming in and out of the walls that you have to make timed jumps to. The very first response you get is "that will cause slowdown!"

SMWCentral users think the limits of the SMW engine are the limits of the Super NES because no comparable engine is available to them. No comparable engine has been created because an original engine needs original artwork, level designs, etc. as a demonstration. (These are things that Wizards of the Coast's Open Game License refers to as "Product Identity" and the industry calls "an IP".) And many who are creating engines along with artwork and level designs are more interested in a low-destructibility, hide-the-grid style of background art than the high-destructibility, embrace-the-grid style of classic Super Mario.

TOUKO wrote:

So with the same RAM/ROM and a 1-phase 65816 you can go up to 5 MHz in the SNES without any design change, like Hudson did.

So the PCE uses 7MHz RAM? I don't think I'm understanding this correctly...

TOUKO wrote:

You're right, but not many MDs accept this frequency, whereas you can find SuperCPUs for the C64, which are all 65816s @ 14 MHz, overclocked to 20 MHz, close to 50% more. The 65816 was very overclockable by itself, but not in the SNES.

That's what I was wondering. They'd probably be one of the most powerful chips used in an embedded system, assuming "fast" RAM chips are available for this use. (65816s are still being produced, if I'm not mistaken.) Then again, I can't think of a single appliance or whatnot that couldn't get by with a 1 MHz Z80.

Stef wrote:

In fact ROM cost was the big deal here (not only the RAM).

I know I complain about the SNES a lot, but I really don't know what Nintendo was thinking when they decided they needed a whopping 128KB of RAM, but that it could be slower than the CPU. What do you think it would have cost comparatively for Nintendo to have used 64KB of RAM at 3.58MHz?

Stef wrote:

(higher bandwidth / higher ALU rate with 32/16 bits)

The majority of 16-bit instructions on the 65816 only use one extra cycle; the ones that use two are basically all bit shift operations. I don't think X or Y being 16-bit makes a difference either, unless you're moving data in and out of them, in which case it's one extra cycle. As such, I didn't find any instructions where there is an extra speed penalty for both a 16-bit accumulator and 16-bit X and Y. (I'm of course basing what I've said on this: https://wiki.superfamicom.org/65816-reference) The 68000 is always going to be significantly better at 32-bit operations other than just moving data, unless you push the 65816 to well over half the frequency of the 68000.

psycopathicteen wrote:

They mean purposely flip flopping the definition of "sprites" back and forth for the sake of disagreeing.

Are you sure it's not that they didn't know the difference?

tepples wrote:

SMWCentral users think the limits of the SMW engine are the limits of the Super NES because no comparable engine is available to them.

I can think of 100 games on the SNES that couldn't work on that shitty engine without a complete rewrite.

The way forward would require a new engine, but that would require a new Product Identity. This can be prototyped in a retraux styled PC game using SDL, or it can be prototyped as a total conversion of SMW that replaces all graphics. Are the modders at SMWCentral up to the task of using zero SMW graphics?

Nah, keep the graphics the same, but add a bunch of cool new stuff.

Quote:

So the PCE uses 7MHz RAM? I don't think I'm understanding this correctly...

Yes, it uses 120 ns SRAM; otherwise it would have needed 60 ns.
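Those two figures can be roughly reproduced from the cycle time. This is only a sketch: it assumes the PCE CPU runs at about 7.16 MHz (the thread just says 7 MHz) and takes the access window to be a full cycle in a 1-phase design and half a cycle in a 2-phase design, ignoring setup and decoding margins. The function name is made up for illustration.

```python
# Memory access window per CPU cycle for 1-phase vs 2-phase designs.
# Assumptions (not from the thread): a 7.16 MHz CPU clock, and an access
# window of exactly 1/phases of a cycle, with no setup or decode margin.

def access_window_ns(cpu_mhz, phases):
    """Time available for one memory access, in nanoseconds."""
    cycle_ns = 1000.0 / cpu_mhz   # one CPU cycle in nanoseconds
    return cycle_ns / phases

one_phase = access_window_ns(7.16, 1)   # whole cycle available to memory
two_phase = access_window_ns(7.16, 2)   # only half a cycle available

print("1-phase window (ns):", round(one_phase, 1))
print("2-phase window (ns):", round(two_phase, 1))
```

A 120 ns part fits inside the 1-phase window with a little margin, while the 2-phase window is short enough that only something around 60 ns would fit once real-world margins are taken off.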

Quote:

Then again, I can't think of a single appliance or whatnot that couldn't get by with a 1MHz Z80.

Of course a 1 MHz Z80 would be very slow, but this processor was intended to be used at 3/3.5 MHz minimum, I think, but with slow RAM.

psycopathicteen wrote:

Nah, keep the graphics the same

If by "the same" you mean "the same as SMW", I was trying to avoid the project getting shut down by Nintendo's legal department, as many of these were.

tepples wrote:

SMWCentral users think the limits of the SMW engine are the limits of the Super NES because no comparable engine is available to them. No comparable engine has been created because an original engine needs original artwork, level designs, etc. as a demonstration.

That's something I've been intending to solve. Nova the Squirrel (and its engine) is a very Mario-like game that's completely free from any actual Mario assets or code, with a level editor that intentionally mimics Lunar Magic's interface (though it could be improved a lot). I could port the engine to SNES eventually, add slopes and vertical scrolling, and design some levels.